Robots Among Us

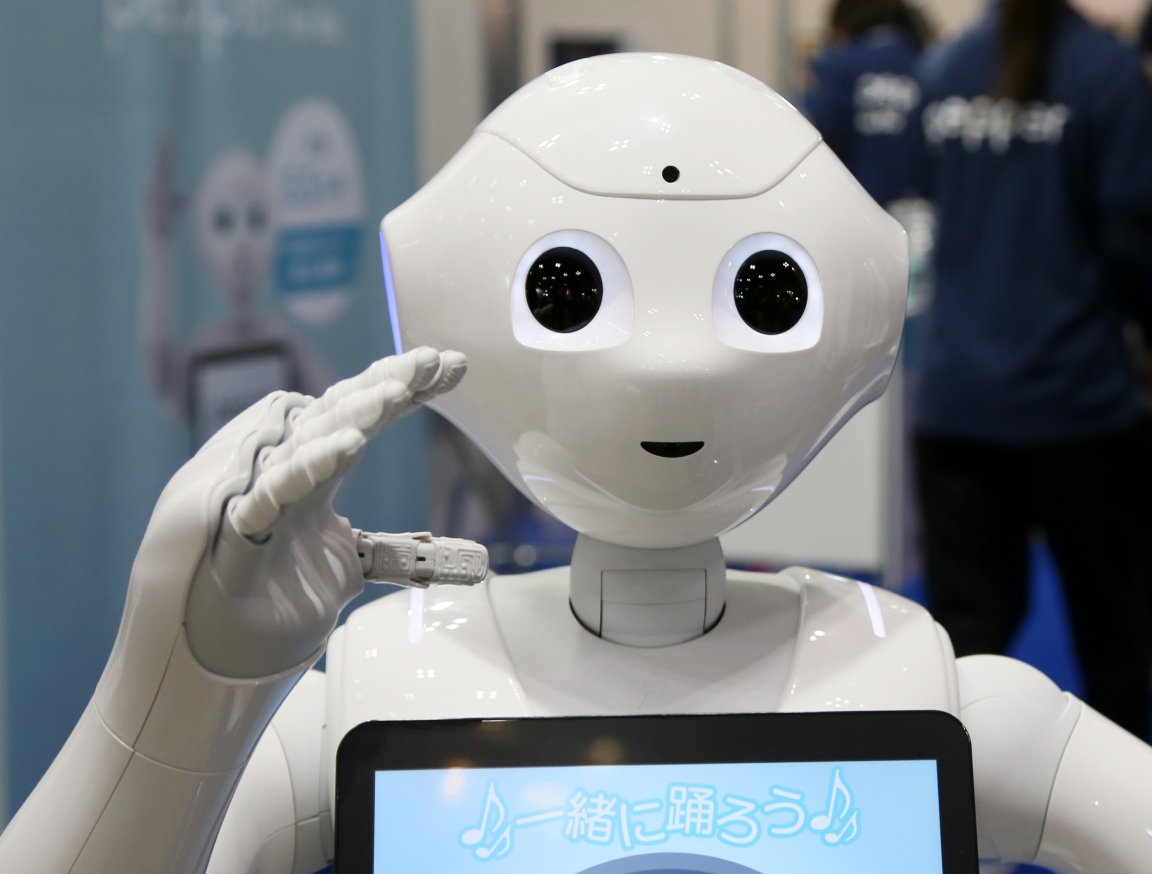

The rise of automation is prompting all kinds of questions about the future of work for human employees, but there’s also another important consideration at hand — how are we to interact with robots as they become more commonplace in our day-to-day lives?

As robots become more capable of performing tasks independently, it’s crucial that they’re taught how to communicate with the humans that they cross paths with, whether they’re co-workers or complete strangers. Natural language will play an important role, but it’s only one part of a greater whole.

More Than Words

When humans talk to one another, the words themselves make up only a small part of the information being relayed. Everything from facial expressions to the intonation of a person’s voice might give extra context or added insight into the topic at hand.

This is something that the scientists and engineers constructing the robots of tomorrow are careful to consider. A full vocabulary isn’t necessarily enough — there’s more to conveying a message than just finding the right words.

Baxter, a robot being developed by Rethink Robotics, uses a pair of eyes on a screen to let people know what it is going to do next. The display is on a swivel, so the robot can direct its attention to one of its two arms before that arm performs an action, making sure that any bystanders are well aware of what kind of motion is coming.

This is a two-way street. As well as being able to signal their intentions to human onlookers, robots will need to be able to pick up on social cues dropped by others in order to be effective in their roles.

We’ve all been locked in a “hallway dance” when trying to give way to another person walking in the opposite direction down a corridor. Nine times out of ten, it’s no big deal, but in a busy hospital ward, when one dance partner is a bulky robot, it could cause some problems.

A team at MIT’s Interactive Robotics Group led by Dr. Julie Shah has used machine learning techniques to teach a robot to observe anticipatory indicators from a human that can reveal which way they’re planning to turn. We know how to pick up on these cues from experience, but machines need to be taught the basics from scratch.

Customer Service

At present, we tend to think of robots as being good at manual labor. They can be built strong, so it makes sense to think of them lifting heavy loads and doing other physically intensive tasks.

Further advances in helping robots to interact with humans will allow them to take on a much wider range of vocations. Customer service positions, especially those where there’s a limited range of responsibilities, will be a perfect fit for machines once they’re able to hold a natural, productive conversation reliably.

A robot named Mario has already been trialled as a concierge in Belgium, handing out room keys and ingratiating himself with guests via a high-five. A project called RAVE is creating a robot that can teach young children without human input, holding their attention for six minutes at a time.

Robots excel in certain tasks because of their non-human qualities — they don’t tire, and they won’t turn their nose up at unpleasant or unfulfilling tasks.

However, as they take on a more diverse set of roles, they’ll need to learn some distinctly human skills. Being able to communicate with people is a key part of all kinds of vocations, and it doesn’t come naturally to a robot.