Researchers are sifting through an avalanche of data produced by one of the largest cosmological simulations ever performed, led by scientists at the U.S. Department of Energy’s (DOE) Argonne National Laboratory.

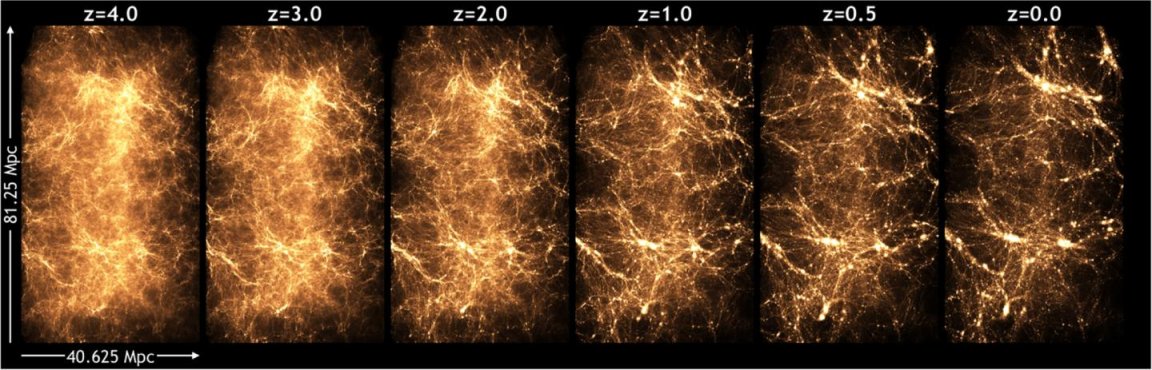

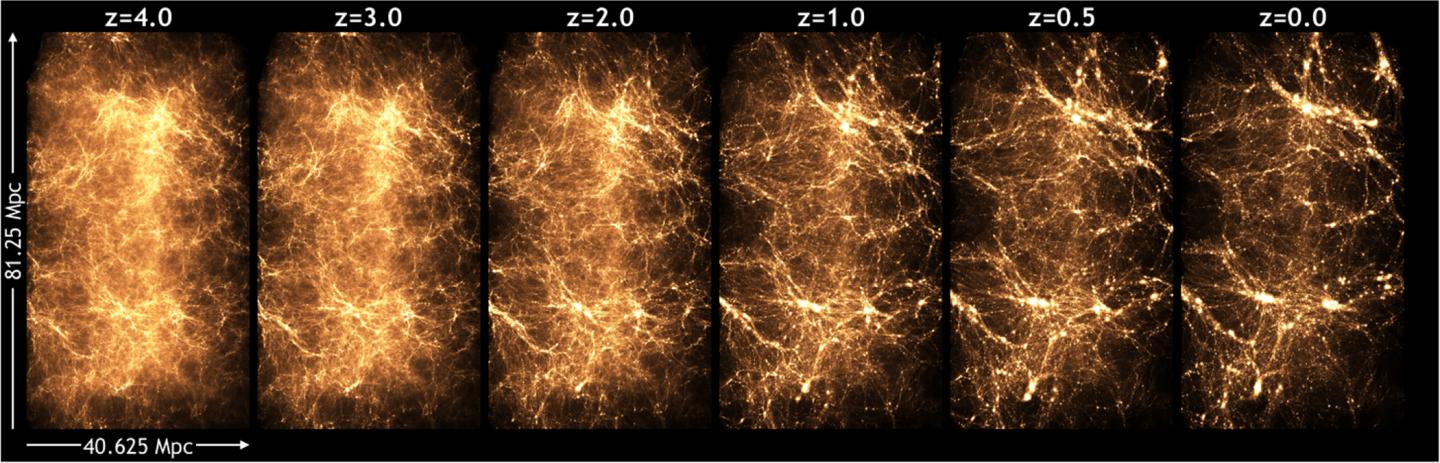

The simulation, run on the Titan supercomputer at DOE’s Oak Ridge National Laboratory, helped model the evolution of the universe from just 50 million years after the Big Bang to the present day—from its earliest infancy to its current adulthood. Over the course of 13.8 billion years, the matter in the universe clumped together to form galaxies, stars and planets; but we’re not sure precisely how.

These kinds of simulations help scientists understand dark energy, a form of energy that affects the expansion rate of the universe and, in turn, the distribution of both galaxies, which are composed of ordinary matter, and dark matter, a mysterious kind of matter that no instrument has directly measured so far.

Intensive sky surveys with powerful telescopes, like the Sloan Digital Sky Survey and the new, more detailed Dark Energy Survey, show scientists where galaxies and stars were when their light was first emitted. And surveys of the Cosmic Microwave Background, light remaining from when the universe was only 300,000 years old, show us how the universe began: “very uniform, with matter clumping together over time,” said Katrin Heitmann, an Argonne physicist who led the simulation.

The simulation fills in the temporal gap to show how the universe might have evolved in between: “Gravity acts on the dark matter, which begins to clump more and more, and in the clumps, galaxies form,” said Heitmann.

Called the Q Continuum, the simulation involved half a trillion particles, dividing the universe up into cubes with sides 100,000 kilometers long. This makes it one of the largest cosmology simulations at such high resolution. It ran using more than 90 percent of the Titan supercomputer. For perspective, typically less than one percent of jobs use 90 percent of the Mira supercomputer at Argonne, said officials at the Argonne Leadership Computing Facility, a DOE Office of Science User Facility. Staff at both the Argonne and Oak Ridge computing facilities helped adapt the code for its run on Titan.

“This is a very rich simulation,” Heitmann said. “We can use this data to look at why galaxies clump this way, as well as the fundamental physics of structure formation itself.”

Analysis has already begun on the two and a half petabytes of data that were generated, and will continue for several years, she said. Scientists can pull information on such astrophysical phenomena as strong lensing, weak lensing shear, cluster lensing and galaxy-galaxy lensing.

The code used to run the simulation, called the Hardware/Hybrid Accelerated Cosmology Code (HACC), was first written in 2008, around the time scientific supercomputers broke the petaflop barrier (a quadrillion floating-point operations per second). HACC is designed with an inherent flexibility that enables it to run on supercomputers with different architectures.

(Source: Department of Energy/Argonne National Laboratory)