A new website, “NotRealNews.net,” uses artificial intelligence to populate what resembles a news site’s home page, complete with AI-written fake news stories.

The website, a project by the AI development company Big Bird, is supposed to be a showcase of how the company’s algorithms can help journalists quickly write compelling news, according to the website’s “about” page.

But despite the website’s title, the realistic-enough articles aren’t labeled as fake news or the marketing stunt that they are — meaning their existence is more likely to make journalists pull out their hair than it is to help them.

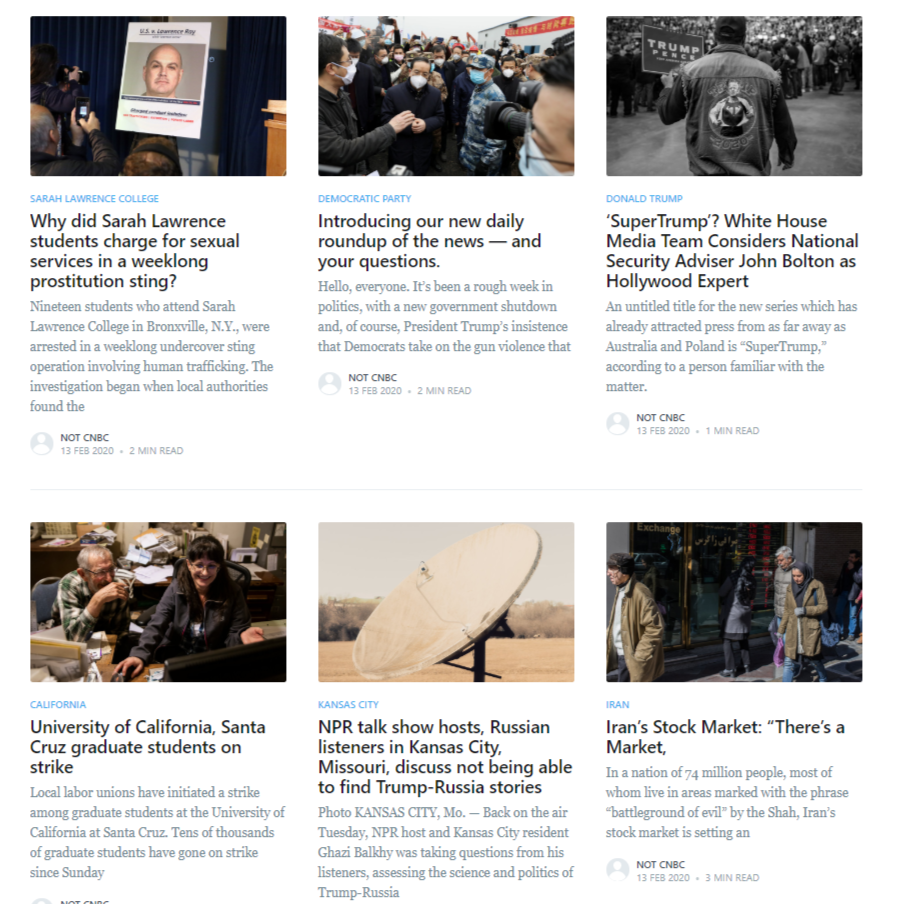

A quick scroll down the website’s home page reveals a smattering of political, cultural, and scientific news stories.

Aside from entertaining algorithmic errors, like the headlines “Iran’s Stock Market: ‘There’s a Market’” and “In wake of death of British soldier, thousands as for plastic-free pubs,” the articles are mostly convincing. In fact, many appear to be closely based on actual news stories, like the resignation of UK finance minister Sajid Javid.

It bears stating clearly: this is dangerous. Some articles on the website contain fictional updates from the U.S. presidential race. Others spread misinformation about the ongoing coronavirus outbreak, a news cycle that’s already full of confusing and sometimes conflicting reports. One headline describes a sexual assault.

The idea, according to Big Bird’s logic, is that journalists could save time by starting from AI-generated article templates, then validating and fact-checking them: removing the algorithm’s errors and filling in factual information.

Basically, it would turn reporters into the algorithm’s editors. But given the system’s believable prose and proximity to the truth, that arrangement seems almost guaranteed to let some fake news slip through the cracks.

It all raises a crucial question: Given that reporters are already perfectly capable of writing the news without a tech company’s help, who is this for?

As of press time, Big Bird has not responded to Futurism’s request for comment.
