Going Fully Autonomous

NuTonomy, a taxi startup, claims their fleet of fully autonomous self-driving taxis will hit the road in two years; last year, Fisker Inc. unveiled the Fisker EMotion, a car they assert will carry fully autonomous driving features; and right before 2016 wound to a close, Elon Musk boldly announced that every Tesla vehicle will be fully autonomous by 2017.

Things are apparently looking good for the future of autonomous driving. But the latest statement made by Gill Pratt, the head of Toyota’s Research Institute, brings the world’s anticipation for a driverless future to a sobering halt – he asserts “we’re not even close to Level 5.”

Level 5 autonomy is the holy grail of autonomous driving – it means a vehicle can operate with zero human intervention. But as Pratt points out, the journey to Level 5 is a slow one:

Historically human beings have shown zero tolerance for injury or death caused by flaws in a machine. As wonderful as AI is, AI systems are inevitably flawed… We’re not even close to Level 5. It’ll take many years and many more miles, in simulated and real world testing, to achieve the perfection required for level 5 autonomy.

Level 4 vehicles – cars that can perform all safety-critical driving functions for an entire trip – are more feasible, according to Pratt. Even so, reaching that point could take decades.

The Future of Autonomous Driving

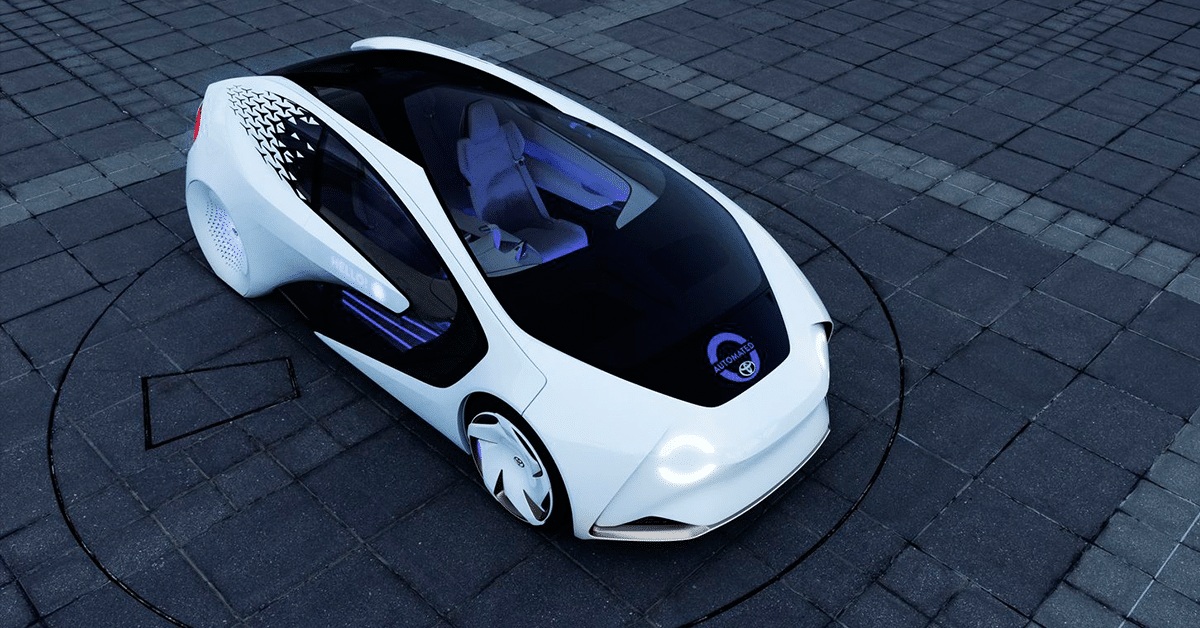

Despite Toyota’s pragmatic approach to autonomous vehicles, the company isn’t shying away from introducing its own take on them. At the same time that Pratt was urging the world to be more aware of the nuances and implications of putting driverless cars on the road, Toyota unveiled the Concept-i.

Unlike most autonomous cars, which focus on a vehicle’s capability to operate without a human behind the wheel, the Concept-i “demonstrates Toyota’s view that vehicles of the future should start with the people who use them,” the company explained in a press release.

As described in a previous Futurism article:

Powered by an artificial intelligence (AI) agent nicknamed “Yui,” the car learns about the driver, monitoring everything from their schedule and driving patterns to their responsiveness and emotions. Communication between Yui and the driver isn’t strictly voice-based — the interior is designed so that Yui can use light, sound, and touch to convey information.

Shifting the spotlight to how humans can engage more seamlessly with a car, rather than pushing for complete independence from human intervention, is in line with the manufacturer’s belief that autonomous driving is not a technology we can afford to rush.
