This article is part of a series about season four of Black Mirror, in which Futurism considers the technology pivotal to each episode and evaluates how close we are to having it. Please note that this article contains mild spoilers. Season four of Black Mirror is now available on Netflix.

A Twisted Museum

Miles and miles of desert and open highway — and then, a small roadside museum, one of those things people pull over and visit to stretch their legs but rarely seek out on purpose. It looks uninhabited; its windows are barred with rusty metal, making it a dark blemish on the peaceful, dusty continuity of the desert.

Sometimes, things look exactly as they should… because what’s inside Rolo Haynes’s Black Museum is just as twisted and dark as its exterior suggests.

“There’s a sad, sick story behind almost everything in here,” whispers Rolo Haynes, owner and proprietor, to the museum’s sole visitor. Haynes has collected criminological artifacts, each of which tells its own story of hope, pain, and horror. But unlike a collection of medieval torture instruments in the basement of a history museum (and in true Black Mirror fashion) each artifact was once a gleaming specimen of cutting-edge neurotechnology.

Rolo describes each artifact to the visitor in flashbacks. The first is a web of glowing diodes, draped over a mannequin head — the first piece of Rolo’s collection that foreshadows how “the main attraction came to be” (you’ll have to watch the show to see what that is exactly).

One of the most disturbing sequences hinges on headgear-like tech. In its former life, we learn, the cap-like device would non-invasively gather information about its wearer’s physical sensations. The transceiver would then send that information wirelessly to a neural implant installed at the base of a doctor’s skull, right behind the left ear. By slipping the headgear onto a patient, the doctor could feel the physical sensations of the wearer.

The doctor could experience the patient’s exact physical sensations, figure out what was wrong whether or not the patient could describe it, and often deliver a near-perfect diagnosis. The doctor wouldn’t suffer any physical damage, no matter how severe the discomfort or pain, but the frequent sensations of pain had some, well, unforeseen consequences.

But how long will it be until we have to seriously contend with this technology and the potential consequences that it brings with it?

![Black Mirror - Black Museum | Official Trailer [HD] | Netflix thumbnail](https://i.ytimg.com/vi/CV0J3Bq3BIc/hqdefault.jpg)

Picking Up Signals

A device that can transmit one person’s physical sensations to another is not as impossible as it sounds, though the technology has a long way to go until it’s able to do so perfectly. The entire process can be split into three steps: (a) recording signals from the brain, (b) decoding them and translating them into a language that the receiver brain can understand, and (c) simulating the sensation in the receiver brain.

Let’s start with the first step: recording signals from the brain. Electrodes or fiber-optics can record information such as pain signals from the sender brain. And the hardware required to link a human brain to a computer has become smaller over time, making the prospect of implanting a device or antenna a very real possibility. But any of these devices, no matter how small, would need to be surgically placed in the brain, and such an invasive procedure involving the brain is still risky and imperfect.

Image Credit: Netflix

Beyond the risks of the surgery itself, the recipient’s body could reject the implant, or the device could deteriorate or malfunction over time.

However, there’s a non-invasive way to do the same thing, in which a device reads brain signals from the surface of the skin. “If you go the non-invasive route, you have the luxury of recording from multiple sites, sometimes the entire brain, without any surgery. However, you lose precision,” says Andrea Stocco, an assistant professor at the Department of Psychology and Institute for Learning and Brain Sciences at the University of Washington in Seattle. That is, because the sensor sits so far from where the signals originate in the brain, these devices often can’t pinpoint a signal’s source more precisely than a general area.

The method most often used to do that today is called electroencephalography (EEG). It measures electrical signals in the brain, and its headset looks similar to Haynes’ transceiver in the show. EEGs can help doctors diagnose and treat brain disorders in which a lot of signals are going haywire, such as epilepsy, but they’re not precise enough to do much more than that. “EEG headsets can be made portable and cheap, but they have terrible problems in isolating signals, since they tend to pick up signals from all over the brain,” Stocco says.
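To see why a sensor on the scalp struggles to isolate one signal, here is a toy sketch in Python. It assumes, purely for illustration, that each sensor records a weighted sum of a few underlying sources and that the weights fall off with squared distance. The positions, the falloff rule, and every name in it are hypothetical and not taken from any real EEG system.

```python
# Toy model: each sensor sees a blend of every source (all values hypothetical).

def mixing_weight(sensor_pos, source_pos, depth):
    """Weight falls off with squared distance; depth mimics skull and scalp."""
    distance_sq = (sensor_pos - source_pos) ** 2 + depth ** 2
    return 1.0 / distance_sq

def sensor_reading(sensor_pos, sources, depth):
    """Sum the contribution of every source at one sensor."""
    return sum(
        amplitude * mixing_weight(sensor_pos, pos, depth)
        for pos, amplitude in sources
    )

def share_from_nearest(sensor_pos, sources, depth):
    """Fraction of the reading that comes from the closest source."""
    nearest_pos, nearest_amp = min(sources, key=lambda s: abs(s[0] - sensor_pos))
    total = sensor_reading(sensor_pos, sources, depth)
    return nearest_amp * mixing_weight(sensor_pos, nearest_pos, depth) / total

# Three equally strong sources, 1 cm apart along a line (positions in cm).
sources = [(0.0, 1.0), (1.0, 1.0), (2.0, 1.0)]

implanted = share_from_nearest(1.0, sources, depth=0.1)  # sensor almost on the source
scalp = share_from_nearest(1.0, sources, depth=2.0)      # sensor about 2 cm away

print(f"implanted sensor: {implanted:.0%} of signal from nearest source")
print(f"scalp sensor:     {scalp:.0%} of signal from nearest source")
```

With these made-up numbers, the implanted sensor gets about 98 percent of its reading from the nearest source, while the scalp sensor gets only about 38 percent, with the rest coming from its neighbors.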

Sending Simplistic Signals

Steps two and three in the process, translating these signals into patterns of neural activity that the receiver can understand and then recreating them, are perhaps the most difficult, and without a clean reading they become harder still. Robert Gaunt, assistant professor of physical medicine and rehabilitation at the University of Pittsburgh, has taken on this challenge. But instead of relaying physical sensation from brain to brain, he has helped restore sensation in those lacking it: a device he’s working on allowed an amputee to feel touch again via a robotic arm.

Using electrical stimulation in the brain, “we can create perceptions that people would describe as being cutaneous, or touch, in nature at specific locations on the body,” Gaunt tells Futurism, no matter whether that part of the body is physically present or connected to the brain.

But your sense of touch is surprisingly complex, and simulating sensation in those who can no longer feel is still early in its development. So far, the technology can’t make many distinctions between those “cutaneous” perceptions — say, the temperature and pressure of holding an ice pack to the skin. And some sensations are more difficult to conjure than others, just because of the region of the brain that controls them. “It’s easier to create sensations of touch, pressure, vibration, or a tingle than it is with pain. And that has got to do with some detailed physiological reasons about the sizes of axons and nerve cells themselves,” Gaunt says.

Touch-based sensations are multimodal: a variety of sensors (nerves) in our hands send small snippets of different information to the brain, which synthesizes the entire sensation. To recreate that perfectly in a lab, scientists would have to stimulate each of those inputs in exactly the right combination and relay them at the right speed. In short, it would be a huge challenge.

Furthermore, no two brains are the same. “Neural codes differ from individual to individual. Although there is a fair degree of similarity, especially at the level of brain architecture, there are also many differences between individual brains,” Stocco says. So even if we could perfectly replicate all of these signals, “it is not possible to simply ‘copy’ a pattern of activity from one brain to another; you would need to adapt and ‘translate’ it,” Stocco says.

Translating these brain signals is still very complex. Neurons in the brain all act a little differently, and scientists are just starting to get a sense of how to manipulate the communication system between them. “Every time you stimulate a neuron, you create a complex cascade of effects in a dynamic system. That means that, even if you fire your probe at 50Hz [for example], the cells nearby might not be responding at the same frequency.”
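The point about a 50 Hz probe can be illustrated with a toy simulation. The sketch below uses a leaky integrate-and-fire cell, a common textbook simplification of a neuron, and is not a model of any experiment described here. The `kick` parameter, standing in for how strongly a cell is coupled to the probe, and all numeric values are assumptions chosen for illustration.

```python
import math

def count_spikes(kick, stim_hz=50, duration_ms=1000, tau_ms=30.0, threshold=1.0):
    """Count spikes of a toy leaky integrate-and-fire cell driven by pulses."""
    period_ms = 1000 // stim_hz          # 20 ms between pulses at 50 Hz
    decay = math.exp(-1.0 / tau_ms)      # membrane leak per 1 ms step
    voltage = 0.0
    spikes = 0
    for t in range(duration_ms):
        voltage *= decay                 # charge leaks away every millisecond
        if t % period_ms == 0:
            voltage += kick              # a stimulation pulse arrives
        if voltage >= threshold:
            spikes += 1                  # the cell fires, then resets
            voltage = 0.0
    return spikes

strongly_coupled = count_spikes(kick=1.2)  # hypothetical cell right next to the probe
weakly_coupled = count_spikes(kick=0.6)    # hypothetical cell a little farther away

print("stimulation: 50 pulses per second")
print("strongly coupled cell:", strongly_coupled, "spikes per second")
print("weakly coupled cell:  ", weakly_coupled, "spikes per second")
```

With these invented parameters, the strongly coupled cell fires on every pulse, 50 times in the simulated second. The weakly coupled cell needs three pulses to reach threshold, so it fires only 16 times even though the probe never changes its rhythm.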

So we’re still a ways from being able to stimulate the brain all that precisely.

Feeling The Future

So let’s get to it then: how far are we, exactly, from being able to read sensations, decode them, and successfully transmit them to a receiver brain? Rather than resorting to highly invasive neural stimulation or applying electricity to the skin, Stocco believes the future lies in minimally invasive technologies. To record signals, “you could slip a network of tiny cortical sensors just underneath the skull, and have it reside permanently,” sort of the way the cap works in Black Mirror, except it would be placed directly on the brain.

To stimulate the brain, Stocco is betting on ultrasound. You’ve probably heard of ultrasound: it’s been used in medicine since World War II to do things like take images of fetuses or open the blood-brain barrier to deliver drugs. In 2014, researchers at Virginia Tech attempted to modulate neurons firing in the brain using a focused beam of ultrasound waves sent through the skull. The non-invasive experiment was not able to make participants feel something that wasn’t there, but the ultrasound helped them better distinguish between two stimuli.

It’s not quite Black Mirror technology, but it’s an interesting finding that could warrant further study.

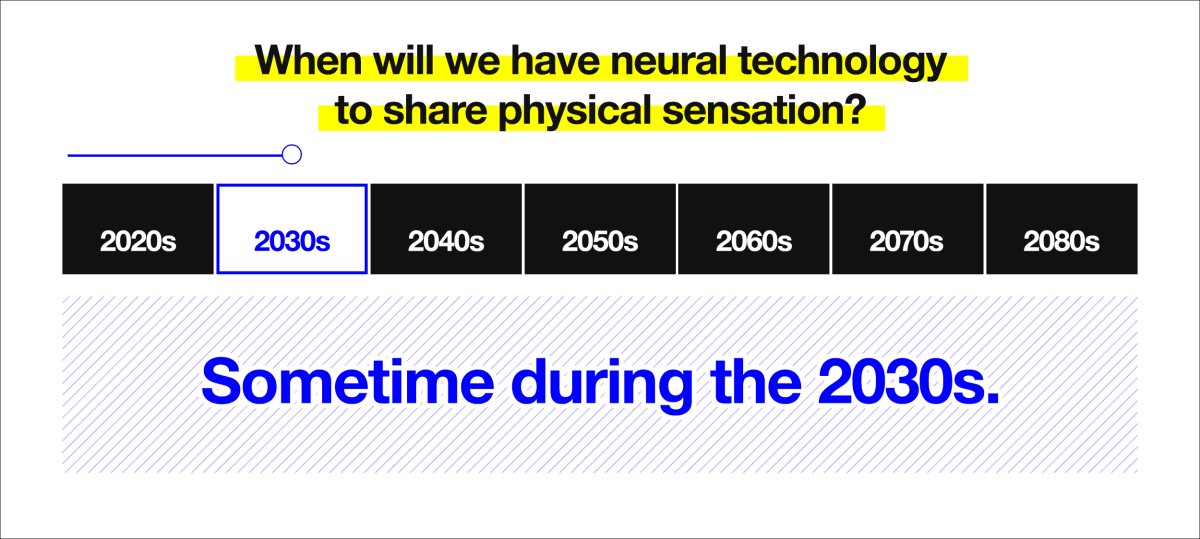

Even though the technology overall has a ways to go, it’s changing fast. And Stocco is optimistic that we could send physical sensations from one brain to the other pretty soon. “In twenty years, we have moved from crude pilots to having working limb prosthetics and cochlear implants, and as of now even a working memory prosthetic. My bet is that something close to a full neural interface that would let us feel what others feel could be reached by the end of 2038,” Stocco says.