Want your news delivered with the icy indifference of a literal robot? You might want to bookmark the newly launched site Knowhere News. Knowhere is a startup that combines machine learning technologies and human journalists to deliver the facts on popular news stories.

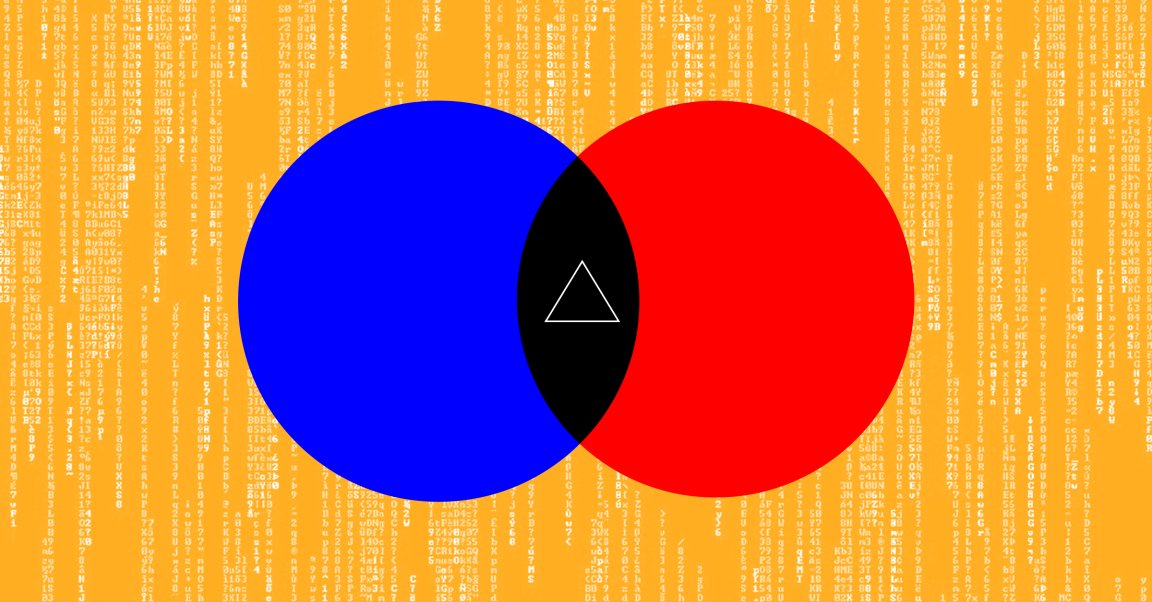

Here’s how it works. First, the site’s artificial intelligence (AI) chooses a story based on what’s popular on the internet right now. Once it picks a topic, it looks at more than a thousand news sources to gather details. Left-leaning sites, right-leaning sites – the AI looks at them all.

Then, the AI writes its own “impartial” version of the story based on what it finds (sometimes in as little as 60 seconds). This take on the news contains the most basic facts, with the AI striving to remove any potential bias. The AI also takes into account the “trustworthiness” of each source, something Knowhere’s co-founders preemptively determined. This ensures a site with a stellar reputation for accuracy isn’t overshadowed by one that plays a little fast and loose with the facts.
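Knowhere hasn't published how its system actually weighs sources, so treat the following as a toy illustration of the general idea rather than the company's method. This minimal Python sketch assumes each source gets a hand-assigned trust score and that a "fact" makes it into the impartial version only when enough trust-weighted sources report it. The function name `weighted_claims`, the `threshold` parameter, and the sample outlets and scores are all hypothetical.

```python
from collections import defaultdict


def weighted_claims(reports, trust, threshold=0.5):
    """Return claims whose trust-weighted support meets the threshold.

    reports:   dict mapping source name -> list of claims it reports
    trust:     dict mapping source name -> trust score (hypothetical, hand-assigned)
    threshold: minimum share of total trust a claim needs to be kept
    """
    # Total trust across all sources that reported on the story.
    total = sum(trust[source] for source in reports)
    if total == 0:
        return []

    # Add up the trust of every source that backs each claim.
    support = defaultdict(float)
    for source, claims in reports.items():
        for claim in set(claims):  # count each source once per claim
            support[claim] += trust[source]

    # Keep only claims backed by a large enough share of total trust.
    return sorted(c for c, w in support.items() if w / total >= threshold)


# Made-up example data: three outlets, three claims.
reports = {
    "outlet_a": ["census adds citizenship question", "california sues"],
    "outlet_b": ["census adds citizenship question"],
    "outlet_c": ["liberals outraged"],
}
trust = {"outlet_a": 0.9, "outlet_b": 0.8, "outlet_c": 0.2}

print(weighted_claims(reports, trust))
```

In this sketch, a claim repeated by two well-trusted outlets survives, while one reported only by a low-trust outlet gets dropped. That is the same effect the article describes: a reliable source isn't drowned out by a sloppy one.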

For some of the more political stories, the AI produces two additional versions labeled “Left” and “Right.” Those skew pretty much exactly how you’d expect from their headlines:

- Impartial: “US to add citizenship question to 2020 census”

- Left: “California sues Trump administration over census citizenship question”

- Right: “Liberals object to inclusion of citizenship question on 2020 census”

Some controversial but not necessarily political stories receive “Positive” and “Negative” spins:

- Impartial: “Facebook scans things you send on messenger, Mark Zuckerberg admits”

- Positive: “Facebook reveals that it scans Messenger for inappropriate content”

- Negative: “Facebook admits to spying on Messenger, ‘scanning’ private images and links”

Even the images used with the stories occasionally reflect the content’s bias. The “Positive” Facebook story features CEO Mark Zuckerberg grinning, while the “Negative” one has him looking like his dog just died.

Knowhere’s AI isn’t putting journalists out of work, either.

Editor-in-chief and co-founder Nathaniel Barling told Motherboard that a pair of human editors review every story. This ensures you feel like you’re reading something written by an actual journalist, and not a Twitter chatbot. Those edits are then fed back into the AI, helping it improve over time. Barling himself then approves each story before it goes live. “The buck stops with me,” he told Motherboard.

This human element could be the tech’s major flaw. As we’ve seen before, AIs tend to take on the biases of their creators, so Barling and his editors will need to be as impartial as humanly possible (literally) to ensure the AI retains its impartiality.

Knowhere just raised $1.8 million in seed funding, so investors clearly think it has the potential to change how we get our news. But will it be able to reach enough people, and the right people, to really matter?

Impartiality is Knowhere’s selling point, so if you think it sounds like a site you want to visit, you’re probably someone who already values impartiality in news. Awesome. You aren’t the problem.

The problem is some people are perfectly happy existing in an echo chamber where they get news from a source that reflects what they’re thinking. And if you’re one of those news sources, you don’t want to alienate your audience, right? So you keep feeding the same comfortable readers the same biased stories.

This wouldn’t be such a big deal if the media status quo didn’t wreak havoc on our society, our democracy, and our planet.

So, impartial stories written by AI. Pretty neat? Sure. But society-changing? We’ll probably need more than a clever algorithm for that.