A Good First Impression

We’re getting steadily better at creating artificial intelligence (AI) that can think and act like humans. Beyond the relatively cut-and-dried tasks involved in fields like healthcare, transportation, and manufacturing, we’re also starting to see AI capable of more subjective work, such as making music and movie trailers that closely resemble human-made ones. Next up on the road to being human: making a snap judgment based on a person’s appearance.

Researchers from the University of Notre Dame have created an algorithm that judges a person’s face for characteristics such as trustworthiness or dominance. To do this, they first asked human participants to judge 6,300 black-and-white pictures of faces according to perceived trustworthiness, dominance, IQ, and age. The majority of these images, along with their labels, were then used to train and fine-tune the algorithm.

After that, the machine was tested to see whether it responded to the remaining 100 images the same way humans did. Not only did it make roughly the same first-impression judgments as the humans, but further testing showed that it also judged the faces using the same features humans use (e.g., the mouth to indicate trustworthiness, a lowered brow for dominance).
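The train-then-test setup described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' code: the helper names `split_holdout` and `pearson` are hypothetical, the image IDs are placeholders, the scores are made up, and Pearson correlation is just one plausible way to measure how closely machine and human ratings agree (the article does not say which measure the study used).

```python
import random
from statistics import mean


def split_holdout(items, test_size=100, seed=0):
    """Shuffle a copy of items and hold out test_size of them for testing."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    return shuffled[test_size:], shuffled[:test_size]


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


# Placeholder IDs standing in for the 6,300 labeled face images.
image_ids = list(range(6300))
train_ids, test_ids = split_holdout(image_ids, test_size=100)

# Made-up trustworthiness scores for illustration only (not study data).
human_scores = [0.2, 0.5, 0.9, 0.4]
model_scores = [0.25, 0.45, 0.8, 0.5]
agreement = pearson(human_scores, model_scores)
```

A value of `agreement` close to 1 would mean the machine ranks faces much as the human raters do; keeping the test images out of training is what makes that comparison meaningful.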

The Future of Casting?

On the surface, this research may seem rather inconsequential, but these types of algorithms could have surprising uses.

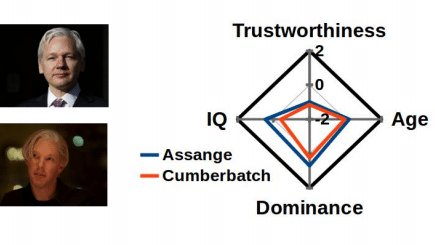

As part of the study, the researchers asked the machine to judge the faces of Edward Snowden and Julian Assange according to trustworthiness and dominance, and then compared the judgments to those of the actors who played the men in films (Joseph Gordon-Levitt and Benedict Cumberbatch, respectively). They found that Snowden’s ratings were similar to Gordon-Levitt’s and Assange’s to Cumberbatch’s, which could imply that these casting choices would be well received by audiences.

In a similar vein, the algorithm could be applied to politics, helping parties pick politicians with trustworthy faces, or to other research and marketing tests to predict initial reactions to spokespeople or maybe even products. Changes in perception over time can also be measured by taking multiple ratings, just as the researchers did when they applied their algorithm to each frame in a movie.
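To make the frame-by-frame idea concrete, here is a minimal sketch of how a series of per-frame ratings might be smoothed to show a trend. It assumes the ratings already exist as a list of numbers; the function name `moving_average`, the window size, and the scores themselves are all made up for illustration and do not come from the study.

```python
def moving_average(scores, window=3):
    """Average each run of `window` consecutive scores to smooth out jitter."""
    if window < 1 or window > len(scores):
        raise ValueError("window must be between 1 and len(scores)")
    return [
        sum(scores[i:i + window]) / window
        for i in range(len(scores) - window + 1)
    ]


# Hypothetical trustworthiness scores for five consecutive frames.
frame_scores = [0.2, 0.4, 0.6, 0.8, 1.0]
smoothed = moving_average(frame_scores, window=3)

# Net change in perceived trustworthiness across the smoothed series.
trend = smoothed[-1] - smoothed[0]
```

A positive `trend` would suggest the face is coming across as more trustworthy as the scene goes on, while smoothing keeps a single odd frame from dominating the picture.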

Perhaps most interesting would be seeing how the people on the other end of these judgments react. If you could find out from an impartial machine that the world likely perceives you as untrustworthy, more or less intelligent than you are, older or younger, how would that affect you? Would you try to change your demeanor?