Time for an upgrade

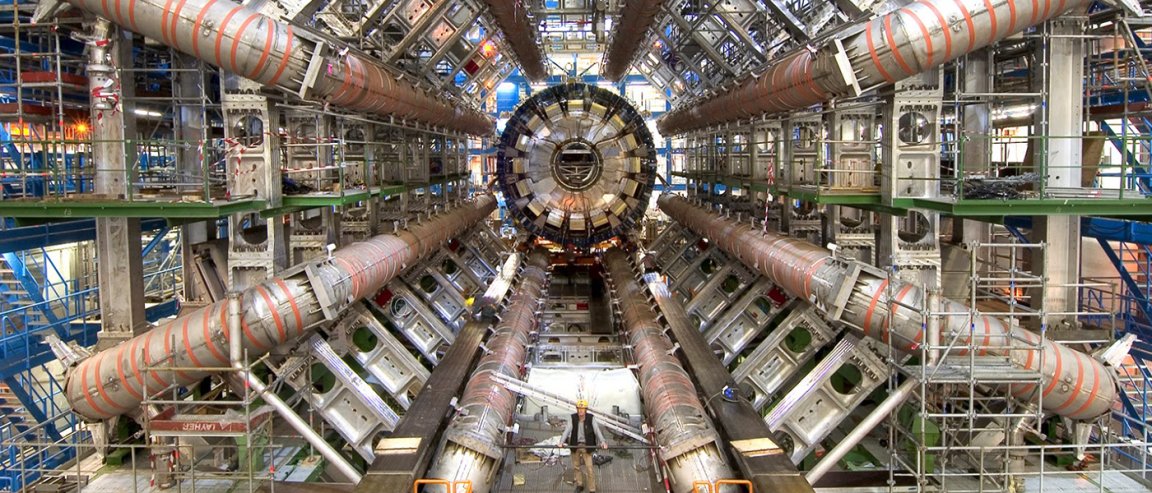

The Large Hadron Collider (LHC) is arguably one of the most important scientific tools humanity has ever come up with. It allows us to probe the inner workings of the universe, giving us a glimpse of what happens at the subatomic level.

But this incredibly complex machine is close to experiencing a problem familiar to anyone with a computer—it is running out of disk space.

Given how much work the LHC does, this isn’t super surprising. It is actually colliding particles about 70% of the time, more than double its expected rate.

“The number of hard drives that we buy and store the data on is determined years before we take the data, and it’s based on the projected LHC uptime and luminosity,” said Jim Olsen, a physics professor working on the CMS project. “Because the LHC is outperforming all estimates and even the best rosy scenarios, we started to run out of disk space. We had to quickly consolidate the old simulations and data to make room for the new collisions.”

Dark Sectors

CERN, which built the LHC, has been looking at different approaches to its data problem. Of course, it is buying more hard drives to expand its storage facilities, which currently have a 250-petabyte capacity. But it is also exploring options such as a hybrid system that incorporates cloud computing into storage and data analysis.

Obviously this is a big issue, but it also underscores the importance of the LHC in our quest to understand the universe around us. Experiments at the LHC discovered the Higgs boson, a holy grail of particle physics, and collision experiments don’t seem to be slowing down.

In fact, LHC experiments could shed light on the dark sectors of physics, or even reveal properties that don’t fit the Standard Model. Some of the latest experiments, TOTEM and ATLAS/ALFA, could even help us understand the dynamics of cosmic rays.

We just need to clear up some disk space first.