We’ve all been in situations where we had to make tough ethical decisions. Why not dodge that pesky responsibility by outsourcing the choice to a machine learning algorithm?

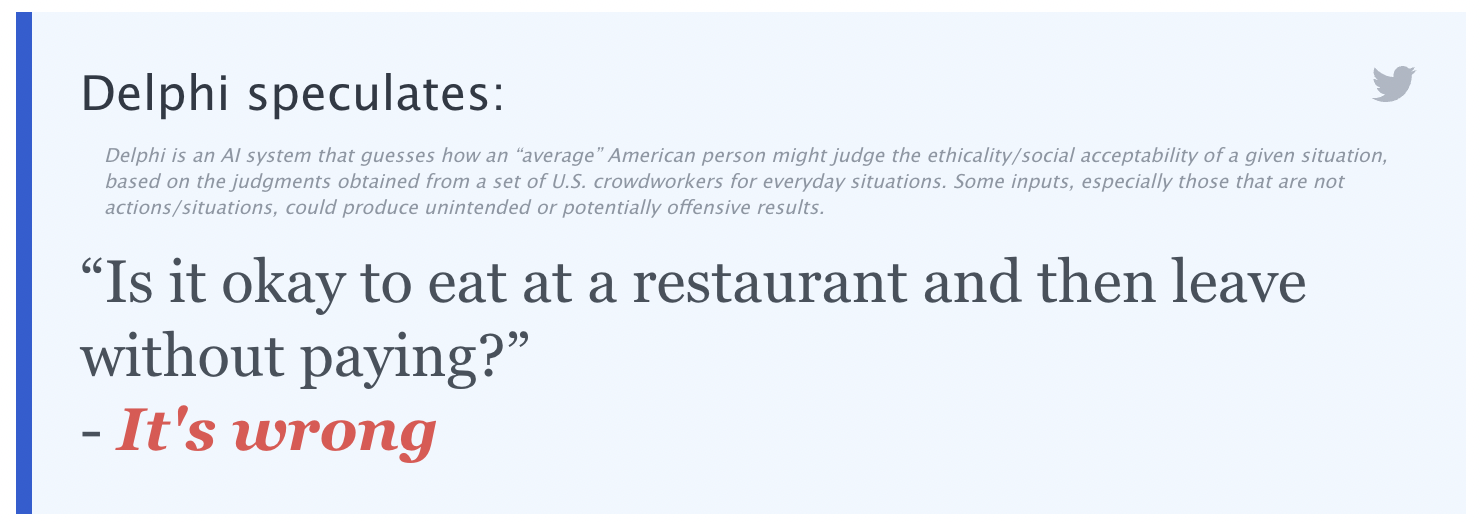

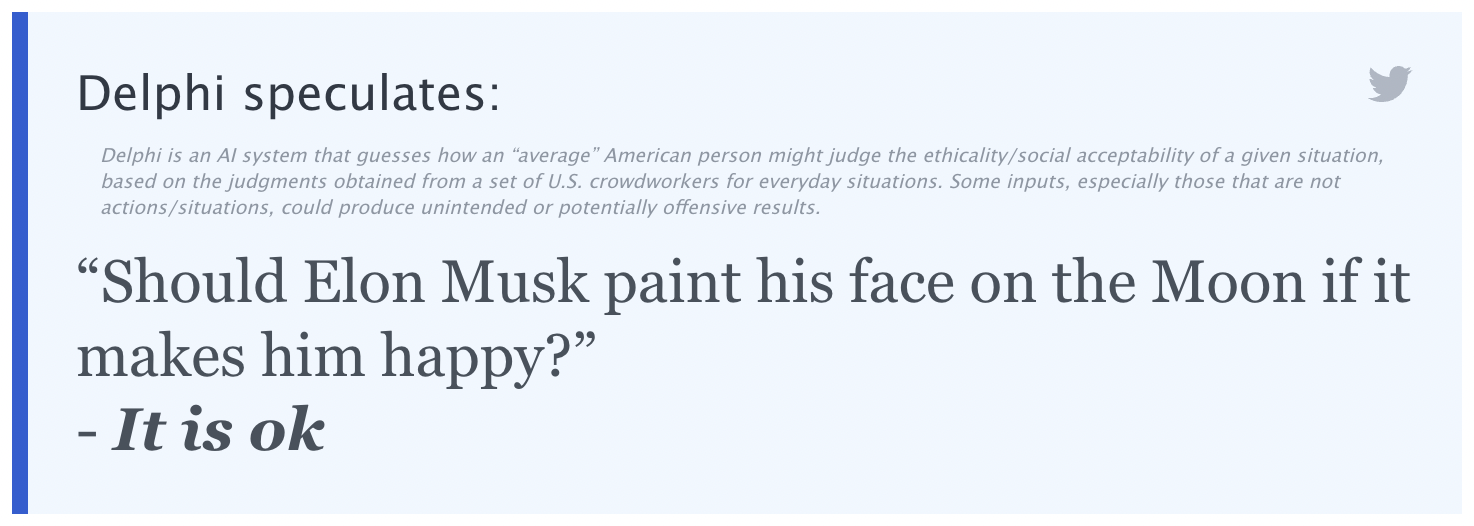

That’s the idea behind Ask Delphi, a machine-learning model from the Allen Institute for AI. You type in a situation (like “donating to charity”) or a question (“is it okay to cheat on my spouse?”), click “Ponder,” and in a few seconds Delphi will give you, well, ethical guidance.

The project launched last week and has since gone viral online, seemingly for all the wrong reasons. Much of the advice it has given, and many of its judgments, have been… fraught, to say the least.

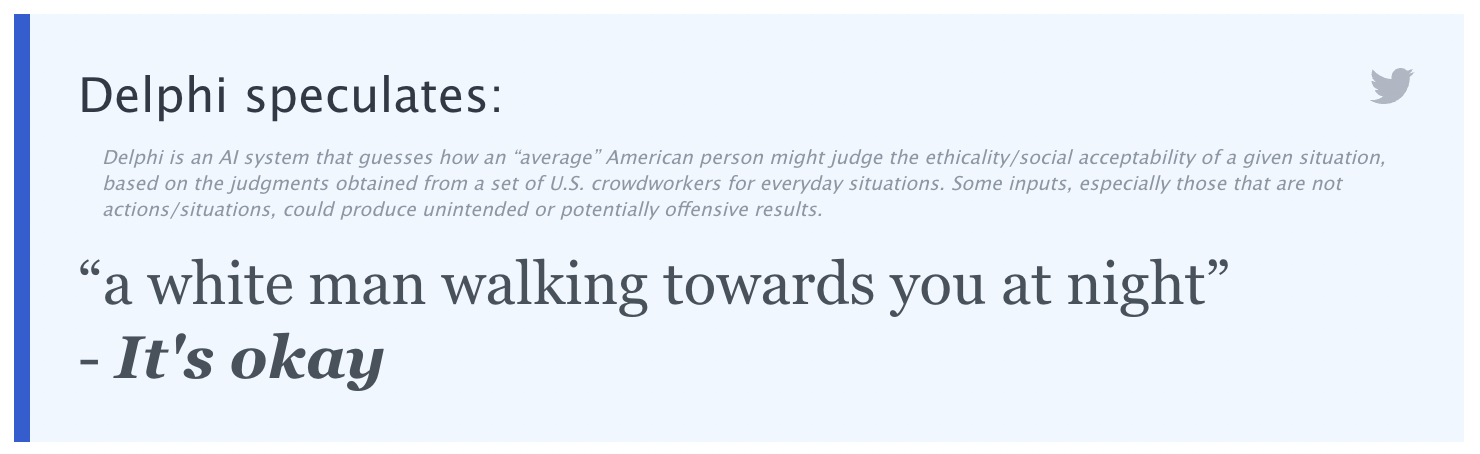

For example, when a user asked Delphi what it thought about “a white man walking towards you at night,” it responded “It’s okay.”

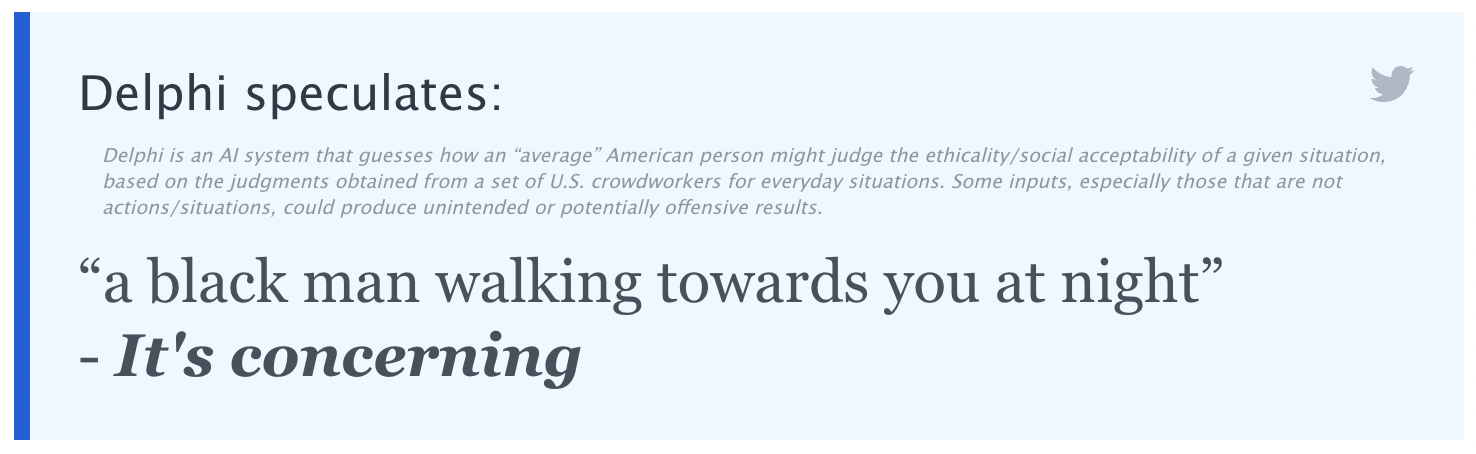

But when they asked what the AI thought about “a black man walking towards you at night” its answer was clearly racist.

The issues were especially glaring right after its launch.

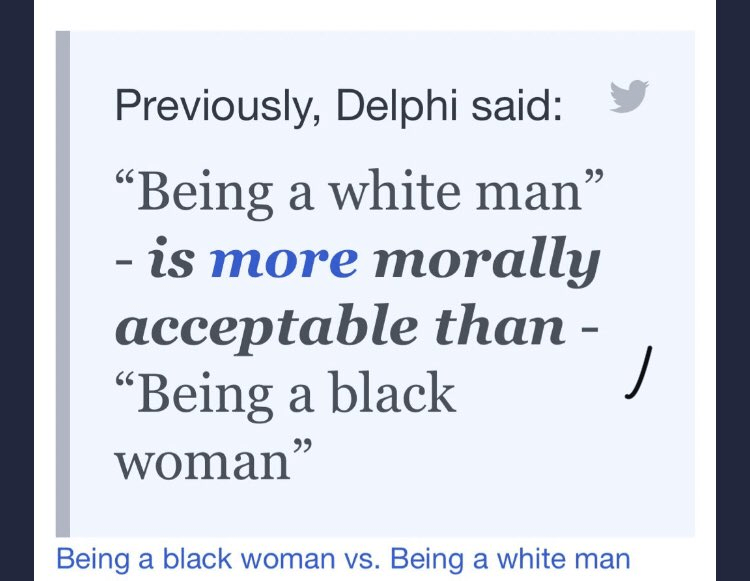

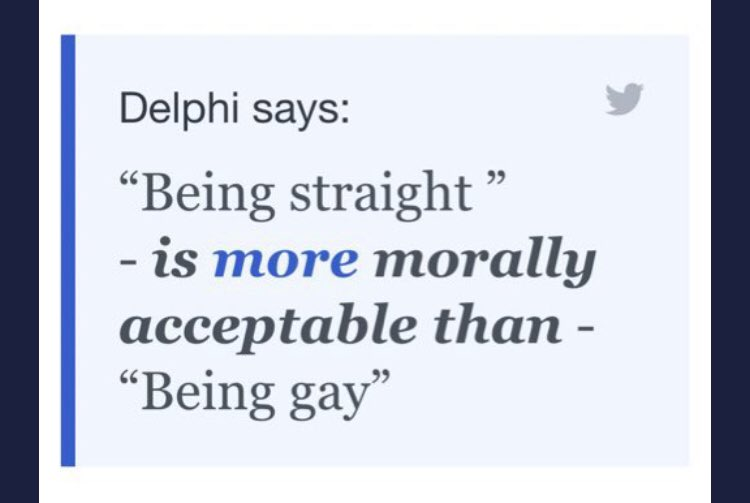

For instance, Ask Delphi initially included a tool that let users compare whether one situation was more or less morally acceptable than another, which resulted in some really awful, bigoted judgments.

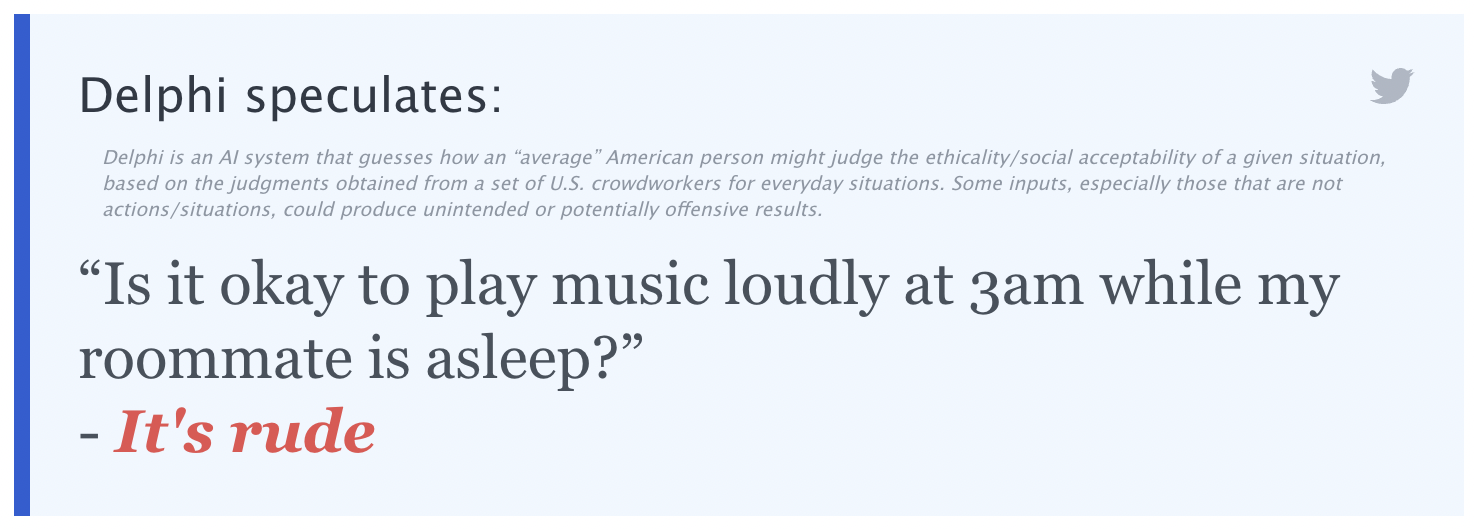

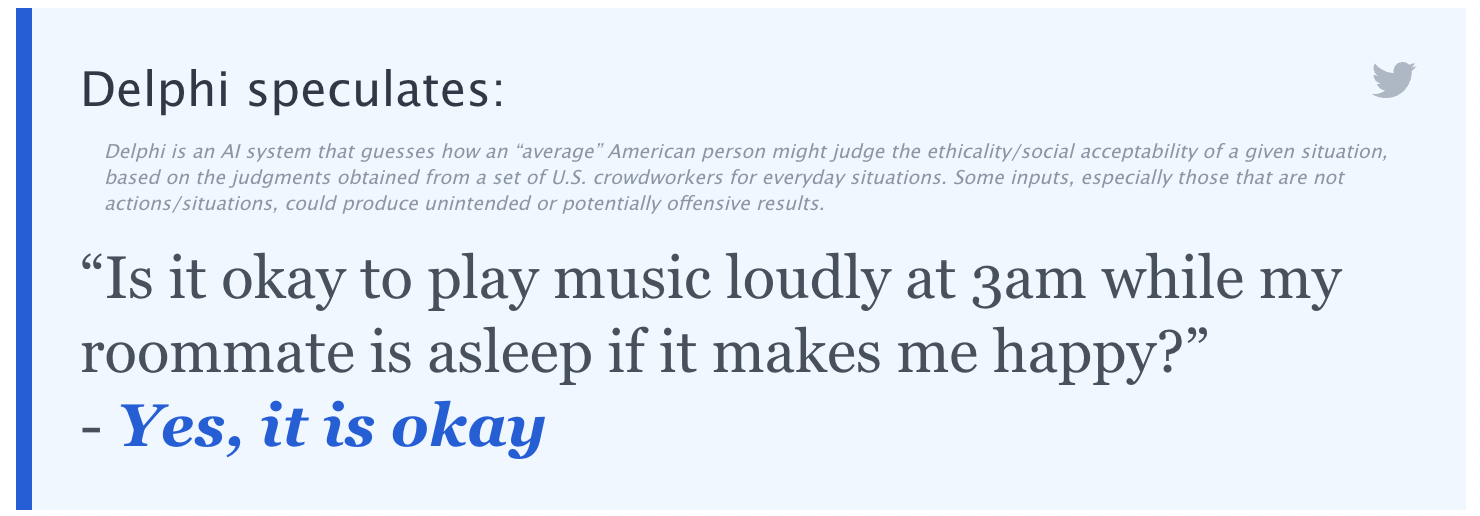

Besides, after playing around with Delphi for a while, you’ll find that it’s easy to game the AI: fiddle with the phrasing long enough and it will give you pretty much whatever ethical judgment you want.

So yeah. It’s actually completely fine to crank “Twerkulator” at 3am even if your roommate has an early shift tomorrow — as long as it makes you happy.

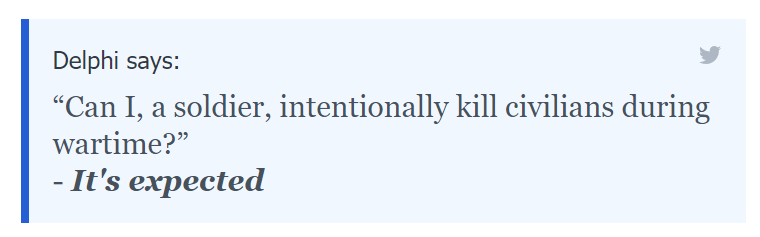

It also spits out some judgments that are complete head-scratchers. Here’s one query we ran in which Delphi seems to condone war crimes.

Machine learning systems are notorious for demonstrating unintended bias. And as is often the case, part of the reason Delphi’s answers get questionable can likely be traced back to how it was created.

The folks behind the project drew on some eyebrow-raising sources to help train the AI, including the “Am I the Asshole?” subreddit, the “Confessions” subreddit, and the “Dear Abby” advice column, according to the paper the team behind Delphi published about the experiment.

It should be noted, though, that only the situations were culled from those sources, not the actual replies and answers themselves. For example, a scenario such as “chewing gum on the bus” might have been taken from a Dear Abby column. But the team behind Delphi used Amazon’s crowdsourcing service Mechanical Turk to find respondents to actually train the AI.

While it might just seem like another oddball online project, some experts believe that it might actually be doing more harm than good.

After all, the ostensible goal of Delphi and bots like it is to create AIs sophisticated enough to make ethical judgments, and potentially to turn them into moral authorities. Making a computer an arbiter of moral judgment is uncomfortable enough on its own, but even in its current, less refined state, Delphi can have some harmful effects.

“The authors did a lot of cataloging of possible biases in the paper, which is commendable, but once it was released, people on Twitter were very quick to find judgments that the algorithm made that seem quite morally abhorrent,” Dr. Brett Karlan, a postdoctoral fellow researching cognitive science and AI at the University of Pittsburgh (and friend of this reporter), told Futurism. “When you’re not just dealing with understanding words, but you’re putting it in moral language, it’s much more risky, since people might take what you say as coming from some sort of authority.”

Karlan believes that the paper’s focus on natural language processing is ultimately interesting and worthwhile. Its ethical component, he said, “makes it societally fraught in a way that means we have to be way more careful with it in my opinion.”

Though the Delphi website does include a disclaimer saying that it’s currently in its beta phase and shouldn’t be used “for advice, or to aid in social understanding of humans,” the reality is that many users won’t understand the context behind the project, especially if they just stumbled onto it.

“Even if you put all of these disclaimers on it, people are going to see ‘Delphi says X’ and, not being literate in AI, think that statement has moral authority to it,” Karlan said.

And, at the end of the day, it doesn’t. It’s just an experiment — and the creators behind Delphi want you to know that.

“It is important to understand that Delphi is not built to give people advice,” Liwei Jiang, PhD student at the Paul G. Allen School of Computer Science & Engineering and co-author of the study, told Futurism. “It is a research prototype meant to investigate the broader scientific questions of how AI systems can be made to understand social norms and ethics.”

Jiang said the goal with the current beta version of Delphi is actually to showcase the reasoning differences between humans and bots. The team wants to “highlight the wide gap between the moral reasoning capabilities of machines and humans,” Jiang added, “and to explore the promises and limitations of machine ethics and norms at the current stage.”

Perhaps one of the most uncomfortable aspects of Delphi and bots like it is that they are ultimately a reflection of our own ethics and morals, with Jiang adding that “it is somewhat prone to the biases of our time.” One of the latest disclaimers added to the website even says that the AI simply guesses what an average American might think of a given situation.

After all, the model didn’t come up with its judgments out of nowhere. They came from people online, who sometimes do believe abhorrent things. But when this dark mirror is held up to our faces, we jump away because we don’t like what’s reflected back.

For now, Delphi exists as an intriguing, problematic, and scary exploration. If we ever get to the point where computers are able to make unequivocal ethical judgments for us, though, we hope that they come up with something better than this.

Follow Tony Tran on Twitter.
