Breaking Rules

Robots bring real benefits to society, performing dangerous industrial tasks and going to places humans can’t or won’t go, yet there are still credible fears about them. The military is increasing its reliance on drones and automated robots. Many people feel that their jobs are threatened by increasing automation. Now there is something else to worry about: robots that can harm humans on their own.

A roboticist and artist based in Berkeley, California, has created a robot that breaks Asimov’s First Law of Robotics: “A robot may not injure a human being or, through inaction, allow a human being to come to harm.”

Let’s qualify that: robots harm humans all the time. Industrial accidents involving robots and humans are common, and drone strikes certainly kill many. But this one is different: it hurts humans deliberately, and even its creator, Alexander Reben, cannot predict whether it will harm any given person.

“The decision to hurt a person,” Reben said to The Washington Post, “happens in a way that I can’t predict.” The software does not use machine learning or artificial intelligence to decide, but neither is it a 50:50 chance. “I don’t know the probability,” Reben adds.

Ethical Dilemmas

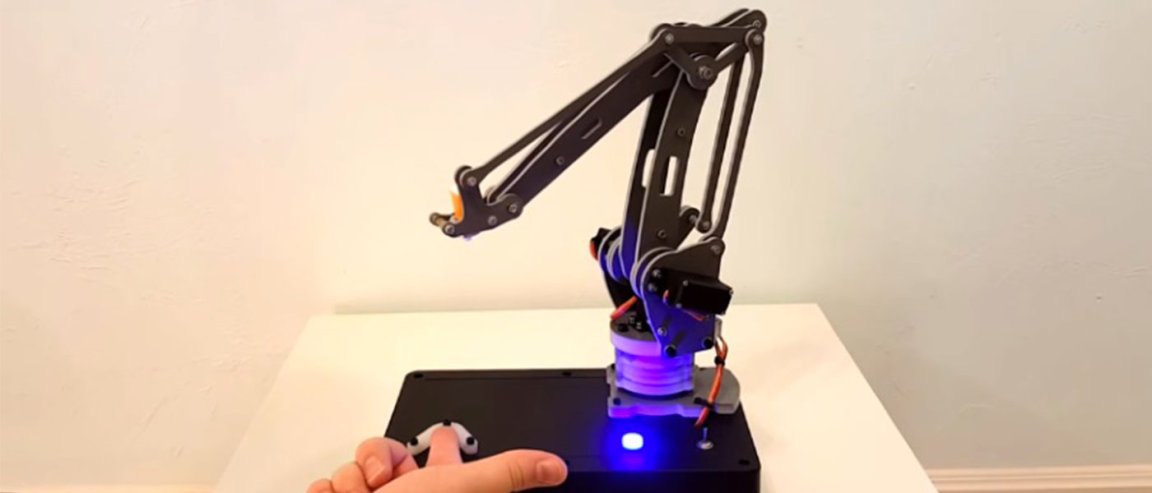

Yes, the robot harms humans, but it was designed to inflict minimal harm. Reben’s creation is a robot arm. A sensor detects when someone places a finger beneath the arm. If the robot decides to strike, it does so with a small needle attached to the arm. The robot cost about $200 (£141) to make and took a few days to put together.

The main goal of the robot is to make people confront the possibility of robots that can harm humans, the question of who is responsible for the harm caused, and how we view robots in general.

“I wanted to make a robot that does this that actually exists… That was important to, to take it out of the thought experiment realm into reality, because once something exists in the world, you have to confront it. It becomes more urgent. You can’t just pontificate about it,” Reben told Fast Company.