Ninebot

At CES 2016, Intel unveiled the Ninebot Segway, a self-balancing scooter that can transform into a self-propelled personal helper equipped with voice recognition, live-streaming camera capabilities, object detection, and even facial recognition.

The device works like other self-balancing Segways. It relies on gyroscopic stabilization, riders steer it with their legs, and it can reach a top speed of 18 km/h (11 mph) with a range of up to 30 kilometers (18 miles).

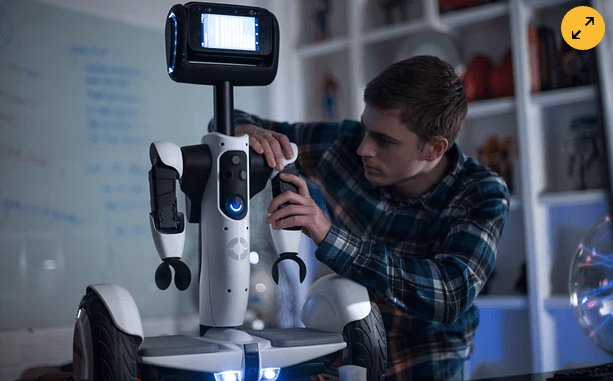

Once the rider steps off, however, the Segway transforms into a robot helper that can communicate, navigate on its own, and even work its way around obstacles. When it needs to be ridden, most of the robot parts fold away, so it ends up looking much like a (now entirely headless) Ninebot Mini Segway.

At the convention, the Ninebot was shown successfully navigating the on-stage living room, communicating with its human operators, and even following the device's inventor off stage, all made possible by Intel's RealSense technology. Intel and Segway had also recently announced a collaboration.

Robot Companion

While a release date has yet to be announced for the Ninebot, the company behind it is clearly making progress toward a consumer-friendly robot companion.

The product and the technology driving it are part of an ongoing, collaborative open robotics development platform built on Intel's Atom processors and RealSense cameras. As a result, when the device is officially released, ideally in the second half of the year, it will come bundled with a developer kit.

Also, its face is an emoji.