Many times, when you see images of nebulae, planets, and galaxies – if you are like me – you find yourself imagining the splendor of actually seeing that object in real life, maybe even having a moment of doubt when you ask yourself, “Is this even real?” Unfortunately, I have some tough news for you. Some will take it harder than others, but eventually, we’ll all get over it.

Often, the coloring in the images released by NASA or the ESA doesn’t reflect what you would see if you were able to observe these regions with your own two eyes. Instead, the images are generally shown in something called “false color.”

True Color Images:

A true-color image, on the other hand, captures the region exactly as it is. Even though we definitely have the technological capability to take true-color pictures, scientists tend to take issue with the terminology since color isn’t a static thing. Take Mars, as an example. Some of the pictures the rovers take are in “true color”, but the perceived color changes depending on atmospheric conditions. Jim Bell, a scientist in charge of the rover’s Pancam color imaging system, says, “We actually try to avoid the term ‘true color’ because nobody really knows precisely what the ‘truth’ is on Mars. Colors change from moment to moment. It’s a dynamic thing. We try not to draw the line that hard by saying ‘this is the truth!’”

[Reference: UniverseToday]

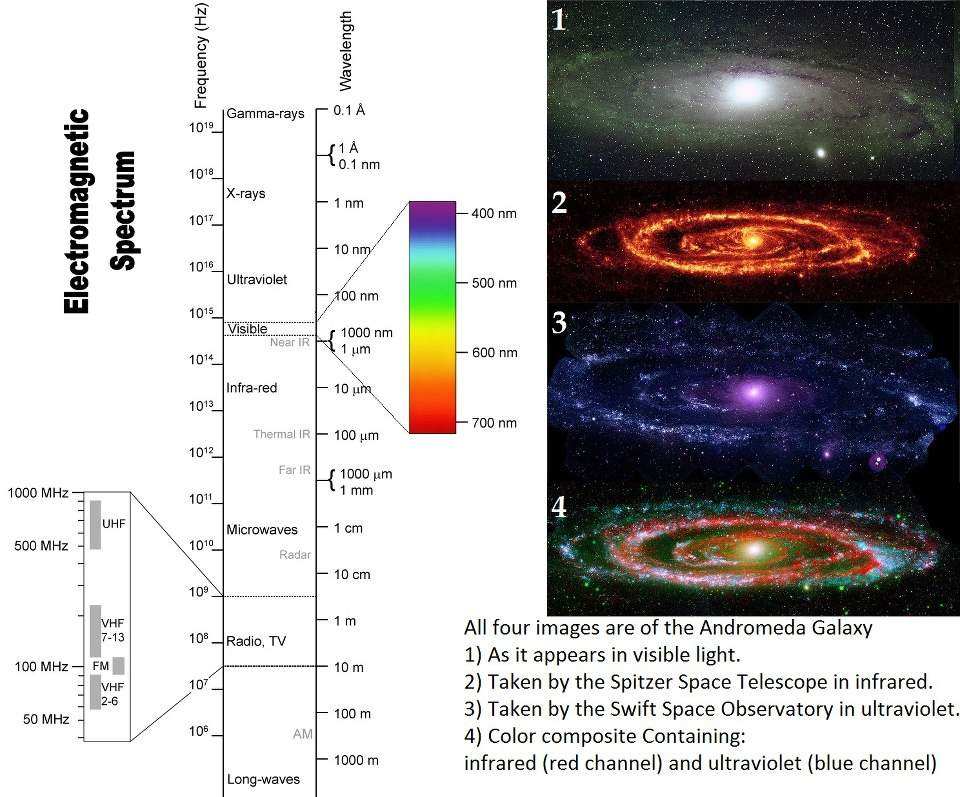

When we gather data from portions of the electromagnetic spectrum outside visible light (such as x-ray, ultraviolet, or infrared), the image, by nature, has to be falsely colored. Otherwise, we couldn’t see it. Even in the part of the spectrum we can see, celestial objects are often so faint that they appear very dark to us. Again, this is not very useful for scientists.

In general, a true-color image isn’t helpful for a multitude of reasons. In order to learn as much as we can from any given image, scientists want to cram as much information into each pixel as they possibly can, which is what gives rise to the use of false color.

False Color:

In astrophotography, there are two basic kinds of false-color images you can take:

- Images taken of parts of the electromagnetic spectrum invisible to the human eye. These frequencies are then mapped to the red, green, or blue color channels (as seen in the pictures of Andromeda shown above).

- Images taken of very specific frequencies of visible light emitted by specific elements. Again, these frequencies are mapped to the red, green, or blue color channels. This is also called narrowband imagery.
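The channel mapping described in the two cases above can be sketched in a few lines of Python. This is only an illustration, not the pipeline any observatory actually uses: the function names `normalize` and `false_color` are hypothetical, the 2x2 "exposures" are made-up numbers, and assigning the longest wavelength to red is just one common convention.

```python
def normalize(band):
    """Rescale a 2-D grid of intensities to the 0..1 range."""
    flat = [v for row in band for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a flat image
    return [[(v - lo) / span for v in row] for row in band]


def false_color(red_band, green_band, blue_band):
    """Combine three single-band images into rows of 8-bit (R, G, B) pixels."""
    channels = [normalize(b) for b in (red_band, green_band, blue_band)]
    height, width = len(red_band), len(red_band[0])
    return [
        [tuple(round(255 * ch[y][x]) for ch in channels) for x in range(width)]
        for y in range(height)
    ]


# Hypothetical 2x2 exposures through three filters (arbitrary units).
infrared = [[0.0, 10.0], [20.0, 40.0]]
ultraviolet = [[5.0, 5.0], [15.0, 25.0]]
xray = [[1.0, 0.0], [0.0, 3.0]]

# Assumed mapping: infrared -> red, ultraviolet -> green, x-ray -> blue.
image = false_color(infrared, ultraviolet, xray)
for row in image:
    print(row)
```

Each band is rescaled independently, so a pixel that is brightest in all three bands comes out white, while a pixel that only shows up in the x-ray exposure comes out blue. The same sketch applies to narrowband imagery; only the filters feeding the three channels change.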

Both types of false-color images reveal secrets about the object we’re viewing. Using the different frequencies of light, scientists are able to peel away layers of a galaxy or nebula to reveal what lurks behind the dust. Scientists are able to see interesting objects and areas — like star-forming regions and black holes — using this method. They can also learn about the movements of these objects by measuring the redshift/blueshift.
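To make the redshift/blueshift measurement a little more concrete, here is a minimal sketch of the arithmetic. It assumes the standard definition of redshift, z = (observed wavelength − rest wavelength) / rest wavelength, and the low-speed approximation v ≈ c·z. The function names are hypothetical, and the observed wavelength is an invented value rather than a real measurement.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458


def redshift(observed_nm, rest_nm):
    """Return z; positive means redshift (receding), negative means blueshift."""
    return (observed_nm - rest_nm) / rest_nm


def radial_velocity_km_s(z):
    """Low-speed approximation v = c * z, reasonable only when |z| is small."""
    return SPEED_OF_LIGHT_KM_S * z


# Hydrogen-alpha rest wavelength (in air); the observed value is invented.
H_ALPHA_REST_NM = 656.28
observed_nm = 658.00

z = redshift(observed_nm, H_ALPHA_REST_NM)
velocity = radial_velocity_km_s(z)
print(f"z = {z:.5f}, velocity = {velocity:.0f} km/s")
```

A line observed at a longer wavelength than its rest value gives a positive z (the source is moving away from us), and a shorter observed wavelength gives a negative z (it is approaching).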

Through narrowband imaging, scientists are able to see what objects are made of by analyzing the light emitted by different elements. They can measure the chemical composition of nebulae, stars, planets, galaxies, etc., providing details about what the universe is made of.

That said, images are often taken in natural color. Mapping visible frequencies to the red, green, and blue channels gives a close approximation of what the object actually looks like. Even then, if you were sitting in a spaceship looking at the object yourself, it would probably look a little different. As I mentioned before, color isn’t static and depends on the environmental conditions, as well as the eye of the beholder.

What does this all mean? Simply put, if you were close enough to see the objects we image, they would look magnificent in a different, and sometimes misleading, way. Hubble, Chandra, Spitzer, and all the other observatories we have still offer us an unprecedented view into the cosmos, revealing the invisible secrets of the universe.

Just because the image is in false color doesn’t mean it isn’t splendid or real.

Further Reading:

- “Behind the Pictures – Color as a Tool” HubbleSite. A fantastic article on the Hubble coloring process.

- “Phony Colors? Or Just Misunderstood?” Robert Hurt, NASA. 2 August 2010.

- “True or False (Color): The Art of Extraterrestrial Photography” Nancy Atkinson, Universe Today. 1 October 2007.

- “False-colour astrophotography explained” Jonathan Crowe. 25 April 2010.