The next time you sit down to watch a movie, the algorithm behind your streaming service might recommend a blockbuster that was written by AI, performed by robots, and animated and rendered by a deep learning algorithm. An AI algorithm may have even read the script and suggested the studio buy the rights.

It’s easy to think that algorithms and robots will send film workers the way of the factory worker and the customer service rep, and to argue that artistic filmmaking is in its death throes. But that narrative doesn’t apply to the film industry: artificial intelligence seems to have enhanced Hollywood’s creativity, not squelched it.

It’s true that some jobs and tasks are being rendered obsolete now that computers can do them better. The job requirements for a visual effects artist are no longer owning a beret and being good at painting backdrops; the industry now calls for engineers who are good at training deep learning algorithms to do the mundane work that used to be done by hand, like smoothing out an effect or making a digital character look realistic. That shift lets the creative artists who still work in the industry spend less time hunched over a computer meticulously editing frame by frame and more time on interesting work, explains Darren Hendler, who heads Digital Domain’s Digital Humans Group.

Just as computers freed animators from drawing every frame by hand, advanced algorithms can now render sophisticated visual effects automatically. In both cases, the animators didn’t lose their jobs.

“We find that a lot of the more manual, mundane jobs become easy targets [for AI automation] where we can have a system that can do that much, much quicker, freeing up those people to do more creative tasks,” Hendler tells Futurism.

“I think you see a lot of things where it becomes easier and easier for actors to play their alter egos, creatures, characters,” he adds. “You’re gonna see more where the actors are doing their performance in front of other actors, where they’re going to be mapped onto the characters later.”

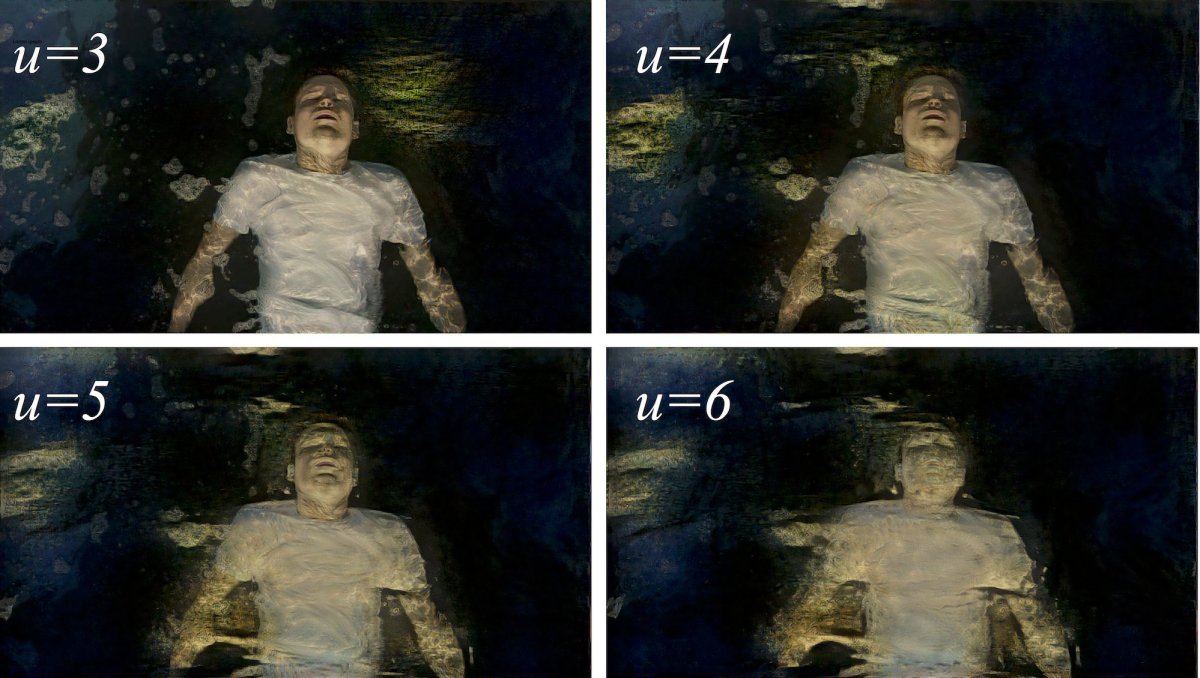

Recently, Hendler and his team used artificial intelligence and other sophisticated software to turn Josh Brolin into Thanos for Avengers: Infinity War. More specifically, they used an AI algorithm trained on high-resolution scans of Brolin’s face to track his expressions down to individual wrinkles, then used another algorithm to automatically map the resulting face renders onto Thanos’ body before animators went in to make some finishing touches.

Such a process yields the best of both motion capture worlds: the high resolution usually obtained by unwieldy camera setups and the more nuanced performance made possible by letting an actor perform surrounded by their costars instead of alone in front of a green screen. And even though face mapping and swapping would normally take weeks, Digital Domain’s machine learning algorithm could do it in near real time, which let the team set up a sort of digital mirror for Brolin.

“When Josh Brolin came for the first day to set, he could already see what his character, his performance would look like,” says Hendler.

Yes, these algorithms can quickly accomplish tasks that used to require dedicated teams of people. But when used effectively they can help bring out the best performances, the smoothest edits, and the most advanced visual effects possible today. And even though the most advanced (read: expensive) algorithms may be limited to major Disney blockbusters right now, Hendler suspects that they’ll someday become the norm.

“I think it’s going to be pretty widespread,” he says. “I think the tricky part is it’s such a different mindset and approach that people are just figuring out how to get it to work.” Still, as people become more familiar with training machines to do their animation for them, Hendler thinks that it’s only logical that more and more applications for artificial intelligence in filmmaking are about to emerge.

“[Machine learning] hasn’t been widely adopted yet because filmmakers don’t fully understand it,” Hendler says. “But we’re starting to see elements of deep learning and machine learning incorporated into very specific areas. It’s something so new and so different from anything we’ve done in the past.”

But many of these applications have already emerged. Last January, Kristen Stewart (yes, that one) directed a short film and partnered with an Adobe engineer to develop a new kind of neural network that edited the footage to look like an impressionist painting Stewart had made.

Meanwhile, Disney built robot acrobats that can be flung high into the air so that they can later be edited (perhaps by AI) to look like the performers and their stunt doubles. Now those actors get to rest easy and focus on the less dangerous parts of their job.

Artificial intelligence may soon move beyond even the performance and editing sides of the filmmaking process — it may soon weigh in on whether or not a film is made in the first place. A Belgian AI company called Scriptbook developed an algorithm that the company claims can predict whether or not a film will be commercially successful just by analyzing the screenplay, according to Variety.

Normally, script coverage is handled by a production house or agency’s hierarchy of executive assistants and interns, the latter of whom don’t need to be paid under California law. To justify the $5,000 expenditure over sometimes-free human labor, Scriptbook claims that its algorithm is three times better at predicting box office success than human readers. The company also asserts that it would have recommended that Sony Pictures not make 22 of its biggest box office flops over the past three years, which would have saved the production company millions of dollars.

Scriptbook has yet to respond to Futurism’s multiple requests for information about its technology and the limitations of its algorithm (we will update this article if and when the company replies). But Variety mentioned that the AI system can predict an MPAA rating (R, PG-13, that sort of thing), detect who the characters are and what emotions they express, and also predict a screenplay’s target audience. It can also determine whether or not a film will pass the Bechdel Test, a bare minimum baseline for representation of women in media. The algorithm can also determine whether or not the film will include a diverse cast of characters, though it’s worth noting that many scripts don’t specify a character’s race and whitewashing can occur later on in the process.

Human script readers can do all this too, of course. And human-written coverage often includes a comprehensive summary and recommendations as to whether or not a particular production house should pursue a particular screenplay based on its branding and audience. Given how much artificial intelligence struggles with emotional communication, it’s unlikely that Scriptbook can provide the same level of comprehensive analysis that a person could. But, to be fair, Scriptbook suggested to Variety that their system could be used to aid human readers, not replace them.

Getting some help from an algorithm could help people ground their coverage in some cold, hard data before they recommend one script over another. While it may seem like this takes a lot of the creative decision-making out of human hands, tools like Scriptbook can help studio houses make better financial choices — and it would be naïve to assume there was a time when they weren’t primarily motivated by the bottom line.

Hollywood’s automated future isn’t one that takes humans out of the frame (that is, unless powerful humans decide to do so). Rather, Hendler foresees a future where creative people get to keep on being creative as they work alongside the time-saving machines that will make their jobs simpler and less mundane.

“We’re still, even now, figuring out how we can apply [machine learning] to all the different problems,” Hendler says. “We’re gonna see some very, very drastic speed improvements in the next two, three years in a lot of the things that we do on the visual effects side, and hopefully quality improvements too.”
