A New Network

Despite all the hype, arguably deserved, around artificial intelligence (AI) and artificial neural networks, current systems need huge quantities of data to learn, and experts are increasingly concerned that future systems will, too.

Now, the Google researcher responsible for much of the hype over neural networks has developed a new type of AI he believes will address this limitation: capsule networks.

Geoff Hinton outlined his capsule networks in a pair of open-access research papers published on arXiv and OpenReview.net. He claims the papers prove ideas he’s been mulling over for nearly 40 years. “It’s made a lot of intuitive sense to me for a very long time, it just hasn’t worked well,” Hinton said in an interview with Wired. “We’ve finally got something that works well.”

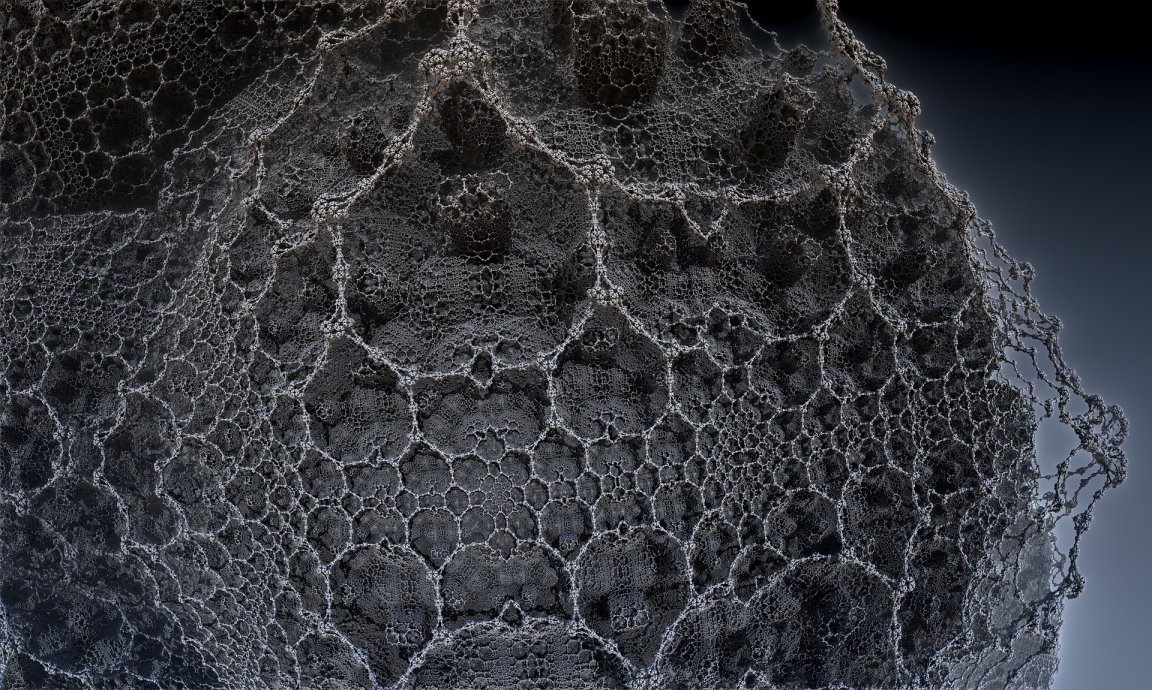

Each “capsule” in Hinton’s network comprises a small group of artificial neurons that cooperate to identify things. These capsules are organized into layers, and each layer of capsules is designed to identify specific features in an image. When multiple capsules within a layer agree on what they’ve identified, they activate the next layer, and then the next. The layers cascade onward until the network is sure about what it is identifying.
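The idea of capsules “agreeing” corresponds, roughly, to a procedure the arXiv paper calls dynamic routing, or routing by agreement. The sketch below is a minimal pure-Python illustration of that routing step, not Hinton’s code. It assumes each lower-level capsule has already produced a prediction vector for every higher-level capsule; in a real network those predictions come from learned weight matrices, which are omitted here. The function names (`squash`, `route`, `length`) and the default of three iterations are illustrative choices.

```python
import math

Vector = list[float]


def length(v: Vector) -> float:
    """Euclidean length of a capsule's output vector."""
    return math.sqrt(sum(x * x for x in v))


def squash(s: Vector) -> Vector:
    """Shrink a vector's length into [0, 1) while keeping its direction.

    The length of a capsule's output is read as the probability that the
    feature it represents is present.
    """
    norm_sq = sum(x * x for x in s)
    if norm_sq == 0.0:
        return [0.0 for _ in s]
    scale = norm_sq / (1.0 + norm_sq) / math.sqrt(norm_sq)
    return [scale * x for x in s]


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax over one lower capsule's routing logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route(predictions: list[list[Vector]], iterations: int = 3) -> list[Vector]:
    """Routing by agreement (illustrative sketch).

    predictions[i][j] is lower capsule i's predicted output for upper capsule j.
    Lower capsules whose predictions agree with an upper capsule's output
    send more of their vote to that capsule on the next iteration.
    """
    n_lower = len(predictions)
    n_upper = len(predictions[0])
    dim = len(predictions[0][0])
    logits = [[0.0] * n_upper for _ in range(n_lower)]  # start with uniform routing
    outputs: list[Vector] = []
    for _ in range(iterations):
        # Each lower capsule splits its vote across upper capsules.
        couplings = [softmax(row) for row in logits]
        outputs = []
        for j in range(n_upper):
            # Weighted sum of the predictions sent to upper capsule j.
            s = [0.0] * dim
            for i in range(n_lower):
                for k in range(dim):
                    s[k] += couplings[i][j] * predictions[i][j][k]
            outputs.append(squash(s))
        # Increase routing weight where prediction and output point the same way.
        for i in range(n_lower):
            for j in range(n_upper):
                logits[i][j] += sum(p * o for p, o in zip(predictions[i][j], outputs[j]))
    return outputs
```

As a usage example, suppose four lower capsules all predict `[1.0, 0.0]` for upper capsule 0 but point in four different directions for upper capsule 1. Calling `route` on those predictions gives capsule 0 a long output vector (length above 0.8), because its inputs agree, while capsule 1’s conflicting inputs cancel and its output length stays near zero. That is the sense in which agreement, rather than any single detector, decides what activates the next layer.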

Presently, a computer must look at thousands of photos of an object from many different perspectives in order to recognize that object from different angles. Hinton told Wired he believes the redundancies in the layers will allow capsule networks to identify objects from multiple angles and in different scenarios.

So far, he seems to be right. In a test identifying handwritten digits, capsule networks matched the accuracy of the best old-school neural networks, and in a test identifying toys from multiple angles, they halved the error rate of previous approaches.

Improved Vision

In 2012, Hinton and two graduate students at the University of Toronto proved that artificial neural networks could advance a computer’s ability to understand images by leaps and bounds. Their research lit imaginations afire in the tech world, and soon, all three were working for Google.

Half a decade later, neural networks are built into everything from self-guided robots to Tesla’s electric vehicles and are used for applications as varied as translating languages in Google searches and improving our understanding of the quantum world. According to some, they’re even “approaching human levels of consciousness.”

Still, Hinton isn’t convinced the technology is anywhere near as good as it could be. “I think the way we’re doing computer vision is just wrong,” he told Wired. “It works better than anything else at present, but that doesn’t mean it’s right.”

Hinton acknowledges to Wired that his capsule networks run more slowly than existing image-recognition software and have yet to be tested on large collections of images, but he is optimistic that, once he addresses these shortcomings, his new system will improve on traditional neural networks. Considering all we’ve been able to accomplish with the “wrong” kind of computer vision, just imagine what we’ll be able to do with the right one.