Fake Spotting

Researchers have developed an algorithm that identifies deepfake portraits by looking at their eyes.

Computer scientists at the University at Buffalo have created a deepfake-spotting algorithm that analyzes the reflections in the eyes of portraits to determine their authenticity, according to a press release.

In a study accepted to the IEEE International Conference on Acoustics, Speech and Signal Processing, the researchers claim that the algorithm is 94 percent effective at identifying the fake images. That makes it an intriguing new approach to verifying the authenticity of images and video.

Windows To The Soul

The reason the algorithm is able to detect the false imagery is surprisingly simple: deepfake AIs suck at creating accurate eye reflections.

“The cornea is almost like a perfect semisphere and is very reflective,” said Dr. Siwei Lyu, SUNY Empire Innovation professor and lead author of the study, in the press release. “So, anything that is coming to the eye with a light emitting from those sources will have an image on the cornea.”

“The two eyes should have very similar reflective patterns because they’re seeing the same thing,” he added. “It’s something that we typically don’t notice when we look at a face.”

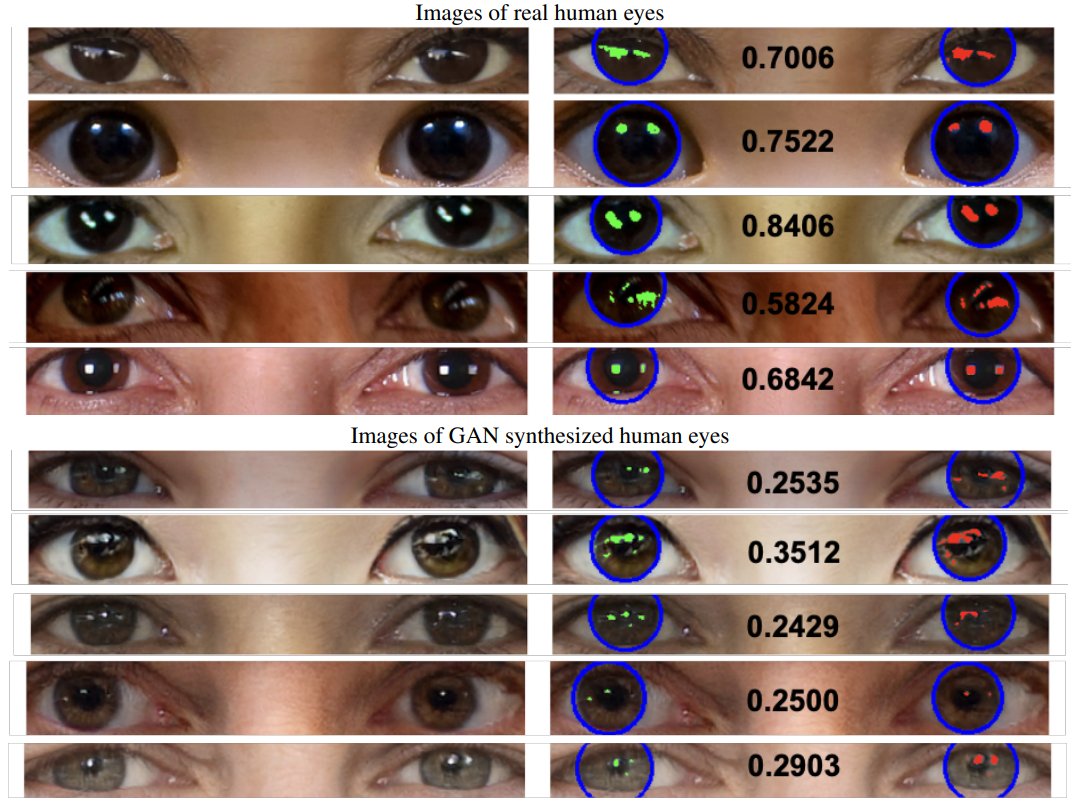

However, deepfake AI is surprisingly inept at creating consistent reflections in both eyes. Heck, you can probably do a good job spotting the difference yourself using the images below of real human eyes and AI-generated eyes:
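For the technically curious, the core idea can be sketched in a few lines of Python. This is a minimal illustration, not the Buffalo team's code. It assumes the two eyes have already been located, cropped and aligned to the same size. It treats near-white pixels as the corneal highlights and scores how well the two highlight patterns overlap using intersection-over-union (IoU). The function names, the 0.9 brightness cutoff and the 0.5 decision threshold are all hypothetical.

```python
import numpy as np


def extract_highlights(eye_crop: np.ndarray, brightness: float = 0.9) -> np.ndarray:
    """Return a boolean mask of near-white pixels.

    Hypothetical, crude stand-in for real corneal highlight segmentation.
    """
    return eye_crop >= brightness


def highlight_iou(left_mask: np.ndarray, right_mask: np.ndarray) -> float:
    """Intersection-over-union of two same-shape boolean highlight masks."""
    if left_mask.shape != right_mask.shape:
        raise ValueError("eye crops must be aligned to the same shape")
    union = np.logical_or(left_mask, right_mask).sum()
    if union == 0:
        # Neither eye shows a highlight, so there is nothing to compare.
        return 0.0
    intersection = np.logical_and(left_mask, right_mask).sum()
    return float(intersection / union)


def reflections_consistent(left_crop: np.ndarray, right_crop: np.ndarray,
                           threshold: float = 0.5) -> bool:
    """Hypothetical decision rule: call the pair consistent if IoU >= threshold."""
    iou = highlight_iou(extract_highlights(left_crop), extract_highlights(right_crop))
    return iou >= threshold


if __name__ == "__main__":
    # Toy 8x8 grayscale "eye crops", already aligned, with values in [0, 1].
    left = np.zeros((8, 8))
    left[2:4, 2:4] = 1.0        # 4-pixel highlight
    right_real = np.zeros((8, 8))
    right_real[2:4, 2:5] = 1.0  # overlapping 6-pixel highlight
    right_fake = np.zeros((8, 8))
    right_fake[5:7, 5:7] = 1.0  # highlight in a different spot

    real_iou = highlight_iou(extract_highlights(left), extract_highlights(right_real))
    fake_iou = highlight_iou(extract_highlights(left), extract_highlights(right_fake))
    print(f"{real_iou:.3f} {fake_iou:.3f}")  # prints "0.667 0.000"
    print(reflections_consistent(left, right_real),
          reflections_consistent(left, right_fake))  # prints "True False"
```

In the toy example, the "real" pair shares most of its bright spot and scores about 0.67, while the mismatched "fake" pair scores zero. A real detector would need far more robust eye localization and highlight segmentation than a simple brightness cutoff.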

Deepfake Dangers

The algorithm is part of Lyu’s broader campaign to call attention to the growing need for tools that can spot deepfakes. His warnings and expertise on the subject even brought him before Congress in 2019, where he testified about the dangers of deepfakes and how to fight them.

“Unfortunately, a big chunk of these kinds of fake videos were created for pornographic purposes, and that [caused] a lot of… psychological damage to the victims,” he said in the press release. “There’s also the potential political impact, the fake video showing politicians saying something or doing something that they’re not supposed to do. That’s bad.”

Not to mention, deepfakes can create uncanny, fake videos of mega-famous Scientologists.

READ MORE: New Deepfake Spotting Tool Proves 94% Effective — Here’s the Secret of Its Success [SciTechDaily]
