Advanced Transport

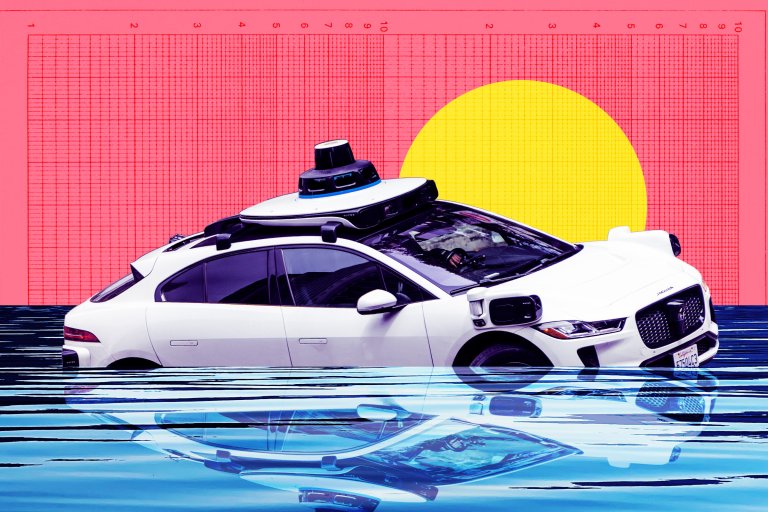

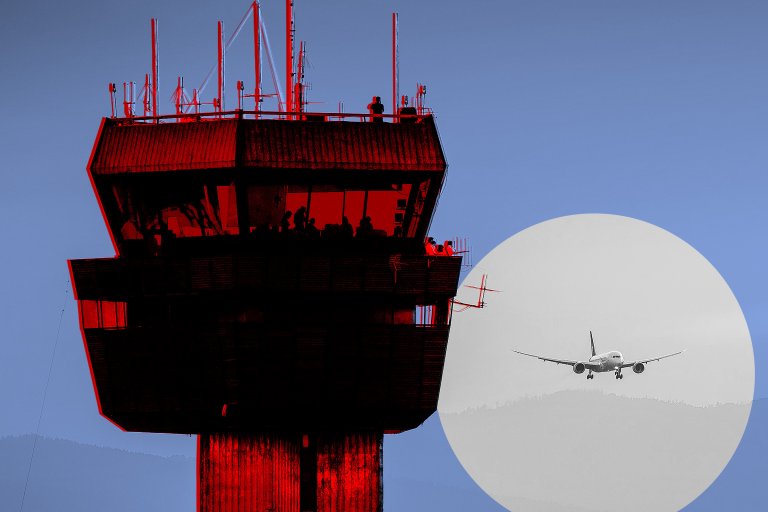

Humanity isn’t just traveling faster and farther than ever before; we’re traversing the Earth in remarkably inventive ways. We already have working jetpacks and remarkably fast high-speed trains, and in the world of tomorrow, fantastical vehicles like flying cars may be ubiquitous. We’ll be here to cover news on Tesla, Uber, and driverless cars, as well as the broader innovations that are transforming this landscape and accelerating us in ways previous generations only dreamed of.