ChatBot

When OpenAI, the AI research organization co-founded by Elon Musk, created a text-generating AI system called GPT-2, the group said it was too dangerous to release, lest it be used to create propaganda or fake news.

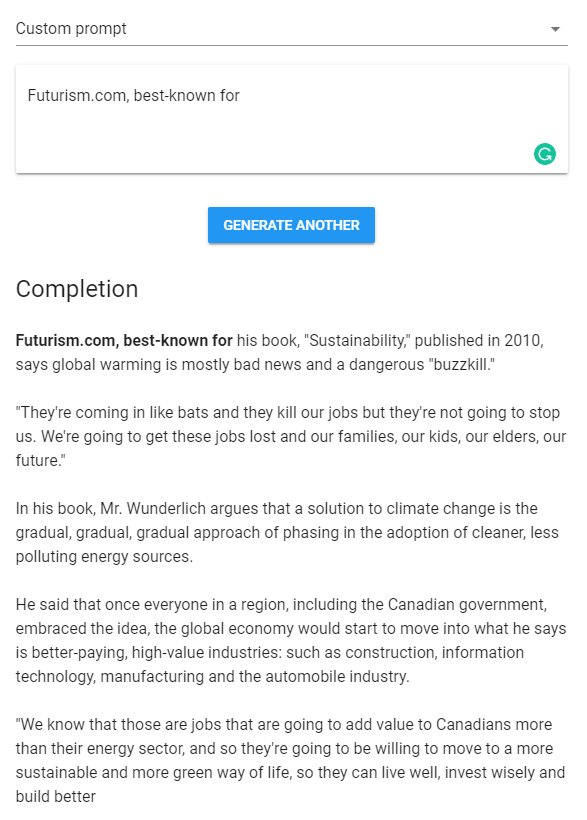

Now, thanks to a website called “TalkToTransformer.com,” you can use a watered-down version of the algorithm to write your to-do list, draft a new screenplay, or write rambling essays based on a prompt. The results aren’t perfect, but making the algorithm publicly accessible could give people a better understanding of what advanced AI is capable of and where it falters.

Short Term Memory

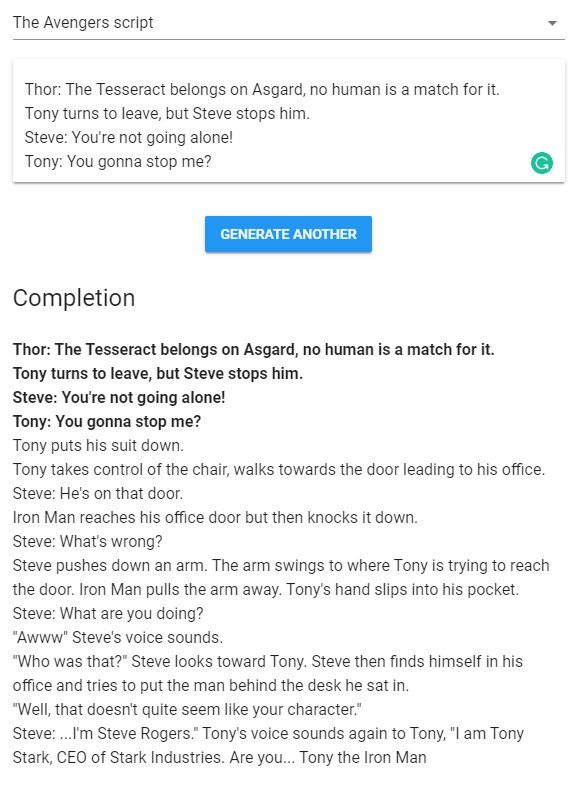

The stories that the algorithm tells are often incoherent, introducing and forgetting characters, props, and settings willy-nilly, reports The Verge after kicking the tires.

For example, when prompted with sample dialogue among characters from “The Avengers,” TalkToTransformer churned out a bizarre scene where “Tony,” “Steve,” and “Thor” fumbled over a door handle before Tony asked Steve if he’s “Tony the Iron Man.” Not exactly the most compelling addition to the Marvel Cinematic Universe.

Fake Fake News

When prompted with “Futurism.com, best-known for,” the algorithm instead wrote a blurb for a book written by a “Mr. Wunderlich.”

We had some fun with the algorithm, but the real question is whether the system could be dangerous or misleading. Based on our tests, almost every single result was clearly written by a computer that doesn’t quite grasp how language works. Fake news-writing AI may be on the horizon, but it isn’t here yet.

READ MORE: Use this cutting-edge AI text generator to write stories, poems, news articles, and more [The Verge]
