Meta

Meta, a Redwood City-based tech company, just unveiled its latest augmented reality (AR) glasses live at TED in Vancouver, and they’re pretty impressive.

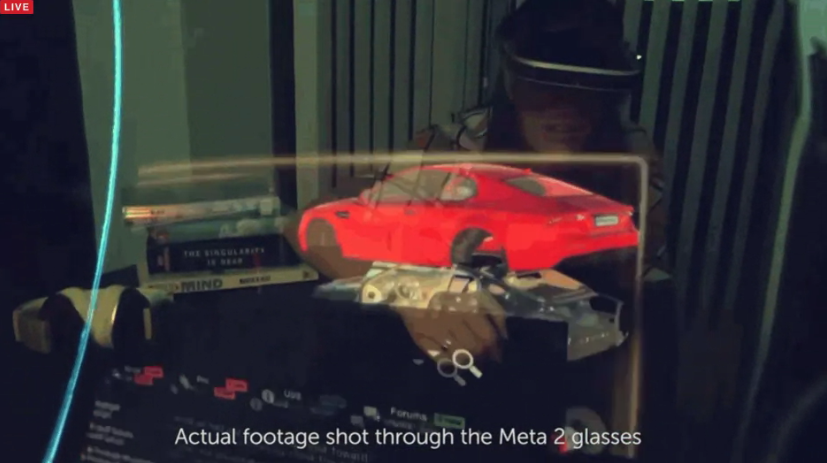

The technology is anchored on the idea that the user should be the operating system, which makes for a more interactive platform and, hopefully, a gentler learning curve. It gives users a more natural way to interact with digital information within their real, physical environment.

Admittedly, the concept is a far cry from our long-standing method of computer interaction, in which people operate the machine from behind a screen. But as far as Meron Gribetz, CEO of Meta, is concerned, “we’re all going to be throwing away our external monitors.”

Wearable AR

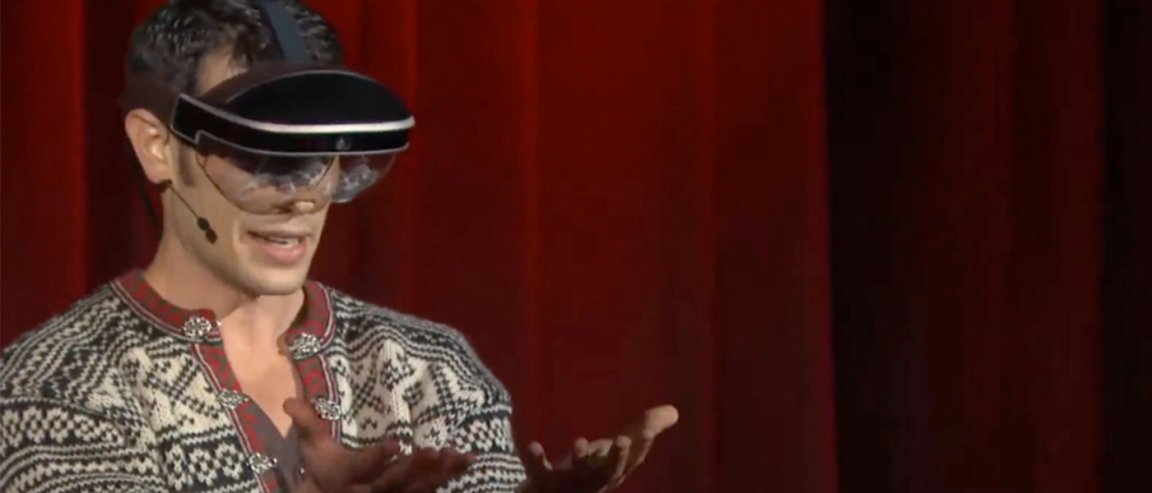

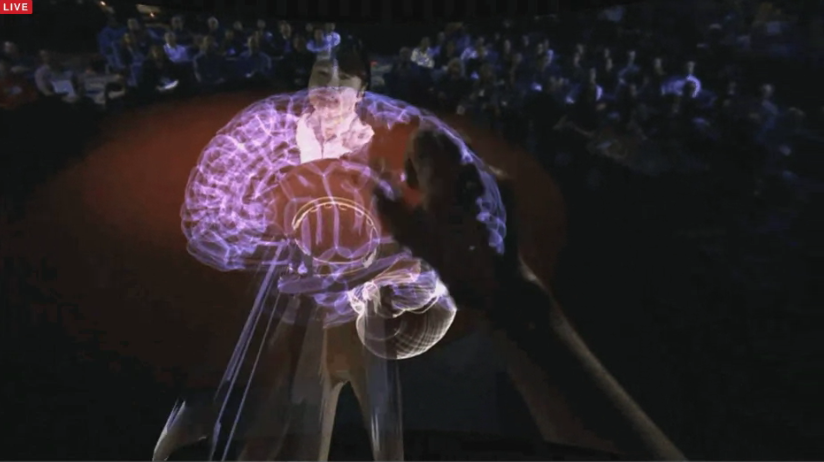

Instead of hiding behind external monitors, he’s hoping to see everyone slip into a pair of glasses that layers digital information on top of the user’s real-world environment. To demonstrate his point, the Meta CEO showed how his AR glasses work by hosting a person-to-person “call”.

The demonstration was shown from Gribetz’s perspective, as viewed through his glasses: he reached out and took a 3D hologram of a brain from the hands of a holographic projection of a person standing in front of him.

What Meta is trying to address is a challenge that has long plagued developers and experts in the tech industry: finding new and better ways for users to interact with digital data. This, however, requires significant advances over current display technology, and it could face adoption issues from a public that has grown used to the standard paradigm.

Meta’s latest technology, though, could make impressive strides towards this goal. In addition, a wearable AR device holds more promise for practical use than virtual reality (VR) devices, which are usually larger and therefore likely to be relegated to home use.