We previously told you about BlindTool, Joseph Paul Cohen’s app that tells blind users about their environment through audio and vibrations. Since its release, Cohen has been contemplating ways to improve the technology.

Recently, he launched a Kickstarter campaign to raise the money needed to develop a more user-friendly version of BlindTool.

Challenges With BlindTool V1

Although the original app was met with praise, there were some challenges with the app that Cohen hopes to overcome:

- The app does not understand many household objects and concepts (e.g. wall)

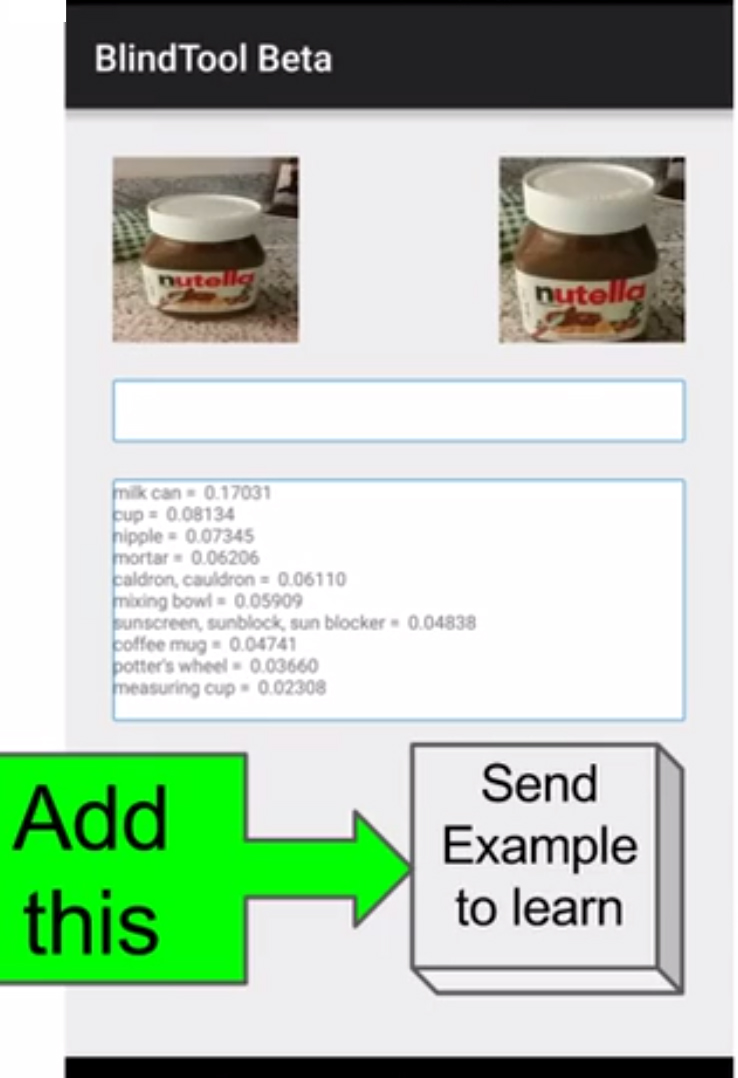

- It is confused by some objects and sometimes misidentifies them

- It doesn’t turn on the flashlight when it is dark

- It doesn’t have any options to customize it

The data set that BlindTool V1 was trained on is somewhat randomly selected. Cohen says the words that the app knows, such as “goldfish, great white shark, hen, ostrich” (to name a few), aren’t important to blind users… aside from visually impaired zookeepers, of course.

What Will BlindTool Version 2 Do?

- BlindTool V2 will still be completely free (Note: Cohen says he does not want to charge visually impaired individuals for the app for ethical reasons)

- The app will gather labels and images from users showing what they want the system to recognize, and then train the system to identify those things

- Add a way to customize the system’s interaction by filtering out some labels or adding different names for identified objects

- Test the app more with blind users to make sure it is something they want to use every day.
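The customization idea in the list above (filtering out some labels and giving others a different name) could be sketched roughly as follows. This is a hypothetical illustration, not code from BlindTool: the function name `customize_predictions`, the `(label, confidence)` prediction format, and the example labels are all assumptions.

```python
def customize_predictions(predictions, hidden_labels, custom_names):
    """Drop labels the user has hidden and rename the rest.

    predictions: list of (label, confidence) pairs (assumed format).
    hidden_labels: set of labels the user never wants spoken.
    custom_names: dict mapping a model label to the user's preferred name.
    """
    result = []
    for label, confidence in predictions:
        if label in hidden_labels:
            continue  # the user filtered this label out
        spoken = custom_names.get(label, label)  # fall back to the default name
        result.append((spoken, confidence))
    return result


# Hypothetical example data, not real model output.
predictions = [("coffee mug", 0.82), ("goldfish", 0.10), ("keyboard", 0.05)]
spoken = customize_predictions(
    predictions,
    hidden_labels={"goldfish"},
    custom_names={"coffee mug": "Cup o Joe"},
)
print(spoken)
```

Keeping the filter and rename steps outside the recognition model means a user could change either setting without the model being retrained.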

Creating a better database of objects is Cohen’s main goal. However, there’s only so much processing that can be done on the phone, so Cohen can’t just add a ton of new labels.

Instead, Cohen plans to add a button to the interface. Pressing this button will allow a user to say “this object is important to me.” That information will be sent to a server, which will take all the new data and essentially train a new neural network.

Training that network could take up to two weeks. When this is completed, the server will feed the result back into the app as a newer version.

Over time, this process will create a huge crowdsourced collection of images with labels that are relevant to users, resulting in higher-quality, more relevant predictions.
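As a rough illustration of the loop described above, the server-side step of deciding which crowdsourced labels go into the next model might look something like this. It is a sketch under assumptions: the article does not say how labels would be chosen, so ranking by request count, the `max_labels` cap (standing in for the phone's processing limit), and every name here are hypothetical.

```python
from collections import Counter


def select_labels(submissions, max_labels):
    """Pick the most-requested labels, capped at what the phone can handle.

    submissions: iterable of label strings, one per "this is important" press.
    max_labels: assumed cap on how many labels the on-device model can hold.
    """
    # Normalize so "Wall" and "wall " count as the same request.
    counts = Counter(label.strip().lower() for label in submissions)
    return [label for label, _ in counts.most_common(max_labels)]


# Hypothetical submissions from users.
submissions = ["wall", "Wall", "door", "coffee mug", "door", "wall", "stairs"]
chosen = select_labels(submissions, max_labels=2)
print(chosen)
```

Capping the list reflects the constraint mentioned earlier: only so much processing can be done on the phone, so not every requested label can be added.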

Getting to Know BlindTool

We recently talked with Cohen in order to better understand BlindTool and the ideas behind it.

Futurism: How did you come up with the idea for this device?

JPC: Many years ago, I worked with a blind programmer and learned all about how things worked for the blind. We talked about things, like how math was difficult for the blind, and I was able to introduce him to LaTeX, which allows the blind to read and write math with their screen reader. It is also used by mathematicians in research, so it is really convenient.

I felt there was a better cane that could be made.

I wanted to make a cane that would work better and explain the things in front of the person, like “The doorway is 4 feet ahead and there is a step up.” At the time, I didn’t know about any of the technology that is used in the app.

Futurism: Did anything in particular motivate you to create it?

JPC: Recently, when I was learning a machine learning library called mxnet, which could be run on Android, it clicked that I could turn this technology into a tool for the blind that could be deployed at a massive scale on almost all Android smartphones. I knew that it was possible, and I rushed to make it.

I think a lot of motivation comes from my fear of becoming blind.

As I was making it, I would say to myself that, if I became blind tomorrow, I could use this.

Futurism: How do you see this tech being used from a practical standpoint? Essentially, explain it like I am 5: what will it allow people to do?

JPC: Currently, it only detects objects that take up the entire camera’s view. I would put my phone in my shirt pocket with an earphone in, look around on a table, and have it say what was there. It worked okay for that, given that it didn’t get a good look at the objects. I imagine that users can use it in a kitchen while cooking to help them sort out what is on the table, or use it in an office when looking for a keyboard. Or just to get an extra sense of what something they can feel looks like to the tool.

Futurism: Why did you turn to Kickstarter for funding as opposed to other avenues?

JPC: I thought Kickstarter would be the easiest method to fund further development.

Selling it to blind users is something I am not comfortable with. Without a monetization strategy, I don’t think an investor would give me any money.

In the past, I worked on applying for grants from the government and private groups for a different project. We would put in so much effort only to be told no, even when we did the quick proposals. To submit to the NSF (National Science Foundation), you need to get so many identification numbers from different groups… I feel it is a complete mess, and it took so much time.

I think you have to specialize in grant submission to go that route, or hire someone who does.

So I feel Kickstarter takes the least amount of time on my part, so I can continue to work on all the other things I want to work on.

Futurism: Finally, Star Wars or Star Trek, and who is your favorite character?

JPC: I think it is Han Solo, even though he probably is a Cylon.

What Does My Investment Get Me?

- $1- You will be credited as a supporter in the app

- $9- Your name will be listed in the app as someone who funded the project

- $50- You will have access to an early version of the next release, and your name will be listed in the app as someone who funded the project.

- $500- You can set the default name of an object. “Instead of speaking ‘Coffee Mug’ the app will say ‘Cup o Joe’ or ‘Cup o Frank’ or even ‘OMG COFFEE.’” These names can be changed by the user afterward, so you won’t detract from day-to-day usage, but the default names will be yours.

The project goal is $5,000 by Saturday, February 6 at 11:00 AM EST.