Do You Read Me?

Most of us are able to intuitively understand human facial expressions: in conversation, millions of tiny muscle movements change our eyes, mouth, head position and more to signal to our fellow humans what we’re thinking. These unconscious movements are what make us human, but they also make it exceptionally difficult for robots to imitate us — and make those that try seem creepy, as they enter the “uncanny valley.”

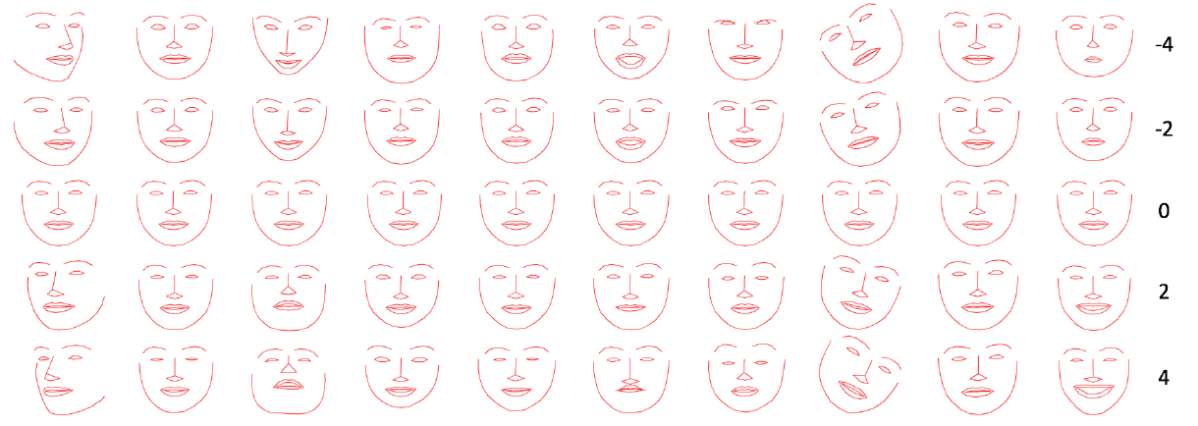

Researchers at Facebook’s AI Lab have brought us one step closer to overcoming this obstacle. They developed an animated bot controlled by an artificial intelligence algorithm that was designed to monitor 68 points on the human face for changes during Skype conversations. Over time, the AI learned to select the appropriate reaction to what it was seeing, such as a nod of agreement, a blink of surprise, or a laugh when its interlocutor seemed to find something funny. Volunteers who then watched the bot reacting to human conversation judged its reactions to be as natural as those of a human.

The lab’s research on this bot will be presented at the International Conference on Intelligent Robots and Systems at the end of September.

AI Next Steps

Facebook’s bot still displays its reactions on a very basic animation, so the algorithm may not yet be able to make a direct leap to more realistic humanoid robots. Goren Gordon, a Tel Aviv University AI researcher, told New Scientist that it will also be important for AI to actually understand facial communication, not just imitate it. “Actual facial expressions are based on what you are thinking and feeling,” Gordon said.

Even so, it increasingly looks like we’re approaching an era in which technology like AI makes robots more like us, and more difficult to tell apart from us. The Facebook AI lab has already seen chatbots create their own language, while researchers at both the University of Cambridge and the Massachusetts Institute of Technology have developed systems that can determine a human’s pain level from their facial expressions.

With such advancements, it might be easy to side with Elon Musk’s view that AI is a tremendous threat to human life, or at least that we wouldn’t get along with smart AI very well. But with other AI-powered Facebook bots already working to help improve users’ mental health, it is possible that making robots more like us could help us to help ourselves.