The Pen Is Mightier

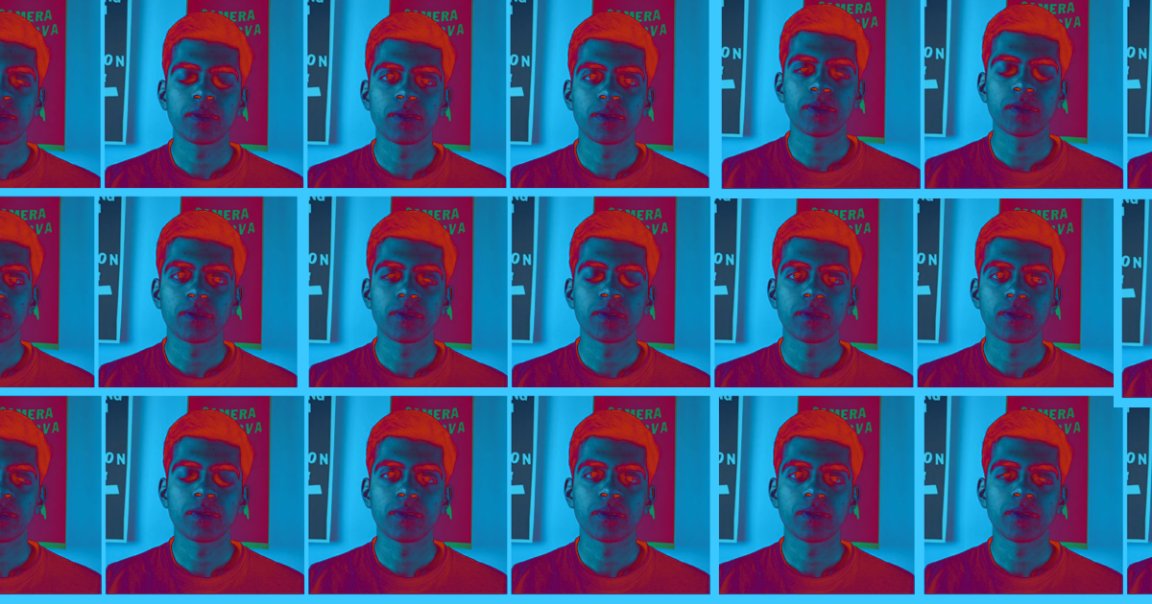

If you can type, you can now create a convincing deepfake.

Recent advances in artificial intelligence have made it far easier to create video or audio clips in which a person appears to be saying or doing something they didn’t actually say or do.

Now, a team of researchers has developed an algorithm that simplifies deepfake creation to a terrifying degree: edit a clip’s transcript, and the video’s subject appears to “say” the new words. Even its creators are concerned about what might happen if the tech falls into the wrong hands.

Intelligent Deception

The researchers — who hail from Stanford University, Princeton University, the Max Planck Institute for Informatics, and Adobe — detail how their new algorithm works in a paper published to Stanford scientist Ohad Fried’s website this week.

First, the AI analyzes a source video of a person speaking, but it isn’t just looking at their words — it’s identifying each tiny unit of sound, or phoneme, the person utters, as well as what they look like when they speak each one.

There are only about 44 phonemes in the English language, and according to the researchers, as long as the source video is at least 40 minutes long, the AI will have enough data to gather all the pieces it needs to make the person appear to say anything.

After that, all a person has to do is edit the transcript of the video, and the AI will generate a deepfake that matches the rewritten transcript by intelligently stitching together the necessary sounds and mouth movements.
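To make the idea concrete, here is a rough Python sketch of just the lookup step: index where each phoneme occurs in the source footage, then pick one source clip per phoneme for the edited words. It is not the researchers’ code. The tiny pronunciation table, the timestamps, and the function names are all made up for illustration, and the real system also has to blend mouth movements and render the final video, which this toy ignores.

```python
from collections import defaultdict

# Hypothetical, hand-written pronunciations (ARPAbet-style symbols).
# A real system would use a full pronunciation dictionary.
PRONUNCIATIONS = {
    "french": ["F", "R", "EH", "N", "CH"],
    "toast": ["T", "OW", "S", "T"],
}


def build_phoneme_index(aligned_segments):
    """Map each phoneme to the (start, end) times where the speaker utters it."""
    index = defaultdict(list)
    for phoneme, start, end in aligned_segments:
        index[phoneme].append((start, end))
    return index


def plan_edit(new_words, index):
    """Pick one source clip per phoneme needed to 'say' the new words."""
    plan = []
    for word in new_words:
        for phoneme in PRONUNCIATIONS[word.lower()]:
            if phoneme not in index:
                raise LookupError(f"source video never contains phoneme {phoneme!r}")
            # Naively reuse the first occurrence; a real system would choose
            # the occurrence that best matches the surrounding sounds.
            plan.append((phoneme, index[phoneme][0]))
    return plan


# Made-up alignment data: (phoneme, start_seconds, end_seconds).
segments = [
    ("F", 0.0, 0.1), ("R", 0.1, 0.2), ("EH", 0.2, 0.3), ("N", 0.3, 0.4),
    ("CH", 0.4, 0.5), ("T", 0.5, 0.6), ("OW", 0.6, 0.7), ("S", 0.7, 0.8),
]
index = build_phoneme_index(segments)
plan = plan_edit(["French", "toast"], index)
for phoneme, (start, end) in plan:
    print(f"{phoneme}: reuse source footage from {start}s to {end}s")
```

The sketch also hints at why the length of the source video matters: if the speaker never utters a given phoneme, there is nothing to stitch in, and the lookup fails.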

Speak No Evil

Judging by the researchers’ video of the new algorithm in action, it appears best suited for minor changes. In one example, they demonstrate how the AI can replace “napalm” in the famous “Apocalypse Now” quote, “I love the smell of napalm in the morning,” with the far more innocuous “French toast.”

But even they worry that some might find far more destructive uses for the new algorithm.

“We acknowledge that bad actors might use such technologies to falsify personal statements and slander prominent individuals,” they write in their paper, later adding that they “believe that a robust public conversation is necessary to create a set of appropriate regulations and laws that would balance the risks of misuse of these tools against the importance of creative, consensual use cases.”

READ MORE: New algorithm allows researchers to change what people say on video by editing transcript [The Next Web]
