One Job

Tesla’s Autopilot mode might have something of a death wish.

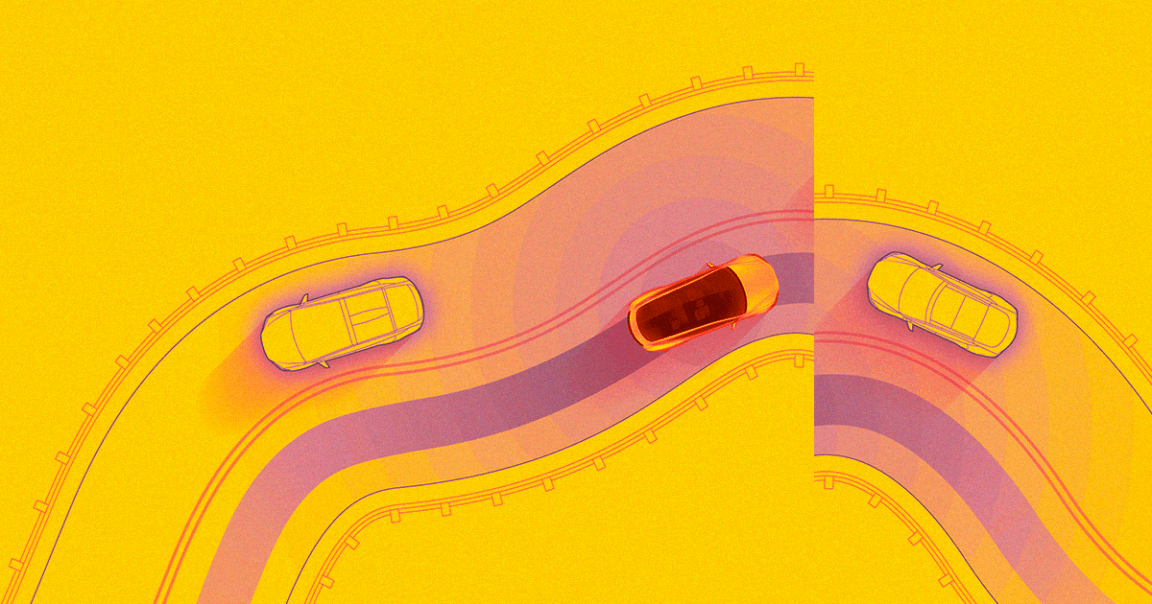

By attaching just three small stickers to the road, nearly invisible to a human driver, researchers from Chinese tech corporation Tencent managed to trick the AI of a Tesla Model S 75 into steering towards oncoming traffic, according to the researchers’ report. It’s a worrisome glimpse of how hackers could endanger riders in self-driving cars.

Manual Override

Tesla’s Autopilot mode is the semi-autonomous feature that allows the car to follow road markings and handle some aspects of driving without human intervention — and it already has a history of risky driving maneuvers.

In this case, Autopilot registered the three small stickers as a lane marking. Because the stickers trailed off to the left, the car’s AI concluded that the lane was veering left when it actually continued straight on.

Achilles’ Heel

This particular exploit is possible because Tesla’s AI engineers designed the system to cope with worn road paint: they trained the Autopilot AI to recognize broken or faded white lines as valid lane markers.

The problem is that three white stickers placed in a row registered in the system as enough of a line to make the car change course, according to Tencent’s report. It’s an ironic case of a safety feature gone awry.
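To see why so few stickers can matter, here is a toy sketch in Python. It is not Tesla’s algorithm or anything taken from Tencent’s report. It assumes a hypothetical detector that accepts any run of at least three bright patches as a lane line and fits a straight line through them. The threshold `MIN_PATCHES`, the function `fit_lane_slope`, and all coordinates are invented for illustration.

```python
from statistics import mean

# Hypothetical threshold: the fewest bright patches that still count as a line.
# A tolerant detector needs a low value so faded paint is not ignored.
MIN_PATCHES = 3

def fit_lane_slope(patches):
    """Least-squares slope of lateral offset against forward distance.

    patches: list of (forward_m, lateral_m) patch centres, in metres.
    Negative lateral values mean "to the left".
    Returns lateral drift per metre travelled, or None if the patches
    are too few (or too bunched up) to define a line.
    """
    if len(patches) < MIN_PATCHES:
        return None
    fwd = [p[0] for p in patches]
    lat = [p[1] for p in patches]
    f_bar, l_bar = mean(fwd), mean(lat)
    denom = sum((f - f_bar) ** 2 for f in fwd)
    if denom == 0:
        return None
    numer = sum((f - f_bar) * (l - l_bar) for f, l in zip(fwd, lat))
    return numer / denom

# Invented data: a faded but straight line, and three stickers angling left.
faded_line = [(5.0, 0.0), (10.0, 0.0), (15.0, 0.0), (20.0, 0.0)]
stickers = [(5.0, 0.0), (10.0, -0.5), (15.0, -1.0)]

straight_slope = fit_lane_slope(faded_line)
sticker_slope = fit_lane_slope(stickers)
too_few = fit_lane_slope(stickers[:2])
```

In this sketch the faded line yields a slope of zero, while the three stickers yield a negative slope, which a lane follower would read as the lane bending left. Raising `MIN_PATCHES` would reject the stickers, but it would also make the detector more likely to ignore genuinely worn markings, which is the trade-off the article describes.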

READ MORE: Three Small Stickers in Intersection Can Cause Tesla Autopilot to Swerve Into Wrong Lane [IEEE Spectrum]
