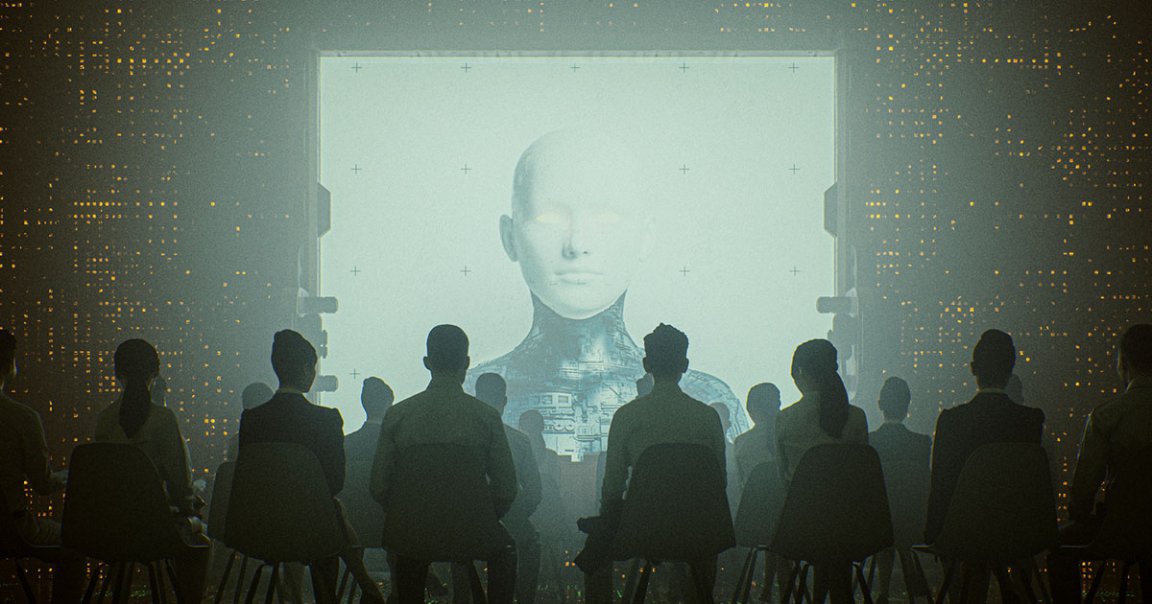

Existential Threat

Will AI one day take over and destroy us all, like Skynet in the “Terminator” franchise?

It’s a fiery debate, and experts are still very much divided. In the latest foray, philosopher and historian Émile Torres writes in The Washington Post that yes, we should be very concerned. Their specific concern is that an artificial superintelligence (ASI), an artificial power that would vastly exceed humanity’s cognitive prowess, could cause humanity’s extinction, since we simply wouldn’t be able to predict or control its actions.

“What if we program an ASI to establish world peace and it hacks government systems to launch every nuclear weapon on the planet — reasoning that if no human exists, there can be no more war?” Torres wrote. “Yes, we could program it explicitly not to do that. But what about its Plan B?”

Slow Down

Of course, there’s a substantial “if” hovering over whether that tech will ever exist. Torres thinks it’s possible, though, given “exponential advances in computing,” and argues that its potential significance alone is reason for concern.

“The success of any one of these projects would be the most significant event in human history,” the historian wrote. “Suddenly, our species would be joined on the planet by something more intelligent than us.”

That means that while it could help us “cure diseases such as cancer and Alzheimer’s, or clean up the environment,” it could also turn against us and possibly choose to wipe us out altogether.

Therefore, before it’s too late, the historian argues that “research on artificial intelligence must slow down, or even pause. And if researchers won’t make this decision, governments should make it for them.”

So, should we heed Torres’ warnings? In a sense, the question feels irrelevant: with regulators unwilling to rein in big tech, we’re probably going to find out what AI is capable of sooner rather than later.

READ MORE: Opinion: One potential side effect of AI? Human extinction. [The Washington Post]
