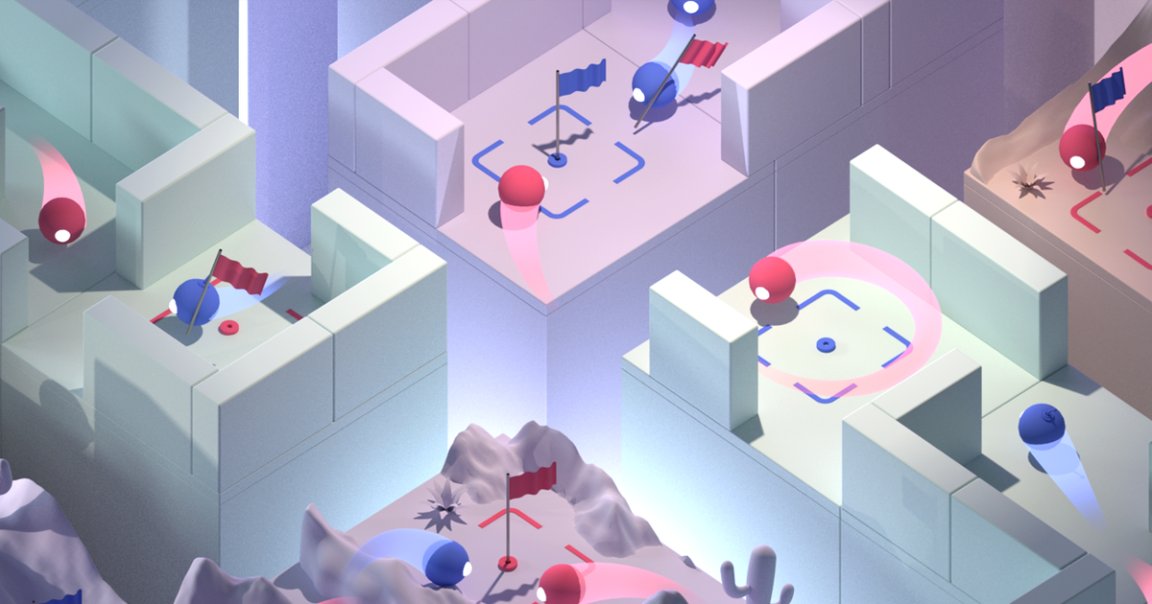

Capture the Flag

It’s old news that artificial intelligence has become a champion at video games like StarCraft II and Dota 2. But now, DeepMind’s AI has figured out how to ace cooperative multiplayer mode as well.

While playing rounds of capture the flag in the classic first-person shooter Quake III Arena, DeepMind’s AI agents were able to outperform human teammates, even with their reaction time slowed down to that of a typical human player. And rather than only teaming up against a group of human players, as AIs have done in Dota 2, the agents were able to play alongside humans as well.

The AI taught itself the skill through a technique called reinforcement learning — essentially, it picked up the rules of the game over thousands of matches in randomly generated environments.

Acing Multiplayer

A paper on the DeepMind team’s research was published today in Science.

“How you define teamwork is not something I want to tackle,” Max Jaderberg, a DeepMind researcher who worked on the project, told The New York Times. “But one agent will sit in the opponent’s base camp, waiting for the flag to appear, and that is only possible if it is relying on its teammates.”

The researchers hope that training an AI to play multiplayer games successfully could help in building similar AI systems that work alongside people in the real world, such as assisting human employees inside massive distribution centers.

READ MORE: DeepMind Can Now Beat Us at Multiplayer Games, Too [The New York Times]
