Salamanders, starfish, and spiders all have the same superpower: they can regrow their limbs. Sadly, humans cannot (yet), which means people with amputations have no alternative but to rely on prostheses.

While today’s artificial limbs are far better than earlier generations, they still have drawbacks. One of those shortcomings might soon be largely eliminated thanks to new research from the joint biomedical engineering program at North Carolina State University and the University of North Carolina at Chapel Hill. The researchers published their study in the journal IEEE Transactions on Neural Systems and Rehabilitation Engineering on Friday.

The ultimate goal with prostheses is to enable the wearer to do everything they could with an organic limb, and just as easily. That means finding a way to translate the wearer’s thoughts into action in the limb — for example, the user thinks about picking up a cup, and the prosthesis picks up the cup.

Most developers rely on machine learning to enable this communication between the brain and a prosthesis. Because the brain of a person with an amputation still thinks the missing limb is intact, it still sends the same signals down to the muscles. By mentally making one motion over and over again, the person can “teach” their prosthetic limb to recognize patterns in muscle activity and respond accordingly. This is an effective technique, but far from ideal.

“Pattern recognition control requires patients to go through a lengthy process of training their prosthesis. This process can be both tedious and time-consuming,” He (Helen) Huang, the paper’s senior author, said in a university news release.

“[E]very time you change your posture, your neuromuscular signals for generating the same hand/wrist motion change,” she continued. “So relying solely on machine learning means teaching the device to do the same thing multiple times; once for each different posture, once for when you are sweaty versus when you are not, and so on.”
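To make the idea of pattern recognition control concrete, here is a minimal sketch, assuming a very simple nearest-centroid classifier over two hypothetical muscle channels. The function names, the channel layout, and every number are invented for illustration; this is not the software used in the study or in any commercial prosthesis.

```python
# Illustrative sketch only: synthetic numbers, not data from the study.
from statistics import mean

def mav_features(window):
    """Mean absolute value of each EMG channel in one time window."""
    return [mean(abs(s) for s in channel) for channel in window]

def train_centroids(examples):
    """examples: list of (label, feature_vector). Returns label -> centroid."""
    grouped = {}
    for label, features in examples:
        grouped.setdefault(label, []).append(features)
    return {
        label: [mean(col) for col in zip(*vectors)]
        for label, vectors in grouped.items()
    }

def classify(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def dist(c):
        return sum((a - b) ** 2 for a, b in zip(c, features))
    return min(centroids, key=lambda label: dist(centroids[label]))

# Two hypothetical channels (flexor, extensor), a few samples per window.
# Each labeled window stands for one repetition of an imagined motion.
training = [
    ("close_hand", mav_features([[0.8, -0.9, 0.7], [0.1, -0.2, 0.1]])),
    ("close_hand", mav_features([[0.9, -0.7, 0.8], [0.2, -0.1, 0.1]])),
    ("open_hand", mav_features([[0.1, -0.2, 0.2], [0.9, -0.8, 0.7]])),
    ("open_hand", mav_features([[0.2, -0.1, 0.1], [0.7, -0.9, 0.8]])),
]
centroids = train_centroids(training)

# A new, unlabeled window is matched against the learned patterns.
new_window = [[0.85, -0.8, 0.75], [0.15, -0.1, 0.2]]
predicted = classify(centroids, mav_features(new_window))
```

In a scheme like this, the classifier only knows the patterns it was shown. If a change in posture or sweat shifts the signals, the wearer would have to record fresh labeled repetitions for that condition, which is the tedium Huang describes.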

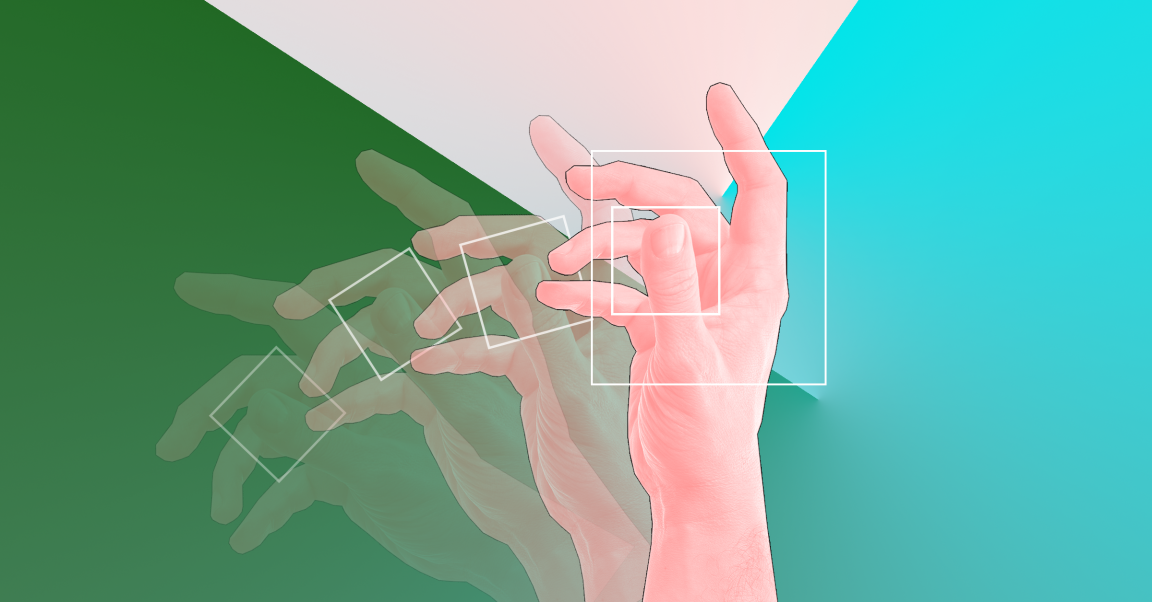

To get around this time-consuming process, Huang and her colleagues developed a user-generic, musculoskeletal computer model of the human forearm, wrist, and hand. First, they fitted the forearms of six able-bodied volunteers with electromyography sensors (sensors that record electrical activity in muscle tissue). Then, they tracked the signals sent when those volunteers made a variety of motions with their hands and arms.

Using this data, the researchers built their computer model, which acts as a software intermediary between a wearer and their prosthesis.

“The model takes the place of the muscles, joints, and bones, calculating the movements that would take place if the hand and wrist were still whole,” said Huang. “It then conveys that data to the prosthetic wrist and hand, which perform the relevant movements in a coordinated way and in real time — more closely resembling fluid, natural motion.”
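The article does not describe the model's internals, so the following is only a toy sketch of the general idea: muscle activity goes in, simulated joint motion comes out. It assumes a single wrist joint driven by one flexor and one extensor, and every constant (`max_torque`, `stiffness`, `damping`, `inertia`) is a hypothetical value picked for readability, not a figure from the paper.

```python
# Illustrative sketch only: a one-joint toy, not the model from the study.
# All constants below are hypothetical values chosen for readability.

def simulate_wrist(flexor_act, extensor_act, dt=0.01,
                   max_torque=1.0, stiffness=2.0, damping=0.5, inertia=0.05):
    """Integrate wrist angle (radians) from two muscle activation sequences.

    Activations are numbers in [0, 1], e.g. normalized EMG envelopes.
    Positive angles mean flexion, negative angles mean extension.
    """
    angle, velocity = 0.0, 0.0
    trajectory = []
    for flex, ext in zip(flexor_act, extensor_act):
        # Antagonist muscles pull in opposite directions around the joint.
        muscle_torque = max_torque * (flex - ext)
        # Passive joint properties pull the wrist back toward neutral.
        passive_torque = -stiffness * angle - damping * velocity
        acceleration = (muscle_torque + passive_torque) / inertia
        # Semi-implicit Euler step: update velocity first, then angle.
        velocity += acceleration * dt
        angle += velocity * dt
        trajectory.append(angle)
    return trajectory

steps = 300  # 3 seconds of simulated time at dt = 0.01
flexion = simulate_wrist([0.6] * steps, [0.1] * steps)
extension = simulate_wrist([0.1] * steps, [0.6] * steps)
```

With a stronger flexor signal the simulated wrist settles into a flexed position, and swapping the two signals mirrors the motion into extension. The point of the sketch is that the output angle follows from physics-like rules rather than from memorized signal patterns, so in principle no per-motion training examples are needed.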

The model allowed both able-bodied volunteers and those with transradial amputations to complete all the expected motions in preliminary testing with minimal training. The researchers are now looking for additional volunteers for more testing before moving on to clinical trials.

That process could take several years, according to Huang. However, if it goes as hoped, this new approach to prosthesis control could usher in the next era in prosthetic technology.