Analog or Digital?

It’s the Holy Grail of computing—a fully functional, multi-purpose quantum computer, furnished with all the computational power needed to tackle the most daunting of challenges.

Freed from many of the limits of classical, binary computing, a quantum computer harnesses quantum effects such as superposition and entanglement to run calculations that would overwhelm conventional machines, and it holds out the promise of simulating nature itself on a microchip.

Just ask the folks at Google. A team of computer scientists at Google’s Santa Barbara research facilities, together with physicists from the University of the Basque Country in Bilbao, Spain, has created a prototype quantum device that is, in principle, scalable and capable of solving an array of problems in various scientific disciplines.

Their device combines the two main approaches to quantum computing: the digital and the analog. The digital method builds a circuit by linking multiple qubits (“quantum bits,” the quantum equivalent of classical bits) through sequences of logic gates arranged to solve the problem in question. It’s a widespread and important technique, especially as it includes a number of error-correction mechanisms.

The analog approach, called adiabatic quantum computing (AQC), is messier but easier. It involves encoding the problem onto the states of a number of qubits, and then gradually adjusting their interactions to mold their quantum states and arrive at a solution. It’s less reliable than the digital method, because random noise can affect the calculations, but it has yielded the first commercial quantum computers, such as the D-Wave devices.
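To make the adiabatic idea a little more concrete, here is a minimal classical simulation in Python. It is a sketch under stated assumptions, not anything the Google team ran: the three-qubit problem, the `fields` and `couplings` values, the transverse-field starting Hamiltonian, and the linear schedule `s` are all invented for illustration. The code blends a simple starting Hamiltonian into a problem Hamiltonian and reads the answer off the final lowest-energy state.

```python
import numpy as np

# Single-qubit identity and Pauli matrices.
I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])

def op_on(op, site, n):
    """Embed a single-qubit operator at position `site` of an n-qubit register."""
    out = np.array([[1.0]])
    for k in range(n):
        out = np.kron(out, op if k == site else I2)
    return out

def hamiltonians(n, fields, couplings):
    """Return (H_start, H_problem) for a toy Ising-style problem (hypothetical)."""
    # Easy starting point: its ground state is an equal mix of all bit strings.
    h_start = -sum(op_on(X, i, n) for i in range(n))
    # The problem is encoded as an energy for every bit string.
    h_problem = sum(h * op_on(Z, i, n) for i, h in enumerate(fields))
    for (i, j), c in couplings.items():
        h_problem = h_problem + c * op_on(Z, i, n) @ op_on(Z, j, n)
    return h_start, h_problem

def sweep(h_start, h_problem, steps=101):
    """Slide s from 0 to 1; return the smallest energy gap and the final ground state."""
    gaps = []
    for s in np.linspace(0.0, 1.0, steps):
        energies, vectors = np.linalg.eigh((1 - s) * h_start + s * h_problem)
        gaps.append(energies[1] - energies[0])
    # `vectors` now belongs to s = 1, i.e. the pure problem Hamiltonian.
    return min(gaps), vectors[:, 0]

n = 3
h_start, h_problem = hamiltonians(
    n, fields=[0.5, -0.3, 0.2], couplings={(0, 1): 1.0, (1, 2): 1.0}
)
min_gap, ground = sweep(h_start, h_problem)
# The most probable bit string in the final ground state is the answer.
answer = format(int(np.argmax(np.abs(ground) ** 2)), f"0{n}b")
print(answer, min_gap)
```

For these made-up numbers the lowest-energy bit string is `101`. The smallest gap matters because, roughly speaking, the narrower it gets, the more slowly a real device has to change the interactions to stay in the lowest-energy state.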

Building a Better Quantum Computer

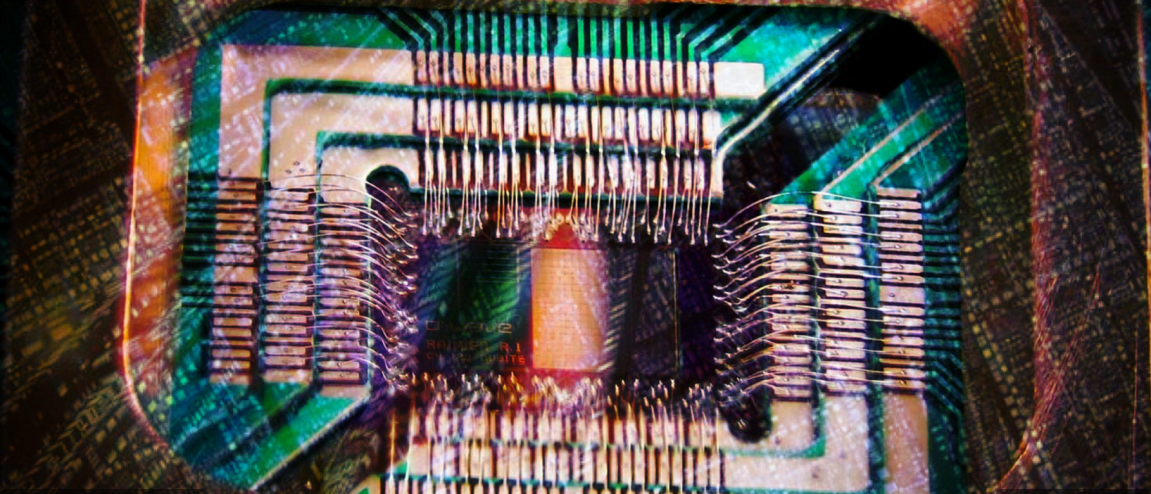

What the Google and Spanish teams achieved was to mesh the ease of the AQC technique with the error-correction abilities of the digital method. They created nine qubits from films of aluminum, deposited them onto a sapphire surface, and cooled the apparatus to a mere 0.02 kelvin, a fraction of a degree above absolute zero, effectively transforming the metal into a superconductor.

Information could then be programmed into the superconducting qubits, and the researchers used “logic gates” to manipulate the qubits digitally into certain states representing a solution to a given problem. In that way, the device draws on the best of both the digital and analog worlds to perform quantum computations.
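One common way to picture this hybrid is to chop a smooth adiabatic sweep into many short, gate-like steps. The Python sketch below simulates that on a classical machine. It is only an illustration under assumptions: the function name `digitized_anneal`, the three-qubit toy problem, the run time, and the number of steps are all hypothetical, and the step-splitting used here (a first-order Trotter decomposition) is a standard textbook technique rather than a description of the team's actual gate sequence.

```python
import numpy as np

def digitized_anneal(fields, couplings, total_time=20.0, steps=200):
    """Approximate a smooth sweep with alternating gate-like steps (toy model)."""
    n = len(fields)
    dim = 2 ** n
    # Energy of every classical bit string under the problem Hamiltonian.
    energies = np.zeros(dim)
    for idx in range(dim):
        # Bit 0 maps to spin +1, bit 1 to spin -1; qubit 0 is the leftmost bit.
        z = [1 - 2 * ((idx >> (n - 1 - q)) & 1) for q in range(n)]
        energies[idx] = sum(h * z[q] for q, h in enumerate(fields))
        energies[idx] += sum(c * z[i] * z[j] for (i, j), c in couplings.items())
    # Start in the equal superposition, the ground state of the driver -sum(X).
    state = np.full(dim, 1 / np.sqrt(dim), dtype=complex)
    dt = total_time / steps
    x = np.array([[0, 1], [1, 0]], dtype=complex)
    for k in range(steps):
        s = (k + 0.5) / steps  # schedule value in the middle of this time slice
        # Step 1: phase "gates" generated by the problem Hamiltonian.
        state = np.exp(-1j * dt * s * energies) * state
        # Step 2: the same X rotation on every qubit, generated by the driver.
        theta = dt * (1 - s)
        rot = np.cos(theta) * np.eye(2) + 1j * np.sin(theta) * x
        tensor = state.reshape([2] * n)
        for q in range(n):
            tensor = np.moveaxis(np.tensordot(rot, tensor, axes=([1], [q])), 0, q)
        state = tensor.reshape(dim)
    return energies, np.abs(state) ** 2

fields = [0.5, -0.3, 0.2]               # made-up single-qubit biases
couplings = {(0, 1): 1.0, (1, 2): 1.0}  # made-up pairwise interactions
energies, probs = digitized_anneal(fields, couplings)
best = format(int(np.argmax(probs)), "03b")
print(best, probs.max())
```

The most likely measurement outcome, `best`, should match the bit string with the lowest entry in `energies`. Making `steps` larger brings the digitized run closer to the smooth sweep, at the cost of more gates.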

“With error correction, our approach becomes a general-purpose algorithm that is, in principle, scalable to an arbitrarily large quantum computer,” explains Alireza Shabani, a member of the Google team.

The new device is still just a prototype, but it shows immense promise, and may just be the tip of the quantum iceberg. Daniel Lidar, an expert on quantum computing, believes 40-qubit devices will be available in a few years.

“At that point it will become possible to simulate quantum dynamics that [are] inaccessible on classical hardware, which will mark the advent of ‘quantum supremacy,’” he says.