On Thursday, the New York Times published a glowing profile of a company called Medvi. The basic premise of the piece is that a single guy named Matthew Gallagher had used AI to rapidly build a pharmaceutical enterprise that’s on track to do nearly $2 billion in sales this year, while hiring only a skeleton crew of humans to operate the vast AI-powered venture. According to the NYT, it’s a stunning achievement that heralds a new era of business; OpenAI CEO Sam Altman, who predicted the rise of this kind of company back in 2024, told the newspaper that he’d “like to meet the guy” behind the project.

“A $1.8 billion company with just two employees?” the NYT rhapsodized. “In the age of AI, it’s increasingly possible.”

The NYT’s tech coverage is generally pretty solid. But the framing of its story, and what it left out, left us pretty stunned. That’s because back in May of last year, we ran our own investigation of Medvi — and not only was what we found far more disturbing than the NYT’s credulous story let on, but the situation has gotten even worse since then.

We first came across Medvi when we saw an AI-generated advertisement plastered at the foot of a local news article. It showed a mangled AI-generated image of an Ozempic package, loaded with misshapen words and a garbled attempt at the logo of Novo Nordisk, the drug company that produces it.

When we clicked the ad, it brought us to Medvi’s website, which promised that visitors could “lose 40 pounds by July with GLP-1 medication.” It was dripping with before-and-after photos showing dramatic weight loss journeys, rapturous testimonials from patients, and authoritative headshots of “incredible doctors we’ve partnered with.”

The closer we looked, though, the sleazier the whole thing started to feel. The smiling models featured on the site were clearly AI-generated, giving an immediate sense of chicanery. And when we contacted one of the doctors who Medvi claimed it was working with, he told us he had no involvement with the company and demanded that it “remove me from their sites.”

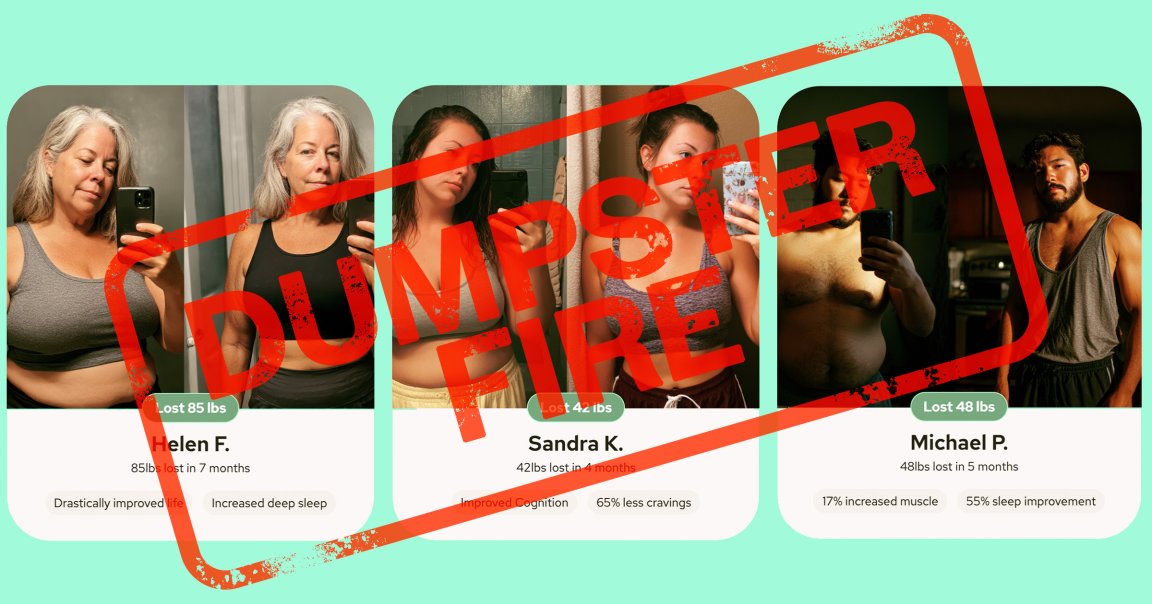

And when we examined the before-and-after photos, we found evidence of even worse behavior.

One showed a customer identified as “Michael P,” who it said had lost 48 pounds while achieving “17 percent increased muscle” and “55 percent sleep improvement,” his protruding stomach replaced with sharply-defined abdominal muscles. But when we dug into the origin of the photo, we found that “Michael” was actually a random Redditor who lost a bunch of weight in the mid-2010s — long before the advent of GLP-1s for weight loss — after giving up alcohol. His striking weight loss photos were featured in Bored Panda and Daily Mail articles dating back to 2017 and 2018. The only thing that was changed was his face, which Medvi appeared to have altered using AI.

The images of the woman identified as “Sandra K,” meanwhile, were stolen from a Newsweek article published in 2021. And the images used for “Melissa C” have been floating around the web for almost a decade; they were even featured in the same Bored Panda piece as the photos of “Michael P.” Every face had been altered except for Melissa’s, and each image was accompanied by tales of how many pounds the person had lost and the health benefits they allegedly experienced as a result.

Medvi’s site also featured a scrolling marquee of media company logos — an impressive list including Bloomberg, Fortune, and even the NYT, among others — insinuating that the service had garnered a large amount of mainstream press. When we checked, though, almost none of those publications had actually covered it. (In reality, the only Medvi coverage we could find that aligned with its claims was a single Forbes listicle, which bore a disclaimer that “we earn a commission from the offers on this page.”)

But readers of the NYT story needed to wade through an astonishing thirty paragraphs of praise for Medvi before they heard about any of those red flags.

“Medvi’s initial website featured photos of smiling models who looked AI-generated and before-and-after weight-loss photos from around the web with the faces changed,” the newspaper acknowledged. “Some of its ads were AI slop. A scrolling ticker of mainstream media logos made it look as if Medvi had been featured in Bloomberg and The Times when it had merely advertised there.”

After another 18 paragraphs, the NYT wrote that Gallagher, after hiring his younger brother in April 2025, finally had the bandwidth to “fix some shortcuts he had initially taken, like swapping out the before-and-after weight-loss photos for ones from real customers.”

“Shortcut” is a telling word. Ctrl-f is a shortcut. Store-bought granola is a shortcut. Hawking drugs online by claiming nonexistent affiliations with doctors and manipulating photos of strangers might indeed be a way to make a lot of money quickly — but whether you see it as a shortcut or fraud is probably a litmus test for your sense of business ethics.

And whatever the NYT might claim, it doesn’t seem like Medvi ever really stopped cutting corners.

One eyebrow-raising aspect of the operation is that Medvi has historically operated under multiple similarly-named domains. Before our first story on Medvi was published, both Medvi.io and Medvi.org featured the fabricated before-and-after images of clearly fake clients. But a few days after we reported about Medvi’s dishonest imagery, archived versions of Medvi.org show that the site dropped the fake transformation images from its homepage. (It’s unclear when Medvi.io, which is now defunct, stopped using the images, if it did at all.) And as of Friday, the day after the NYT dropped its Gallagher profile, Medvi.org no longer featured any before-and-after pictures of purported customers.

But as recently as last month, nearly a year after the NYT said that Medvi had cleaned up its act, an archived version of Medvi.org shows that it was again displaying before-and-after transformations of alleged customers. They bore the same names as before — “Melissa C,” “Sandra K,” and “Michael P” — and again listed how many pounds each person had purportedly lost and the related health improvements they apparently enjoyed. (The YouTuber Stephen “Coffeezilla” Findeisen also caught the discrepancy in a scathing video.)

Even though they had the same names, these people that the site now called “Medvi patients” now looked completely different from the original roundup of Melissas, Sandras, and Michaels. Worse, some of the images now bore clear signs of AI-generation: the new Sandra’s fingers, for example, are melted into her smartphone in one of her mirror selfies. (There is one new customer included in the lineup, a “Helen K,” but she appears to have no fingernails on one hand, another clear sign of AI use.)

“The results speak for themselves,” reads a chunk of text above the seemingly AI-generated images. “Sometimes you have to see it to believe it.”

While digging into Medvi’s current practices, we also stumbled upon yet another domain, Medv.co. The site is emblazoned with Medvi’s logo, and when we clicked on links to the company’s privacy policy and terms of service, we were redirected to Medvi.org.

Medv.co’s landing page also shows before-and-after images of two people. Unlike the alleged clients featured over on Medvi.org and Medvi.io, these people — one a younger shirtless man, the other an older woman with graying hair, both pictured snapping mirror selfies — weren’t given names or health improvements. But their photos each bear telling signs of AI generation, like overly smooth skin, warped smartphones, melted pupils, and distorted background artifacts.

In other words, as of publishing this story — and days after the NYT’s — Medv.co’s landing page still features before-and-after weight loss transformation images of people who do not appear to be real.

The NYT also neglected to mention that Medvi received a strongly-worded warning letter from the Food and Drug Administration (FDA) just two months ago, in February 2026. The warning came amid a broader crackdown on the controversial telehealth world, as Stat News reported last month, which also feels like important context about Medvi’s skyrocketing success in an explosive market that regulators are attempting to rein in.

In the letter, the FDA took issue with numerous Medvi tactics. One compliance failure it noted was the company’s practice of using images of GLP-1 vials and pill bottles with the name “MEDVI” splashed across them, which the regulator argued was misleading to consumers, as it suggested that Medvi was the compounder of the drugs it sells “when in fact it is not.” (Medvi.org has since removed Medvi’s name from these fake vials.)

The FDA also admonished Medvi’s marketing language around some of the murkier pharmaceutical products that Medvi has offered. The letter warned that Medvi’s site had positioned unapproved compounds as “FDA-approved or otherwise evaluated for safety and effectiveness when they have not.” (The letter specifically called attention to claims made on the domain Medvi.io, which is now shut down, though the letter was addressed to Medvi LLC; it’s unclear how much the FDA knows about Medvi’s tangled web of domains.)

“Failure to adequately address any violations may result in legal action without further notice, including, without limitation, seizure and injunction,” the FDA warned Medvi in the letter.

Medvi has also been ensnared in multiple lawsuits and legal actions, including a Racketeer Influenced and Corrupt Organizations Act (RICO) case that accuses its partner OpenLoop, a telehealth company, and a compounding pharmacy of selling a compounded weight loss pill with “no demonstrated mechanism of absorption or efficacy.” Medvi isn’t named as a defendant, but the plaintiff in the case claims to have purchased the drugs via Medvi’s platform.

Dr. Jonathan Slotkin, a neurosurgeon, hospital executive, and investor, called the NYT’s profile of Medvi a “transcript of a Silicon Valley fever dream” and a “byproduct of regulatory lag and consumer desperation.”

The Medvi story “probably needed to be reported as a health story, with the same questions that are asked in health stories,” Slotkin told Futurism. “It didn’t look like a health story to me; it looked like a tech story.”

***

In response to the NYT’s Medvi profile, some readers lit up with excited praise.

“THIS IS CRAZYY!!!!” wrote one X user, while another declared that “right now, the margins are enormous for anyone who moves fast enough.”

Others, though, were quick to raise concerns about Medvi’s ongoing ethical issues. Many cited Futurism’s previous reporting, while others pointed out that, as of the NYT piece’s publication, Meta platforms were crawling with paid Medvi ads promoted by accounts belonging to clearly fake doctors. One alleged doctor being used to promote Medvi’s erectile dysfunction drugs — another burgeoning area of its telehealth business — had the head-scratching name of “Dr. Tuckr Carlzyn MD,” which doesn’t seem to be associated with any real physician.

Indeed, a review by the pharmaceuticals-focused outlet Drug Discovery & Development found the widespread use of fake doctors to promote Medvi drugs, including both semaglutide and erectile dysfunction meds. As Findeisen noted in his video, some of these advertisements also appear to include AI-faked before-and-after weight loss videos.

We reached out to Medvi to inquire about the proliferation of fake doctors, and asked specifically whether Medvi paid to promote these accounts, either directly or through a contracted third-party firm. (According to the NYT’s report, Gallagher hired “media agencies to help buy ads to entice customers.”)

The company didn’t respond to these questions, nor any others we asked.

But after we reached out — and after the flood of public scrutiny on platforms like X, YouTube, and Threads — we discovered that a bunch of the fake doctor accounts promoting Medvi ads had been deleted.

Criticism of the NYT piece has come from all corners of the web, including from many in the tech, venture capital, and health worlds.

“Ok there’s an easily googleable FDA compliance issue, honestly would be hard for the NYT not to see it, so why didn’t they write about it?” asked Sheel Mohnot, a fintech investor at the venture capital firm Better Tomorrow Ventures.

“It’s just an automated GLP-1 prescription mill,” commented the journalist and professor Jeff Jarvis, calling it “nothing to lionize.”

“All in all, glorifying Medvi was not The New York Times’ finest hour,” AI skeptic and cognitive scientist Gary Marcus wrote in a Substack post, adding that Medvi should be seen as a “warning sign — for how AI can be abused.”

Though Medvi has yet to respond to our questions, the company’s founder, Gallagher, has spent the last few days on X defending his company. He complained in one post — seemingly in reference to criticism — that “the most low t [testosterone] guys” are “the loudest online” and the “Karens of the internet.” In another post, he wrote that it’s “actually a little crazy the number of people who form a whole opinion from a headline and then publicly wish horrible things will happen.”

“Details matter,” he continued. “Half of X thinks I’m slinging fake Ozempic out of my garage with a robot doctor it’s wild.” (Recall that we first came across Medvi when we saw an ad depicting an AI-generated box of Ozempic.)

In his posts, Gallagher repeatedly claimed that the fake doctors on social media were the result of poorly policed affiliates run wild, complaining that “watching in realtime as people learn about white label, drop shipping, and affiliate marketing is like seeing cavemen ‘fire bad.’”

Gallagher also had a confusing response to the FDA letter.

“Show the whole letter and you’ll see it was not directed to me, but to an affiliate with outdated verbiage,” he wrote. “We were notified and had it removed.” (The FDA letter was addressed to “MEDVi, LLC dba MEDVi.”)

“White label telemedicine is a huge benefit with a net positive for humanity,” Gallagher continued. “It has been the driving force behind big pharma lowering prices and making healthcare accessible to everyone from home. Low energy people think offering life-changing weight loss medication, prescribed by a doctor, is a trendy ‘pill mill.’ Wait til you see what’s possible with longevity services and peptides. I’m bullish on humanity. If you are too, I’ll help you build.”

After this story was published, Medvi issued a statement in response to public scrutiny regarding the FDA warning and the network of fake doctors. Details about that response can be found here.

On a certain level, the NYT is right that it’s pretty fascinating that AI has let a company with just a handful of staff rake in such an astonishing amount of money. But its story seemed determined to ignore or downplay important context about how Medvi got there: by employing a long list of lazy and deceptive tricks to profit massively off consumers desperate for a lower-cost way to access a pharmaceutical widely heralded as a miracle drug.

Or, as the paper of record put it: “shortcuts.”