Reading Faces

It’s become a custom for some protesters to cover their faces during public demonstrations. Now, it seems, technology could outwit them: a team of engineers has created an algorithm that can identify faces that are partially covered.

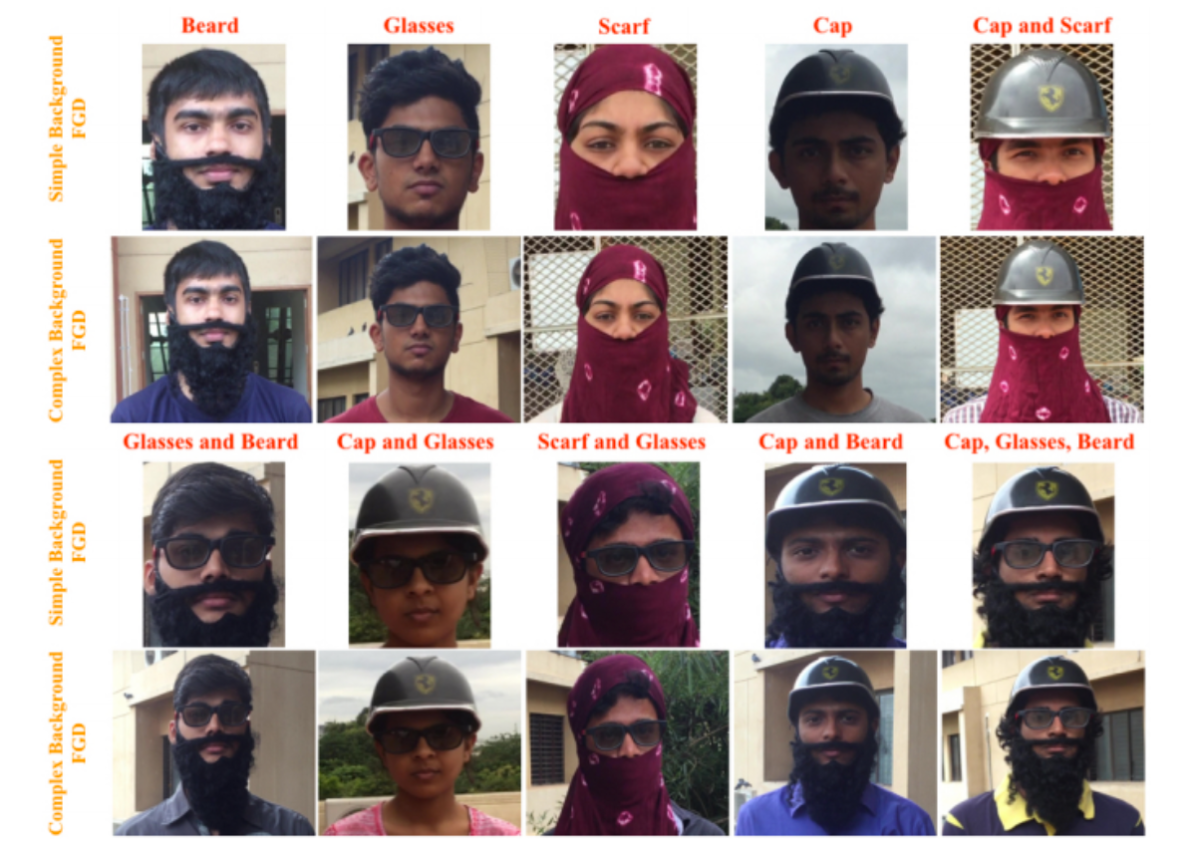

The algorithm identifies faces using angles at 14 different points on the face, according to a paper published on the preprint server arXiv and slated to be presented at the IEEE International Conference on Computer Vision Workshops in October. The researchers trained and validated the algorithm, which relies on a form of artificial intelligence called deep learning, using a dataset of 1500 images of 25 human faces. Each face was partially obscured by one or more of ten disguises (such as sunglasses, a face scarf, or a hat) and placed against one of eight complex backgrounds to simulate real-world photos. When they tested the algorithm on a new set of 500 photos, it accurately identified people wearing hats and scarves 69 percent of the time, far more accurately than comparable techniques currently in use.

The researchers created the algorithm with the intention of unmasking criminals, one of the study authors told Motherboard. And it’s not the first technique to be created with that intention—there are already algorithms that can identify people based on their hair or clothes, or the way they walk.

But Twitter users saw a more insidious use for this algorithm: Groups in power could use it to unmask and persecute those who oppose them.

Yes, we can & should nitpick this and all papers but the trend is clear. Ever-increasing new capability that will serve authoritarians well.

— Zeynep Tufekci (@zeynep) September 4, 2017

Exactly. The AI is property. It enhances its owner’s powers. Imagine the world’s fastest, loyal, unquestioning assistant.

— Jim, VoR (@JimYoull) September 5, 2017

Whose job is it, then, to ensure that an algorithm like this is used only for good?

Don’t Be Evil

Algorithms already control a stunning amount of our lives—the information we see, the jobs we get, how much defendants should pay for bail. That’s unlikely to change as technology is increasingly integrated into the systems around us. And though they are supposed to help us make decisions without our fallible human subjectivity, algorithms often end up perpetuating our preexisting biases. Algorithms that function as black boxes have the potential to leave those biases unchecked, quietly altering how the world operates. “There’s the obvious potential that, if there’s a lack of transparency around an algorithm, it could perpetuate discrimination or stereotypes,” says David Ryan Polgar, a tech ethicist based in Connecticut, in an interview with Futurism.

Because engineers usually follow the instructions of companies, it’s hard to hold them responsible for the consequences of the tools they create, Polgar says. “Engineers by their very nature are trying to solve a direct problem,” he says. “If someone tells me to build a sharper knife, I am closing my blinders and saying OK I’ll build a sharper knife. My objective is not to think of all the possible ways the knife could be misused.” That responsibility falls on the individuals in the company who decided the tool was worth having in the first place, he adds.

No technological advance is free of risk. In other industries, such as medicine, the government vets and tracks the tools that are most susceptible to being abused. But the government has not been nimble enough to make rules about how algorithms should be used, Polgar points out.

As such, it’s been up to companies to make sure the riskiest things don’t see the light of day, Polgar says. That can be dangerous because companies, governed by a small group of leaders and the whims of the market, don’t always make decisions that the general population would agree with. Companies that have gotten it wrong have dealt with substantial blowback: Facebook took heat for automatically censoring an iconic photo from the Vietnam War, and Google was forced to act quickly after its software identified two black people in a photo as “gorillas.”

It’s impossible to know if these situations could have been avoided if the algorithms behind the gaffes were subject to input from a more diverse group, or if they were more transparent to the general public. But it probably wouldn’t have hurt.

From Reaction To Prevention

As the design of these algorithms has gotten more attention, companies may begin to put more emphasis on human needs before the public pushes back, taking a preventative approach to PR fiascos instead of a reactive one. Experts have called for tech companies to employ ethicists, or equal numbers of developers and liberal arts majors; other experts have emphasized that computer science majors should receive training in ethics. In 2015, Elon Musk and Sam Altman unveiled the nonprofit OpenAI, dedicated to transparency and safety for artificial intelligence.

That attention to ethics could prove essential as companies like Amazon, Google, Microsoft, Apple, and Facebook become increasingly powerful, quashing or absorbing competitors. And if customers don’t like what the companies are doing, it’s increasingly difficult to opt out. “Traditionally we would say speak with your wallet, but I don’t think [that] works the same way now,” Polgar says.

“Creators will have blinders on to solve problems. But also responsible companies, tied in with corporate social responsibility, should put in reasonable stopgaps to ensure the likelihood that there is not a dramatic amount of misuse of the product,” he adds.

As for the facial recognition algorithm, Polgar says it touched a bit of a nerve because it cropped up at a moment of “necessary and pivotal protest” in the US. Regardless, the creators would have done well to include some caveats as to how to avoid misuse or abuse.

The algorithm still has a number of limitations. It can’t identify people wearing hard masks, like the Guy Fawkes mask often worn by members of the hacking collective Anonymous. And it’s still not accurate enough to warrant widespread use, as Inverse notes. To improve it, the researchers plan to test their algorithm on real-world scenarios.

As the algorithm advances, its engineers aren’t sure how to prevent their creation from being used for nefarious purposes in the future. But they are sure of their own intentions.

“I actually don’t have a good answer for how that can be stopped,” study co-author Amarjot Singh told Motherboard. “It has to be regulated somehow … it should only be used for people who want to use it for good stuff.”