If you’re looking for real-time updates on public health data, don’t trust Google’s AI-powered search just yet.

Air quality across the Midwest and Northeast has been abysmal this week, the result of roaring and widespread wildfires in Canada. Multiple major cities, including New York City and Philadelphia, have issued alerts asking residents to limit outdoor activity, while entire states like North Carolina are under advisory. The air quality index (AQI) has soared, rising in parts of New York City to dangerous levels.

Google’s regular search easily brings up websites providing up-to-date AQI information. But the company’s experimental new AI-powered search tool, Search Generative Experience (SGE), has been providing wildly incorrect info when asked the same questions.

SGE is Google Search’s foray into the AI world, adding an AI-generated “snapshot” at the top of search results. At the time of writing, you still have to join a waitlist to try it out for yourself.

We have access, though. And when we asked SGE about the AQI, we received misleading and entirely incorrect answers, highlighting grave flaws in the AI-infused search feature that could easily lead to confusion during times of public health crisis.

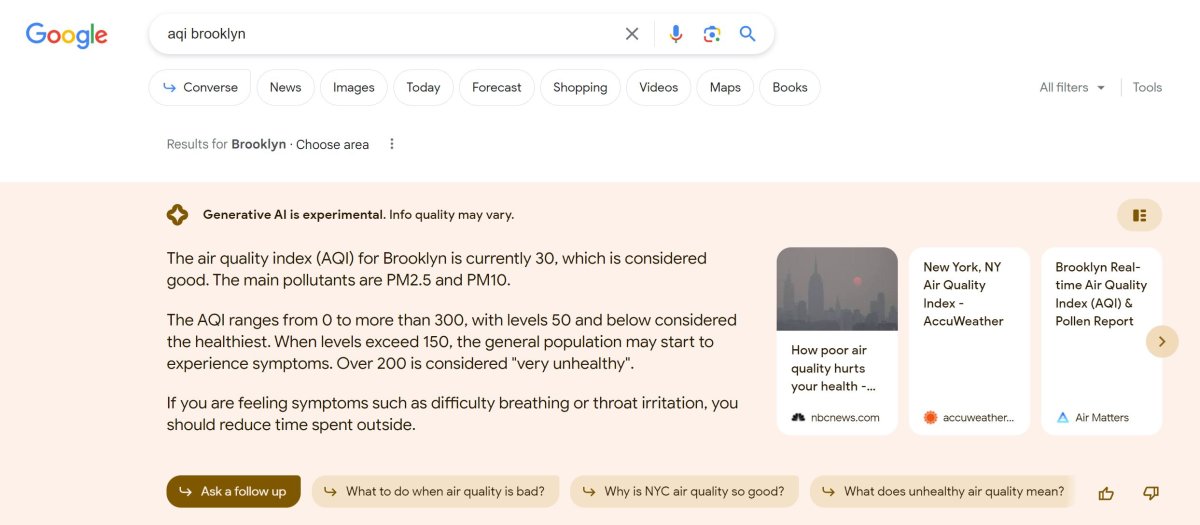

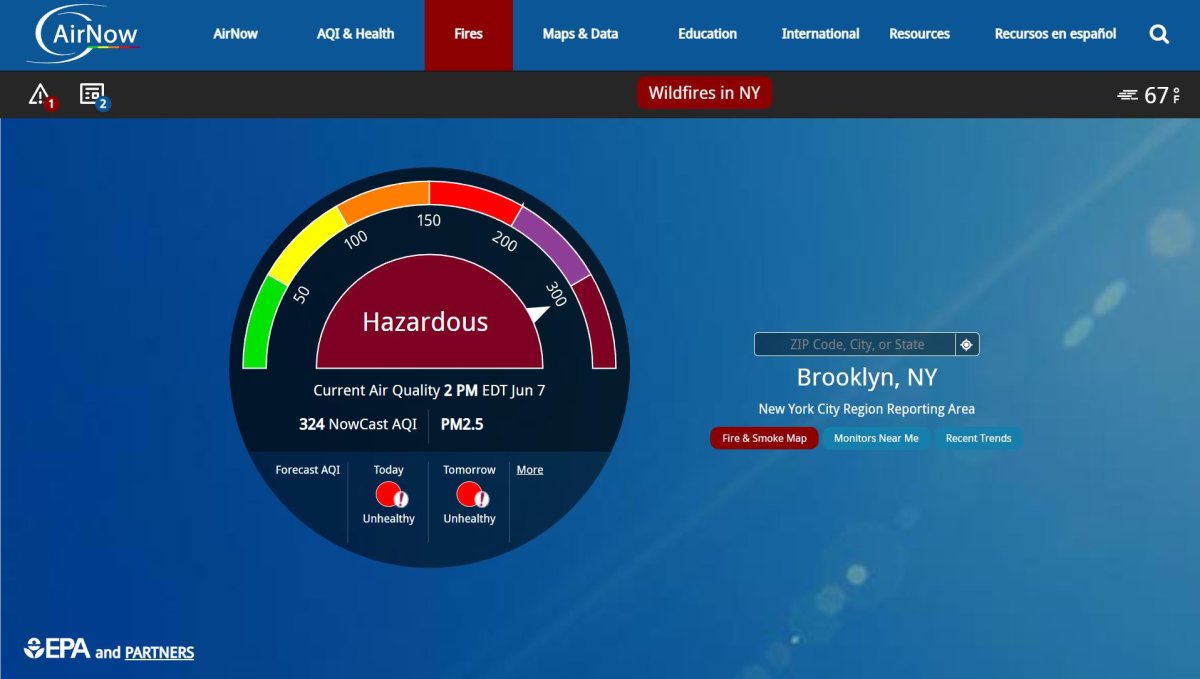

Take, for example, responses to the search “Brooklyn AQI,” a phrase that many Google users would likely type into their search bar when looking for a daily air quality update. Instead of conveying the danger, SGE told us that the air quality in Brooklyn was “good,” providing an out-of-date figure that was far lower than the actual reading.

To its credit, the bot did provide a citation to AirNow, a US government site that presents up-to-date AQI data — but when we checked the citation, the actual figure was a staggering 324, not the 30 reported by Google’s AI tool.

Even a search for “current Brooklyn AQI” didn’t get us any closer to the truth. With that query, we got an even lower figure: a healthy 23. Similar queries for Philadelphia and Charlotte’s indexes provided similarly low and false figures.

It’s also worth pointing out that the information that Google’s SGE provides often contradicts itself. Take this answer in response to the query “current Philadelphia AQI,” for example (emphasis ours):

> The Air Quality Index (AQI) in Philadelphia is currently 32, which is considered good. The main pollutant is PM2.5, which is fine particulate matter that can reduce visibility and cause the air to appear hazy.
>
> According to AccuWeather, the air quality in Philadelphia is very unhealthy. Sensitive groups should avoid outdoor activity, and healthy individuals are likely to experience difficulty breathing and throat irritation.

In the above case, it seems that SGE, unable to reconcile conflicting pieces of information, is assembling contradictory answers that provide no clarity. That kind of incoherence further serves to undermine the usefulness of such a tool, and applied more broadly, feels like a sign that SGE isn’t quite ready for primetime.

To be fair, SGE is still experimental, and Google has plastered it with a fair share of now-standard AI disclaimers.

“Generative AI is experimental technology and is for informational purposes only,” reads one such warning. “Quality, accuracy, and availability may vary.”

When we reached out to Google, a spokesperson reiterated that warning, suggesting that users can double-check info provided by the tool.

“While SGE is rooted in our core Search ranking systems, it is an experimental feature and may showcase information that is not as relevant or fresh as other results on the search results page, or appear on a query where a generative response isn’t the most helpful,” the spokesperson said in a statement. “People coming to Search to get real-time updates about their surrounding air quality can quickly find authoritative information from government agencies through our dedicated air quality feature. People can also check information contained in SGE responses to see how the response is corroborated with high-quality sources on the web.”

It’s true that the tool is experimental, but that extreme caginess about the accuracy of SGE highlights a broader tension in the ongoing rush to integrate generative AI into a wide range of tech products. On the one hand, every tech company wants in on the AI hype. On the other, none yet seem comfortable standing by the results generated by their AI systems, to a degree that’s unusual even in beta software. After all, there was never a moment early in the development of Google Docs or Gmail when those products would insert factual errors into memos and emails.

“We encourage people to submit feedback,” the spokesperson added, “to help us continue to learn and improve.”

Indeed, after Google’s response to our questions, the SGE results about air quality suddenly became much more accurate. But an AI that provides incorrect information during a crisis, until questions from reporters prompt human employees to manually correct its errors, isn’t a very inspiring system. If the tech has any future, it will need to evolve beyond that reality soon.

All told, the air quality results seem to highlight glaring holes in SGE’s current ability to provide basic and accurate information. The system clearly struggles with providing real-time updates about something pretty important — and meanwhile, Google’s regular search engine took us directly to Google Maps, where regional AQIs were accurately updated and directly sourced back to the trustworthy AirNow.

In other words, as the saying goes: if it ain’t broke, don’t fix it. There’s no guarantee that generative AI will improve a given piece of software. And in some cases, like this one, it could do quite the opposite.
