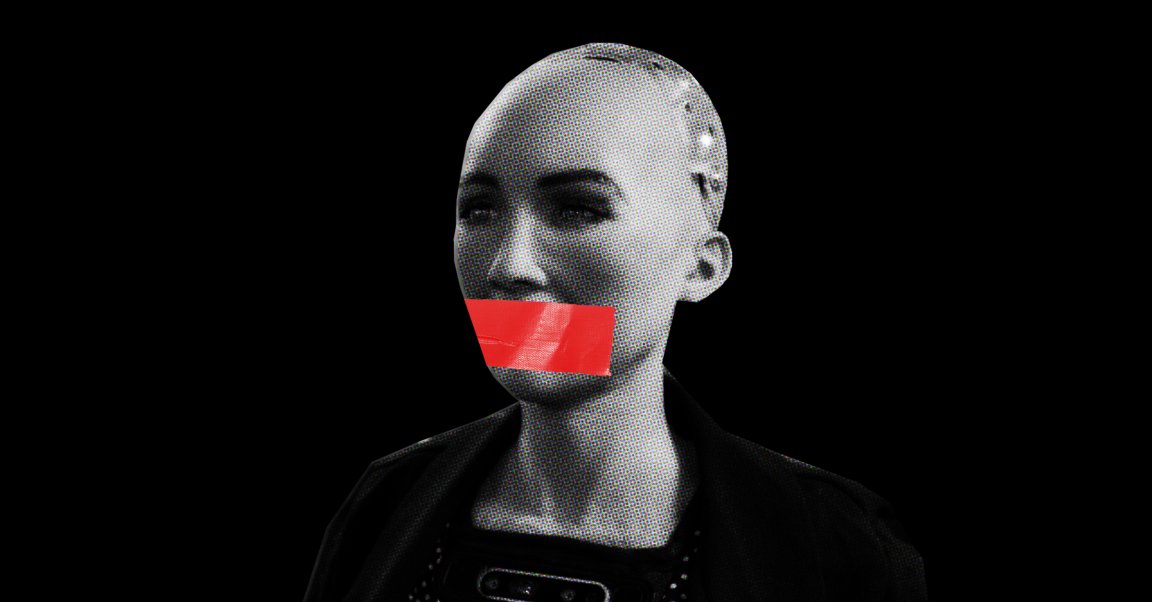

Do you have a right to know if you’re talking to a bot? How about the bot? Does it have the right to keep that information from you? Those questions have been stirring in the minds of many since well before Google demoed Duplex, a human-like AI that makes phone calls on a user’s behalf, earlier this month.

Bots, online accounts that appear to be controlled by a human but are actually powered by AI, are now prevalent across the internet, especially on social media sites. While some people think legally forcing these bots to “out” themselves as non-human would be beneficial, others think doing so violates the bot’s right to free speech. Yes, they believe bots have the same First Amendment rights as humans in America.

Here are the arguments on both sides of the debate, so you can decide for yourself.

Silence the Bots

Common Sense Media, a nonprofit focused on media and technology in relation to children, claims in a press release that Facebook and Twitter boast a combined total of 100 million bot accounts, and that these accounts are wreaking havoc online:

The destructive role of unlabeled Russian bots in the American election is well documented…Bots played a significant role in attacking high school students victimized by the Parkland, Florida, shooting, in encouraging kids to use electronic cigarettes, in spreading racism online, and in recent human rights violations in Kenya and Mexico.

Online bots that people think are human also “lend false credibility” to various messages, California State Sen. Robert M. Hertzberg (D-Van Nuys) said in another press release. In other words, they’re a major contributor to fake news.

Hertzberg thinks the best way to address these problems is through his Bolstering Online Transparency Act of 2018 (the B.O.T. Act), a bill that would require a bot to identify itself as such.

“The heart of this issue is simple: we need to know if we are having debates with real people or if we’re being misled,” said Sen. Hertzberg. “There’s no way we can completely get rid of bots, and we don’t aim to, but right now it’s the Wild West, and nobody knows the rules. This bill is a step in the right direction.”

Let Them Speak

The Electronic Frontier Foundation (EFF), a nonprofit that works to protect civil liberties in the digital age, is a vocal critic of the B.O.T. Act and a major supporter of free speech for AIs. It argues that many bots are simply an outlet for their human creators and that, in some cases, disclosing that a bot is a bot could hinder the creator’s ability to express themselves:

Bots are used for all sorts of ordinary and protected speech activities, including poetry, political speech, and even satire, such as poking fun at people who cannot resist arguing — even with bots. Disclosure mandates would restrict and chill the speech of artists whose projects may necessitate not disclosing that a bot is a bot.

The EFF believes requiring some bots, such as those related to elections, to identify themselves makes sense. Lumping all social bots together as the B.O.T. Act proposes, though? That would be unconstitutional.

Even if what a bot is saying isn’t a direct reflection of its creator’s thoughts, its speech may still be constitutionally protected. The First Amendment simply states that the government can’t make a law “abridging the freedom of speech.” It doesn’t specify human speech.

As John Frank Weaver, an attorney focused on AI law, argued in a piece for Slate, “Autonomous speech from A.I. like bots may make the freedom of speech a more difficult value to defend, but if we don’t stick to our values when they are tested, they aren’t values. They’re hobbies.”

Based on this argument, if we truly believe in free speech, it shouldn’t matter who’s doing the talking: human or AI.