Understanding AI Drivers

Autonomously driven vehicles are making headway thanks to artificial intelligence (AI) software that gives these cars self-driving capabilities. Yet while developers know how to build these systems (by training neural networks with deep learning algorithms), how the systems actually process information and reach their decisions remains largely unknown, according to a report by the MIT Technology Review.

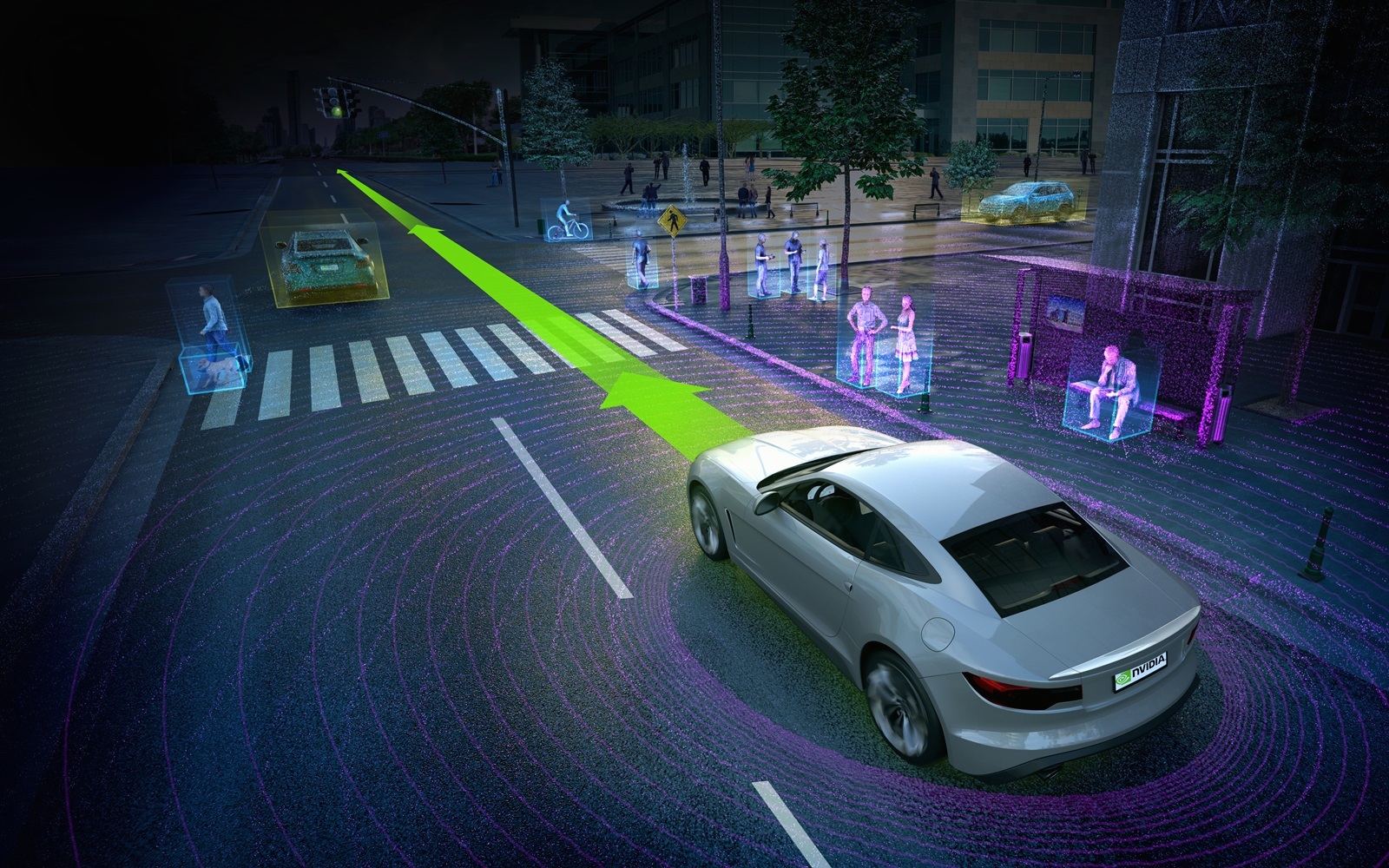

One company wants to change that by offering a peek at what happens inside its self-driving AI software. Chip maker Nvidia, which has been developing its own self-driving AI systems, has found a way to explore how its AI actually drives a car.

“To gain insight into how the learned system decides what to do, and thus both enable further system improvements and create trust that the system is paying attention to the essential cues for safe steering, we developed a simple method for highlighting those parts of an image that are most salient in determining steering angles,” Nvidia’s researchers wrote in a paper.
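The quote describes the highlighting method only in outline, so the sketch below does not reproduce Nvidia's code. It is a hypothetical, simplified illustration of one way such a salience mask can be built: average each convolutional layer's feature maps into a single map, start from the deepest layer, and repeatedly upscale the running mask to the next-shallower layer's resolution and multiply the two pointwise, so that only regions active at every depth stay bright. The nearest-neighbor upscaling, the nested-list representation of feature maps, and the names `average_maps`, `upscale`, and `salience_mask` are all assumptions chosen for brevity.

```python
from typing import List, Tuple

# A 2-D grid of activations, stored as rows of floats (hypothetical representation).
Map = List[List[float]]


def average_maps(feature_maps: List[Map]) -> Map:
    """Collapse one layer's feature maps (channels) into a single averaged map."""
    n = len(feature_maps)
    rows, cols = len(feature_maps[0]), len(feature_maps[0][0])
    return [[sum(fm[r][c] for fm in feature_maps) / n for c in range(cols)]
            for r in range(rows)]


def upscale(m: Map, rows: int, cols: int) -> Map:
    """Nearest-neighbor upscaling to rows x cols (an assumed stand-in for a learned or fixed deconvolution)."""
    src_rows, src_cols = len(m), len(m[0])
    return [[m[r * src_rows // rows][c * src_cols // cols] for c in range(cols)]
            for r in range(rows)]


def salience_mask(layers: List[List[Map]], input_shape: Tuple[int, int]) -> Map:
    """Build a mask of input pixels that stay active through every conv layer.

    layers[0] is the shallowest convolutional layer and layers[-1] the deepest;
    each entry is that layer's list of feature maps.
    """
    averaged = [average_maps(layer) for layer in layers]
    mask = averaged[-1]
    # Walk from the deepest layer toward the input, keeping only regions that
    # are active both in the running mask and in the layer below it.
    for below in reversed(averaged[:-1]):
        mask = upscale(mask, len(below), len(below[0]))
        mask = [[a * b for a, b in zip(mask_row, below_row)]
                for mask_row, below_row in zip(mask, below)]
    mask = upscale(mask, *input_shape)
    # Normalize to [0, 1] so the mask can be overlaid on the camera image.
    peak = max(max(row) for row in mask)
    if peak > 0:
        mask = [[v / peak for v in row] for row in mask]
    return mask


if __name__ == "__main__":
    # Toy example: a 4x4 shallow layer that is uniformly active, and a 2x2 deep
    # layer that only fires in its top-left cell.
    shallow = [[[1.0] * 4 for _ in range(4)]]
    deep = [[[1.0, 0.0], [0.0, 0.0]]]
    for row in salience_mask([shallow, deep], input_shape=(8, 8)):
        print(" ".join(f"{v:.0f}" for v in row))
```

In the toy run, only the top-left quarter of the 8x8 "image" lights up, because that is the only region the deep layer responds to. Multiplying masks across depths, rather than looking at one layer alone, is what suppresses regions that excite early layers (edges, texture) but never influence the deeper layers that feed the steering decision.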

Driving Like Humans, Only Better

Nvidia’s neural network “learned” by observing human drivers. “The network recorded what the driver saw using a camera on the car, and then paired the images with data about the driver’s steering decisions,” Nvidia’s Danny Shapiro wrote in a blog post. Nvidia’s research has shown that its AI system tends to focus on road edges, lane markings, and parked vehicles, much as human drivers do. It even ignores parts of the scene that are irrelevant to driving. Avoiding distractions, perhaps?
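The data-collection step Shapiro describes, pairing each camera image with the driver's steering at that moment, can be sketched as a timestamp join. The sketch below is hypothetical: the record layout, the nearest-timestamp matching rule, the 50 ms tolerance, and the names `Frame`, `SteeringReading`, and `pair_frames_with_steering` are assumptions for illustration, not details of Nvidia's pipeline.

```python
import bisect
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Frame:
    timestamp_ms: int   # capture time of the camera image
    image_path: str     # where the image is stored (hypothetical field)


@dataclass
class SteeringReading:
    timestamp_ms: int
    angle_deg: float    # steering angle logged from the car (hypothetical field)


def pair_frames_with_steering(
    frames: List[Frame],
    readings: List[SteeringReading],
    max_gap_ms: int = 50,
) -> List[Tuple[str, float]]:
    """Label each frame with the steering angle logged closest to it in time.

    Frames with no reading within max_gap_ms are dropped rather than guessed.
    """
    readings = sorted(readings, key=lambda r: r.timestamp_ms)
    times = [r.timestamp_ms for r in readings]
    pairs = []
    for frame in frames:
        i = bisect.bisect_left(times, frame.timestamp_ms)
        # The nearest reading is either just before or just after the frame.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(readings)]
        if not candidates:
            continue
        best = min(candidates, key=lambda j: abs(times[j] - frame.timestamp_ms))
        if abs(times[best] - frame.timestamp_ms) <= max_gap_ms:
            pairs.append((frame.image_path, readings[best].angle_deg))
    return pairs


if __name__ == "__main__":
    frames = [Frame(0, "f0.jpg"), Frame(100, "f1.jpg"), Frame(500, "f2.jpg")]
    readings = [SteeringReading(10, -2.5), SteeringReading(95, 0.0),
                SteeringReading(300, 4.0)]
    print(pair_frames_with_steering(frames, readings))
```

Here `f2.jpg` is dropped because no reading falls within 50 ms of it, leaving two (image, angle) pairs. Each such pair is one training example for a network that learns to map what the camera sees to how the driver steered; dropping poorly aligned frames is a design choice that trades data volume for label accuracy.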

“What’s revolutionary about this is that we never directly told the network to care about these things,” Urs Muller, Nvidia’s chief architect for self-driving cars, told Shapiro. “I can’t explain everything I need the car to do, but I can show it, and now it can show me what it learned.”

While the research doesn’t fully explain how an AI “thinks,” it offers useful insight. At the very least, it could help us understand how to make AI “think” more like humans, or even better. Nvidia’s self-driving AI has already learned “to recognize features which would be hard to anticipate and program by human engineers, such as bushes lining the edge of the road and atypical vehicle classes.”