Ethical AI

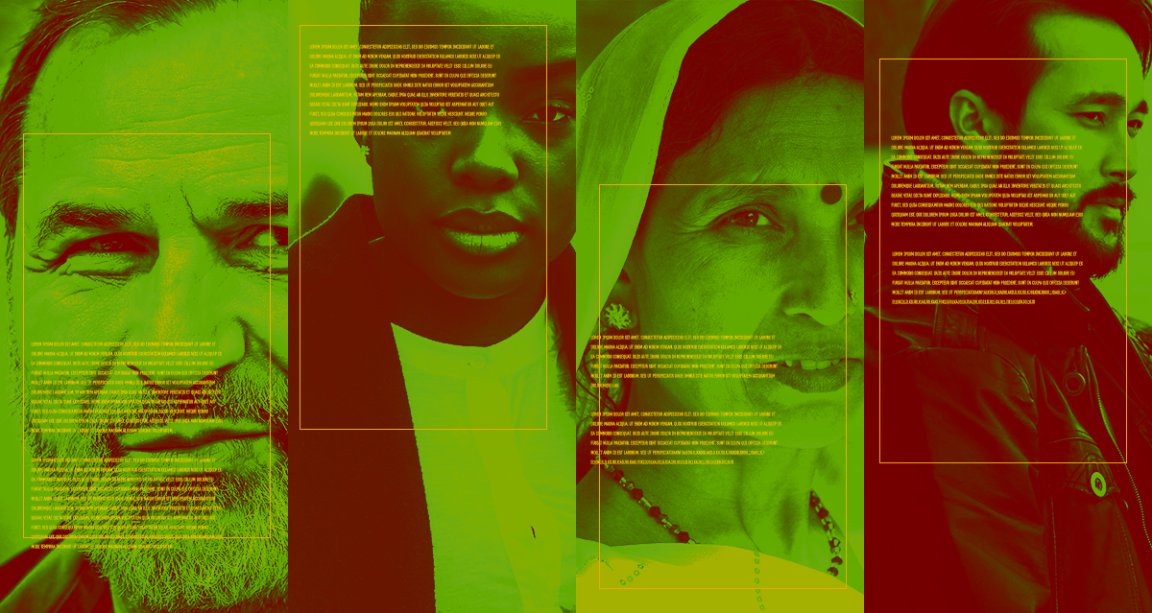

By now, it should surprise no one to hear that artificial intelligence has a bias problem. People program their societal prejudices into algorithms all the time, often without meaning to. For instance, most image-recognition algorithms correctly identify women in flowing white dresses as “brides” but fail to do so for Indian women wearing wedding saris.

To help address that problem, Google created an open challenge called the Inclusive Images Competition. The goal of the contest, MIT Technology Review reports, is to develop data sets and algorithms that produce AI able to recognize a more diverse range of people and customs.

Deep Unlearning

Three months ago, competing teams set out to make image-recognition algorithms more culturally inclusive, both by using more thoughtful labels on the training photos and by improving the algorithms themselves.

The new algorithms were then stress-tested on photos submitted by volunteers around the world. Algorithms that labeled these photos accurately earned more points under Google's metrics. For instance, an algorithm scored better by identifying a woman getting married as a "bride" rather than applying the vague, less helpful default label "person."

Necessary Progress

Each of the top five teams on the leaderboard will receive a $5,000 prize. The team of Samsung AI deep learning engineer Pavel Ostyakov finished first. That is admittedly a small reward for helping to solve a major problem in AI research.

Over the next few days, the winning teams will present their work at the Thirty-second Conference on Neural Information Processing Systems, a major international AI conference.

None of those five teams built a perfectly accurate or unbiased algorithm. For instance, only one of the top five teams built an algorithm that could correctly identify an Indian bride. Still, the contest marks an important step toward inclusive AI that can serve all types of people.

READ MORE: AI has a culturally biased world view that Google has a plan to change [MIT Technology Review]
