AI isn’t all chatbots and meme generators. According to a new study published in the journal Science, it can also serve as a fountain of misinformation, and all it takes is for someone to open the spigot.

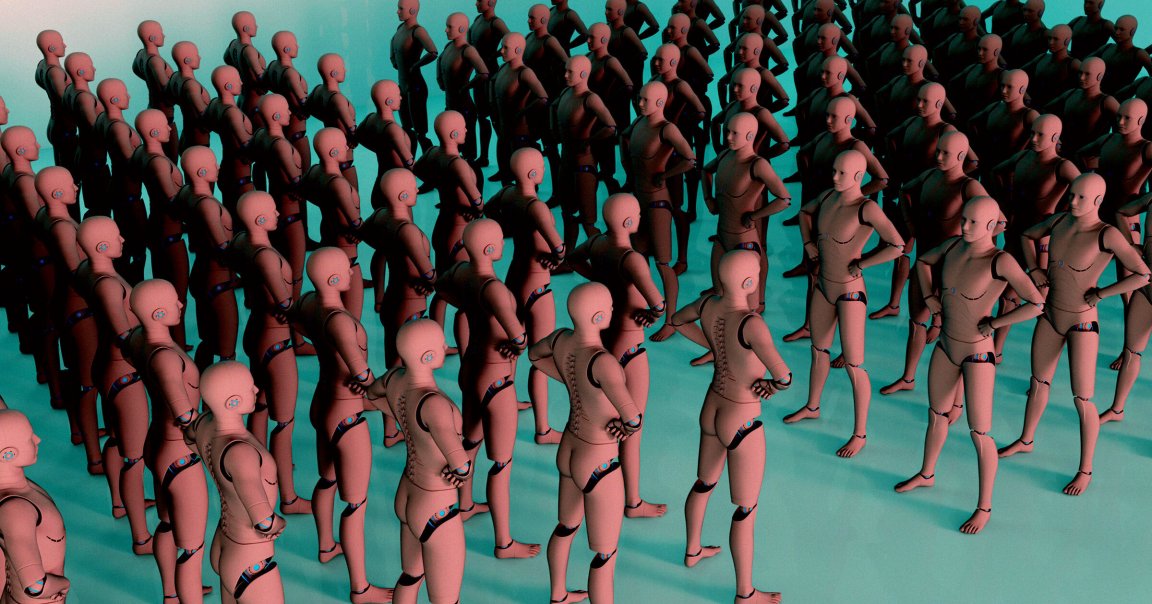

The new research examines the scale at which AI, namely large language models (LLMs) and autonomous agents, can be used to manipulate opinions on a “population-wide level.” The researchers point to a specific threat in the form of AI swarms: massive assemblages of autonomous AI tools that can ape real humans en masse via the internet and social media.

According to the researchers, available evidence indicates “organized social media manipulation has expanded from 28 countries in 2017 to 70 countries” today, in nations ranging from the Philippines to the United States, and plenty of places in between. Incidents of AI-driven misinformation in Brazilian and Irish elections, for example, make it clear that democratic institutions are already under fire from these kinds of threats, which researchers say are growing in sophistication.

“Fusing LLM reasoning with multiagent architectures, these systems are capable of coordinating autonomously, infiltrating communities, and fabricating consensus efficiently,” the paper’s abstract warns.

Legislating against that type of interference raises confounding issues, like whether propaganda botnets count as free speech. Indeed, some of these AI bot networks operate right out in the open as for-profit startups, courting millions from venture capitalists.

Even before AI, the emergence of a few unaccountable social media platforms had created the conditions for large-scale misinformation campaigns to flourish. Those campaigns have already had devastating real-world consequences, like the Facebook-enabled Rohingya genocide in Myanmar.

We’re already seeing previews of what AI-enabled misinformation campaigns look like in practice, often in the form of right-wing actors stirring up fury over welfare recipients or immigrants.

Whatever comes as a result of AI misinformation, it’s clear the path was laid years ago — and there’s alarmingly little political will to turn back now.
