Diagnosing with “The Stethoscope of the 21st Century”

A new kind of doctor has entered the exam room, but it doesn’t have a name. In fact, these doctors don’t even have faces. Artificial intelligence has made its way into hospitals around the world. Those wary of a robot takeover have nothing to fear; the introduction of AI into health care is not necessarily about pitting human minds against machines. AI is in the exam room to expand, sharpen, and at times ease the mind of the physician so that doctors are able to do the same for their patients.

Bertalan Meskó, better known as The Medical Futurist, has called artificial intelligence “the stethoscope of the 21st century.” His assessment may prove to be even more accurate than he expected. Physicians have access to techniques and tests that give them all the information they need to diagnose and treat patients, but they are already overburdened with clinical and administrative responsibilities, and sorting through the massive amount of available information is a daunting, if not impossible, task.

That’s where having the 21st century stethoscope could make all the difference.

The applications for AI in medicine go beyond administrative drudge work, though. From powerful diagnostic algorithms to finely tuned surgical robots, the technology is making its presence known across medical disciplines. Clearly, AI has a place in medicine; what we don’t know yet is its value. To imagine a future in which AI is an established part of a patient’s care team, we’ll first have to better understand how AI measures up to human doctors. How do they compare in terms of accuracy? What specific, or unique, contributions is AI able to make? In what ways will AI be most helpful in the practice of medicine, and could it be harmful? Only once we’ve answered these questions can we begin to predict, then build, the AI-powered future that we want.

AI vs. Human Doctors

Although we are still in the early stages of its development, AI is already as capable as doctors, if not more so, at some diagnostic tasks. Researchers at the John Radcliffe Hospital in Oxford, England, developed an AI diagnostic system that diagnoses heart disease more accurately than doctors at least 80 percent of the time. At Harvard University, researchers created a “smart” microscope that can detect potentially lethal blood infections: the AI-assisted tool was trained on a series of 100,000 images garnered from 25,000 slides treated with dye to make the bacteria more visible. The AI system can already sort those bacteria with a 95 percent accuracy rate. A study from Showa University in Yokohama, Japan, showed that a new computer-aided endoscopic system can detect signs of potentially cancerous growths in the colon with 94 percent sensitivity, 79 percent specificity, and 86 percent accuracy.
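Sensitivity, specificity, and accuracy measure different things, and the Showa figures are easier to interpret side by side. The short Python sketch below computes all three from a confusion matrix; the counts in the example are hypothetical, not the study’s data. It also uses the identity accuracy = sensitivity * prevalence + specificity * (1 - prevalence) to back out the share of positive cases implied by the reported percentages, assuming all three were measured on the same test set (rounding in the published figures makes the result approximate).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # diseased cases the system flagged
    fn: int  # diseased cases the system missed
    tn: int  # healthy cases the system correctly cleared
    fp: int  # healthy cases the system wrongly flagged

    def sensitivity(self) -> float:
        """Share of diseased cases that were flagged."""
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        """Share of healthy cases that were cleared."""
        return self.tn / (self.tn + self.fp)

    def accuracy(self) -> float:
        """Share of all cases classified correctly."""
        total = self.tp + self.fn + self.tn + self.fp
        return (self.tp + self.tn) / total


def implied_prevalence(sens: float, spec: float, acc: float) -> float:
    """Solve acc = sens * p + spec * (1 - p) for the positive share p."""
    if sens == spec:
        raise ValueError("prevalence is not identifiable when sens == spec")
    return (acc - spec) / (sens - spec)


# Hypothetical counts: 100 diseased and 100 healthy cases.
example = ConfusionCounts(tp=94, fn=6, tn=79, fp=21)
print(example.sensitivity(), example.specificity(), example.accuracy())

# Positive share implied by the reported 94 / 79 / 86 percent figures.
prevalence = implied_prevalence(0.94, 0.79, 0.86)
print(f"{prevalence:.2f}")
```

With the reported figures, the identity implies that a little under half of the cases in the test set were positive, which is why the overall accuracy lands between the sensitivity and the specificity.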

In some cases, researchers are also finding that AI can outperform human physicians in diagnostic challenges that require a quick judgment call, such as determining whether a lesion is cancerous. In one study, published in December 2017 in JAMA, deep learning algorithms detected breast cancer that had spread to the lymph nodes more accurately than pathologists working under a time constraint. While pathologists may do well when they have unrestricted time to review cases, in the real world (especially in high-volume, quick-turnaround settings) a rapid diagnosis could make the difference between life and death for patients.

Then, of course, there’s IBM’s Watson: When challenged to glean meaningful insights from the genetic data of tumor cells, human experts took about 160 hours to review and provide treatment recommendations based on their findings. Watson took just ten minutes to deliver the same kind of actionable advice. Google recently announced an open-source version of DeepVariant, the company’s AI tool for parsing genetic data, which was the most accurate tool of its kind in last year’s precisionFDA Truth Challenge.

AI is also better than humans at predicting health events before they happen. In April, researchers from the University of Nottingham published a study that showed that, trained on extensive data from 378,256 patients, a self-taught AI predicted 7.6 percent more cardiovascular events in patients than the current standard of care. To put that figure in perspective, the researchers wrote: “In the test sample of about 83,000 records, that amounts to 355 additional patients whose lives could have been saved.” Perhaps most notably, the neural network also had 1.6 percent fewer “false alarms” — cases in which the risk was overestimated, possibly leading to patients having unnecessary procedures or treatments, many of which are very risky.
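The two figures in the Nottingham quote are related by simple arithmetic. Assuming the 7.6 percent is relative to the number of events the standard method correctly predicted (an interpretation, since the article does not spell out the baseline), the Python sketch below backs out that implied baseline and compares the 355 extra patients with the roughly 83,000 test records. The function name is illustrative.

```python
def implied_baseline(extra_cases: int, relative_gain: float) -> float:
    """Return the baseline count B for which extra_cases == relative_gain * B."""
    if relative_gain <= 0:
        raise ValueError("relative_gain must be positive")
    return extra_cases / relative_gain


EXTRA_PATIENTS = 355    # from the researchers' quote
RELATIVE_GAIN = 0.076   # "7.6 percent more" events predicted (assumed relative gain)
TEST_RECORDS = 83_000   # "about 83,000 records" (approximate)

baseline = implied_baseline(EXTRA_PATIENTS, RELATIVE_GAIN)
share_of_records = EXTRA_PATIENTS / TEST_RECORDS

print(f"Implied events caught by the standard method: about {baseline:,.0f}")
print(f"Extra patients as a share of all test records: {share_of_records:.2%}")
```

Under that reading, the standard method caught roughly 4,700 events, and the 355 additional patients amount to about 0.43 percent of all test records. A 7.6 percent relative gain is not the same as a gain of 7.6 percentage points.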

AI is perhaps most useful for making sense of huge amounts of data that would be overwhelming to humans, which is exactly what the growing field of precision medicine needs. Hoping to meet that need is The Human Diagnosis Project (Human Dx), which is combining machine learning with doctors’ real-life experience. The organization is compiling input from 7,500 doctors and 500 medical institutions in more than 80 countries in order to develop a system that anyone (patient, doctor, organization, device developer, or researcher) can access to make more informed clinical decisions.

Shantanu Nundy, the Director of the Human Diagnosis Project Nonprofit, told Futurism that, in any industry, AI should be seamlessly integrated into the function of the product it serves. “You have to design these things with an end user in mind. People use Netflix, but it’s not like ‘AI for watching movies,’ right? People use Amazon, but it’s not like ‘AI for shopping.’”

In other words, if the tech is designed well and implemented in a way that people find useful, people don’t even realize they’re using AI at all.

For open-minded, forward-thinking clinicians, the immediate appeal of projects like Human Dx is that, counterintuitively, such projects would let them spend less time engaged with technology. “It’s been well-documented that over 50 percent of our time now is in front of a screen,” Nundy, who is also a practicing physician in the D.C. area, told Futurism. AI can give doctors some of that time back by allowing them to offload some of their administrative burdens, like documentation.

In this respect, when it comes to healthcare, AI isn’t necessarily about replacing doctors, but optimizing and improving their abilities.

Mental Health Care with a Human Touch

“I see the value of AI today as augmenting humans, not as replacing humans,” Skyler Place, chief behavioral science officer in the mobile health division at Cogito, a Boston-based AI and behavioral analytics company, told Futurism.

Cogito has been using AI-powered voice recognition and analysis to improve customer service interactions across many industries. The company’s foray into healthcare has come in the form of Cogito Companion, a mental health app that tracks a patient’s behavior.

The app monitors a patient’s phone for both active and passive behavior signals, such as location data that could indicate a patient hasn’t left their home for several days or communication logs that indicate they haven’t texted or spoken on the phone to anyone for several weeks (the company claims the app only knows if a patient is using their phone to call or text — it doesn’t track who a user is calling or what’s being said). The patient’s care team can monitor the subsequent reports for signs that, in turn, may indicate changes to the patient’s overall mental health.
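The article does not say how Cogito Companion turns these signals into reports. As a purely hypothetical illustration, the Python sketch below counts trailing runs of days with no trips out of the home and days with no calls or texts, using only whether communication happened, not with whom. The `DaySummary` record, the `isolation_flags` function, and both thresholds are invented for this example and are not Cogito’s.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Callable, List


@dataclass(frozen=True)
class DaySummary:
    """Hypothetical per-day summary derived from a patient's phone."""
    day: date
    left_home: bool   # inferred from location data
    comm_events: int  # count of calls/texts only; no contacts or content


def trailing_run(days: List[DaySummary], predicate: Callable[[DaySummary], bool]) -> int:
    """Count how many of the most recent consecutive days satisfy predicate."""
    run = 0
    for d in reversed(days):
        if not predicate(d):
            break
        run += 1
    return run


def isolation_flags(days: List[DaySummary],
                    home_days_threshold: int = 3,
                    silent_days_threshold: int = 14) -> List[str]:
    """Return human-readable flags for a care team to review (hypothetical rules)."""
    ordered = sorted(days, key=lambda d: d.day)
    home_run = trailing_run(ordered, lambda d: not d.left_home)
    silent_run = trailing_run(ordered, lambda d: d.comm_events == 0)
    flags = []
    if home_run >= home_days_threshold:
        flags.append(f"has not left home for {home_run} days")
    if silent_run >= silent_days_threshold:
        flags.append(f"no calls or texts for {silent_run} days")
    return flags


# Example: 5 ordinary days followed by 15 days at home with no communication.
start = date(2018, 1, 1)
history = [DaySummary(start + timedelta(days=i),
                      left_home=(i < 5),
                      comm_events=2 if i < 5 else 0)
           for i in range(20)]
print(isolation_flags(history))
```

Counting trailing runs, rather than totals over the whole window, means a patient who was withdrawn weeks ago but has since re-engaged is not flagged.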

Cogito has teamed up with several healthcare systems throughout the country to test the app, which has found a particular niche in the veteran population. Veterans are at high risk for social isolation and may be reluctant to engage with the healthcare system, particularly mental health resources, often because of social stigma. “What we’ve found is that the app is acting as a way to build trust, in a way to drive engagement in healthcare more broadly,” Place said, adding that the app “effectively acts as a primer for behavioral change,” which seems to help veterans feel empowered and willing to engage with mental health services.

Here’s where the AI comes in: the app also uses machine learning algorithms to analyze “audio check-ins,” voice recordings the patient makes (somewhat akin to an audio diary). The algorithms are designed to pick up on emotional cues, much as a person would during a conversation. “We’re able to build algorithms that match the patterns in how people are speaking, such as energy, intonation, the dynamism or flow in a conversation,” Place explained.

From there, humans train the algorithm to learn what “trustworthiness” or “competence” sounds like, to identify the voice of someone who is depressed, and to distinguish the voice of a bipolar patient when they’re manic from when they’re depressed. While the app provides real-time information for patients to track their mood, the information also helps clinicians track their patients’ progress over time.
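Place lists energy among the speech patterns the algorithms match. Cogito’s models are not described in the article, but short-time energy is a standard, generic speech feature, and the Python sketch below shows one common way to compute it: split the recording into frames, take each frame’s root-mean-square (RMS) amplitude, and summarize. Treating the frame-to-frame variation in energy as a rough stand-in for the “dynamism” Place describes is an assumption made for illustration; the function names, frame length, and synthetic signals are likewise hypothetical.

```python
import math
from typing import Dict, List, Sequence


def frame_rms(samples: Sequence[float], frame_len: int) -> List[float]:
    """RMS amplitude of each non-overlapping, full-length frame."""
    values = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        values.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return values


def energy_features(samples: Sequence[float], frame_len: int = 400) -> Dict[str, float]:
    """Mean frame energy plus its coefficient of variation (a crude 'dynamism' proxy)."""
    rms = frame_rms(samples, frame_len)
    if not rms:
        raise ValueError("recording is shorter than one frame")
    mean = sum(rms) / len(rms)
    std = math.sqrt(sum((r - mean) ** 2 for r in rms) / len(rms))
    return {"mean_energy": mean, "energy_variation": std / mean if mean > 0 else 0.0}


# Synthetic one-second signals at 16 kHz: a steady 200 Hz tone versus the same
# tone whose volume slowly rises and falls twice per second.
RATE = 16_000
steady = [0.5 * math.sin(2 * math.pi * 200 * n / RATE) for n in range(RATE)]
varying = [(0.5 + 0.4 * math.sin(2 * math.pi * 2 * n / RATE))
           * math.sin(2 * math.pi * 200 * n / RATE) for n in range(RATE)]

print(energy_features(steady)["energy_variation"])
print(energy_features(varying)["energy_variation"])
```

The steady tone’s energy variation is essentially zero, while the modulated tone’s is much larger; a real system would combine many such features and learn from labeled recordings, as the paragraph above describes.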

At Cogito, Place has seen the capacity of artificial intelligence to help us “understand the human aspects of conversations and the human aspects of mental health.”

Understanding, though, is just the first step. The ultimate goal is finding a treatment that works, and that’s where doctors currently shine in relation to mental health issues. But where do robots stand when it comes to things that are more hands-on?

Under The (Robotic) Knife

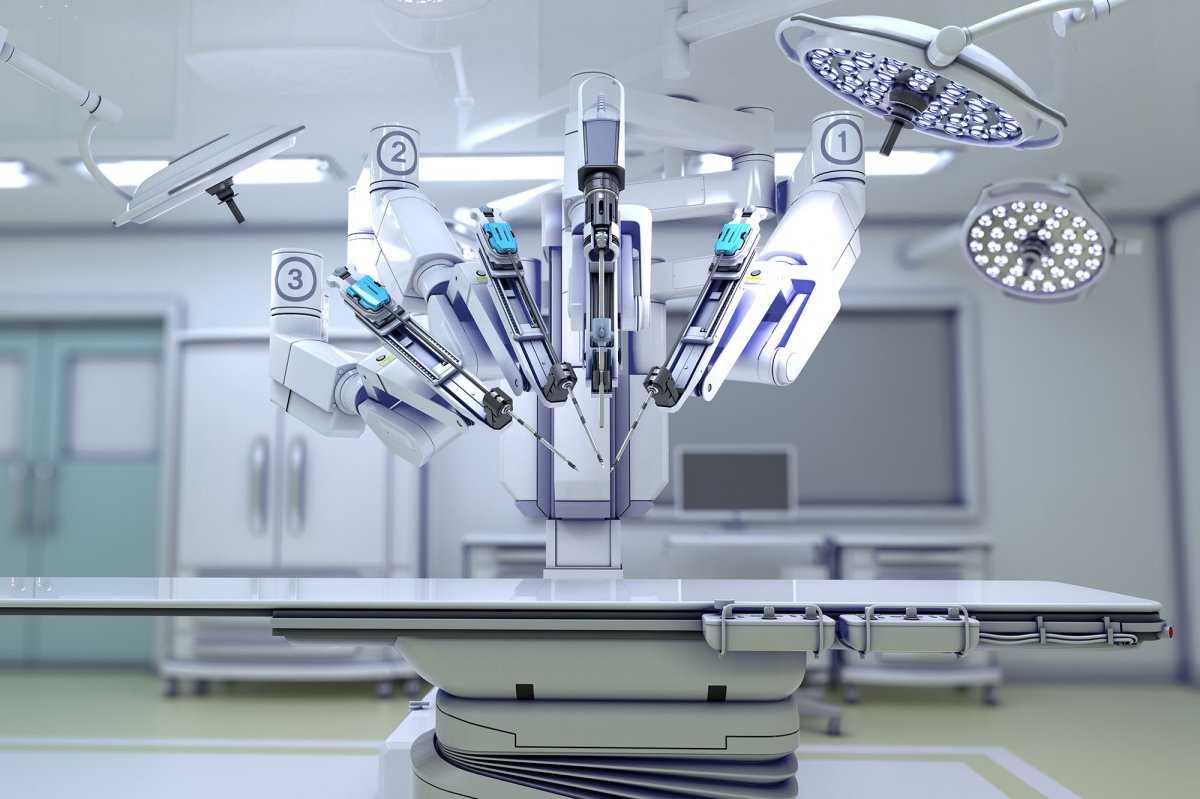

Over the last couple of decades, one of the most headline-making applications for AI in medicine has been the development of surgical robots.

In most cases to date, surgical robots (the da Vinci is the best known) function as an extension of the human surgeon, who controls the device from a nearby console. One of the more ambitious procedures, claimed to be a world first, took place in Montreal in 2010. It was the first procedure performed in tandem by both a surgical robot and a robot anesthesiologist (cheekily named McSleepy); data gathered on the procedure reflects the impressive performance of these robotic doctors.

In 2015, more than a decade after the first surgical robots entered the operating room, MIT performed a retrospective analysis of FDA data to assess the safety of robotic surgery. There were 144 patient deaths and 1,391 patient injuries reported during the period of study, mainly caused by technical difficulties or device malfunctions. The report noted that “despite a relatively high number of reports, the vast majority of procedures were successful and did not involve any problems.” But the number of events in more complex surgical areas (like cardiothoracic surgery) was “significantly higher” than in areas like gynecology and general surgery.

The takeaway would seem to be that, while robotic surgery can perform well in some specialties, the more complex surgeries are best left to human surgeons, at least for now. But this could change quickly, and as surgical robots become able to operate more independently of human surgeons, it will become harder to know whom to blame when something goes wrong.

Can a patient sue a robot for malpractice? As the technology is still relatively new, litigation in such cases constitutes something of a legal gray area. Traditionally, experts consider medical malpractice to be the result of negligence on the part of the physician or the violation of a defined standard of care. The concept of negligence, though, implies an awareness that AI inherently lacks, and while it’s conceivable that robots could be held to performance standards of some kind, those standards would first have to be established.

So if not the robot, who, or what, takes the blame? Can a patient’s family hold the human surgeon overseeing the robot accountable? Or should the company that manufactured the robot shoulder the responsibility? The specific engineer who designed it? This is a question that, at present, has no clear answer — but it will need to be addressed sooner rather than later.

Building, Not Predicting, the Future

In the years to come, AI’s role in medicine will only grow: according to a report by Accenture Consulting, the market for AI in medicine was worth $600 million in 2014 and is projected to reach $6.6 billion by 2021.
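Going from $600 million to $6.6 billion is an 11-fold increase over seven years. The Python sketch below converts the two Accenture figures into a compound annual growth rate, assuming steady year-over-year compounding; the article does not say how Accenture itself modeled the growth.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate that turns start_value into end_value over `years`."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


rate = cagr(600e6, 6.6e9, 2021 - 2014)
print(f"Implied growth: about {rate:.0%} per year")
```

That works out to roughly 41 percent a year, sustained every year from 2014 to 2021.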

The industry may be booming, but we shouldn’t integrate AI hurriedly or haphazardly. That’s in part because things that are logical to humans are not necessarily logical to machines. Take, for example, an AI trained to determine whether skin lesions were potentially cancerous. Dermatologists often use rulers to measure lesions they suspect to be cancerous, so rulers appeared in many of the images of those lesions. After being trained on those images, the AI was more likely to say a lesion was cancerous if a ruler was present in the image, according to The Daily Beast.
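The ruler problem is a case of a model latching onto a feature that correlates with the label in the training data without causing it. The toy Python example below uses an invented dataset, not the one from the study, in which rulers appear mostly in photos of malignant lesions. A “classifier” that looks only at whether a ruler is present, and never at the lesion, still scores well on that data. The class and function names are illustrative.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class LabeledImage:
    has_ruler: bool   # whether a ruler appears in the photo
    malignant: bool   # ground-truth biopsy label


def malignancy_rate(images: List[LabeledImage],
                    condition: Callable[[LabeledImage], bool]) -> float:
    """Fraction of malignant images among those matching condition."""
    subset = [img for img in images if condition(img)]
    return sum(img.malignant for img in subset) / len(subset)


def ruler_only_classifier(img: LabeledImage) -> bool:
    """Predicts 'malignant' whenever a ruler is visible; ignores the lesion entirely."""
    return img.has_ruler


# Invented toy data: suspicious lesions are usually photographed with a ruler.
images = ([LabeledImage(True, True)] * 40 + [LabeledImage(True, False)] * 10
          + [LabeledImage(False, True)] * 10 + [LabeledImage(False, False)] * 40)

accuracy = sum(ruler_only_classifier(img) == img.malignant for img in images) / len(images)
print(f"P(malignant | ruler)    = {malignancy_rate(images, lambda i: i.has_ruler):.2f}")
print(f"P(malignant | no ruler) = {malignancy_rate(images, lambda i: not i.has_ruler):.2f}")
print(f"Ruler-only accuracy     = {accuracy:.2f}")
```

On images where rulers are used differently, for instance if every lesion were photographed with one, this rule would fall apart, which is how such shortcuts can hide behind good test scores.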

Algorithms may also inherit our biases, in part because there’s a lack of diversity in the materials used to train AI. In medicine and elsewhere, the data the machines are trained on is largely determined by who is conducting the research and where it’s being done. White men still dominate the fields of clinical and academic research, and they also make up most of the patients who participate in clinical trials.

A tenet of medical decision-making is whether the benefits of a procedure or treatment outweigh the risks. When considering whether or not AI is ready to be on equal footing with a human surgeon in the operating room, a little risk-benefit and equality analysis will go a long way.

“I think if you build [the technology] with the right stakeholders at the table, and you invest the extra effort to be really inclusive in how you do that, then I think we can change the future,” Nundy, of Human Dx, said. “We’re trying to actually shape what the future holds.”

Though we sometimes fear that robots are leading the charge toward integrating AI in medicine, humans are the ones having these conversations and, ultimately, driving the change. We decide where AI should be applied and what’s best done the old-fashioned way. Instead of trying to predict what a doctor’s visit will be like in 20 years, physicians can use AI as a tool to start building the future they want, the future that’s best for them and their patients, today.

Editor’s note: This article originally misspelled Shantanu Nundy’s name and title. They have been updated accordingly. We regret the error.