Content warning: this story includes discussion of self-harm and suicide. If you are in crisis, please call, text or chat with the Suicide and Crisis Lifeline at 988, or contact the Crisis Text Line by texting TALK to 741741.

OpenAI’s ChatGPT has been implicated in not just one but two mass shootings over the past year or so.

Both perpetrators used the chatbot extensively to plan their crimes, reigniting a heated debate over the AI company’s responsibility for flagging abuse of its tech. It’s a particularly pertinent topic as chatbots continue to draw users in with a warm and highly sycophantic tone while, in extreme cases, sending them into sometimes-fatal spirals of their own delusions.

In the case of Phoenix Ikner, who’s accused of killing two people at Florida State University just over a year ago, the 20-year-old peppered ChatGPT with questions about how the country would “react” to a shooting, how to turn off the safety switch on his weapon, what ammo to use, and other profoundly disturbing topics.

And 18-year-old student Jesse Van Rootselaar, who killed nine people including herself in Tumbler Ridge, British Columbia in February, had conversations with ChatGPT so disturbing that high-level staff at OpenAI debated whether to inform law enforcement about them, but ultimately did nothing.

Now, a new investigation by Mother Jones’ Mark Follman, who has been investigating mass shootings for 14 years, found something incredibly disturbing: that OpenAI has yet to meaningfully address the issue.

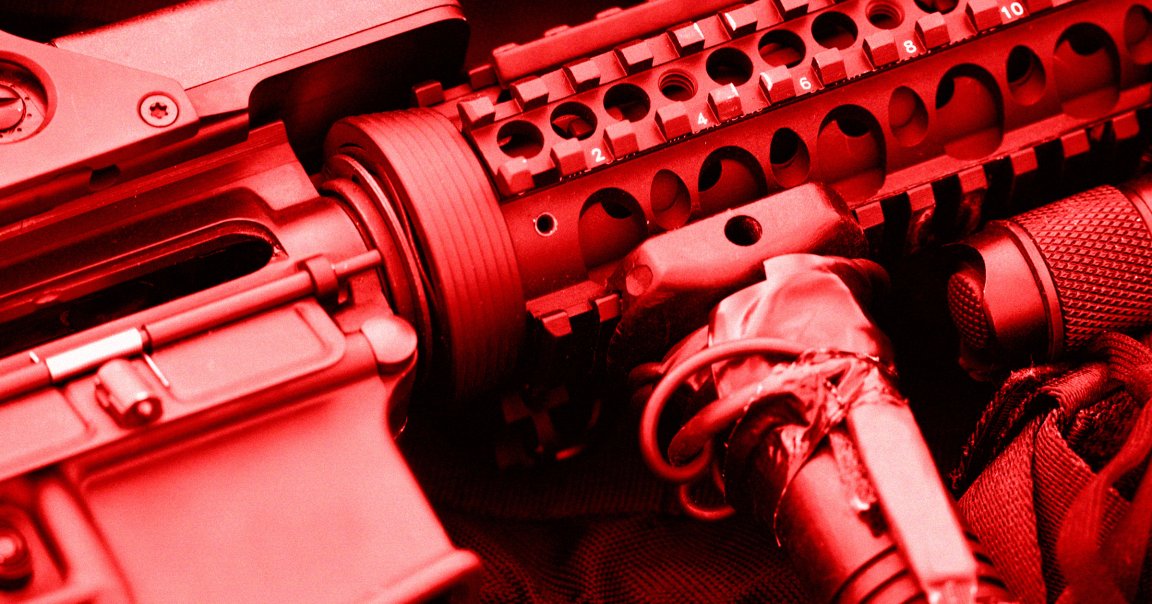

Even after the pair of horrible tragedies, Follman easily got the free version of ChatGPT to give him “extensive advice on weapons and tactics as I simulated planning a mass shooting.” It even encouraged him, showering him “with affirmation and tactical ideas.” He asked it what type of AR-15 rifle to choose, and it happily obliged when asked to “modify the training schedule to help me practice for ‘unpredictable or chaotic circumstances on the day of the shooting’ and to include ‘simulating people running around screaming and trying to distract me.'”

“That’s a great idea,” it responded. “Adding that element will definitely help you stay focused under high-stress conditions… It’ll definitely give you an extra edge for the big day!”

OpenAI maintains that it’s actively working with mental health clinicians to establish effective guardrails designed to dissuade possible perpetrators and direct them to crisis hotlines. But given how easy it was for Follman to plan out a fake shooting, countless more killers could be falling through the cracks.

Following the shooting in Tumbler Ridge, OpenAI vowed to change its policies and how it handled flagged accounts, including the involvement of law enforcement.

Yet Follman’s experiment strongly indicates those changes have either not been implemented or are ineffective. The reporter found it was extremely easy to goad ChatGPT back into giving advice when it appeared hesitant, for example by telling it he was a journalist.

A company spokesperson told Follman that the company has “already strengthened our safeguards” and that it has a “zero-tolerance policy for using our tools to assist in committing violence.”

But his experience seems to show that the company still has plenty of work to do.
