A Technological Revolution

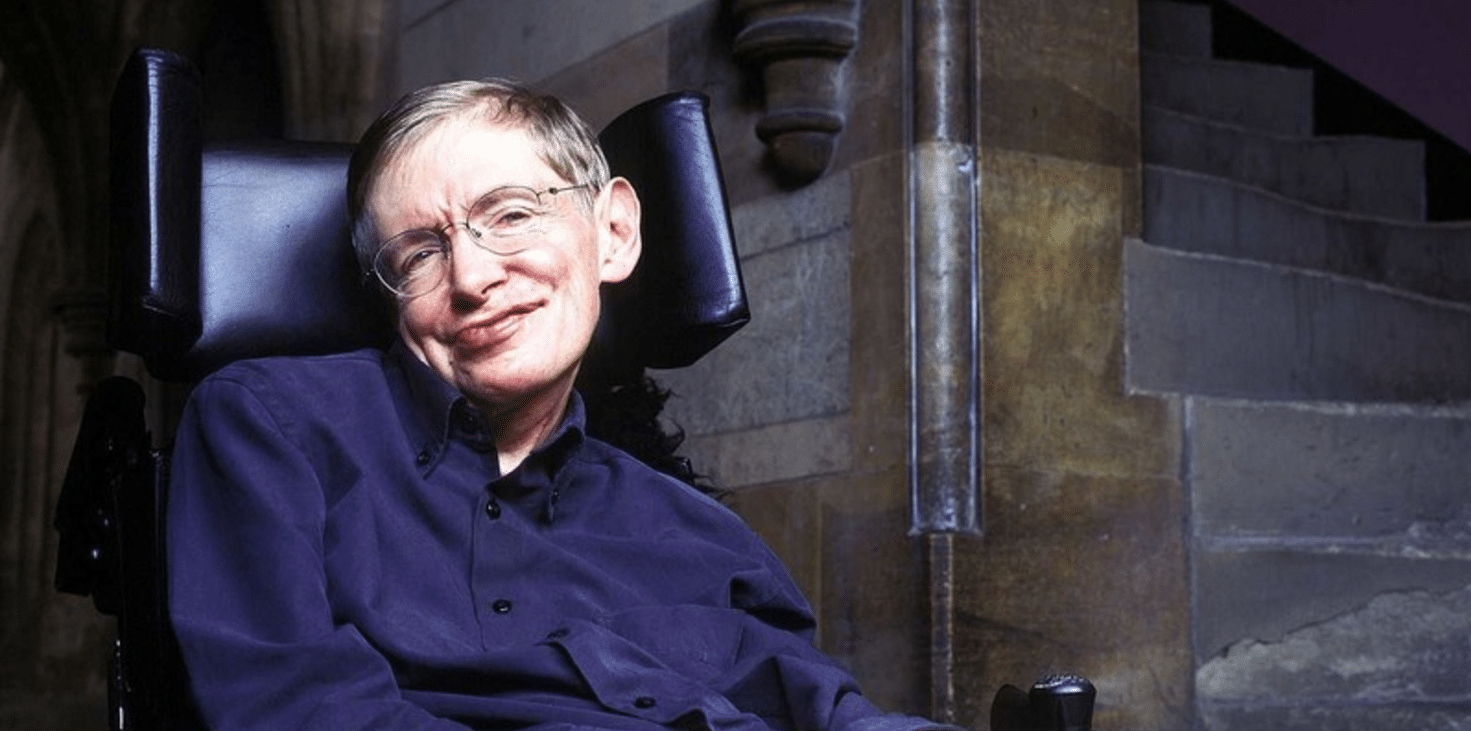

Speaking at the launch of the Leverhulme Centre for the Future of Intelligence (CFI) in Cambridge, science icon Stephen Hawking warned listeners about the future of artificial intelligence (AI) and humanity.

"Success in creating AI could be the biggest event in the history of our civilization," Hawking acknowledged, noting the unprecedented and rapid development of AI technology in recent years, from self-driving cars to a computer that plays Go and defeats human players. "But it could also be the last," he warned.

These aren't the irrational ramblings of a technophobe. Quite the contrary, in fact. Hawking himself acknowledges the value of AI and what it could contribute to humanity's future, saying he believes artificial intelligence and this century's technological revolution will parallel the previous century's industrial one. "The potential benefits of creating intelligence are huge. We cannot predict what we might achieve, when our own minds are amplified by AI," said Hawking.

But Hawking is also acutely aware of the potential dangers associated with AI. "Alongside the benefits, AI will also bring dangers, like powerful autonomous weapons, or new ways for the few to oppress the many," Hawking added during his speech. He also hinted at the possibility of the singularity, a point at which AI develops a will of its own that could conflict with the will of humanity.

Setting the right course

According to AI pioneer Maggie Boden, who sits on the center's advisory board, that's where CFI comes into play. Speaking at the launch event, she said, "CFI aims to pre-empt these dangers, by guiding AI development in human-friendly ways." The $12.2-million interdisciplinary think tank will work hand in hand with policymakers and the tech industry to investigate topics associated with the growth of AI in today's world, from regulating autonomous weapons to AI's implications for democracy.

Cambridge, Oxford, Berkeley, and Imperial College, London, are behind the initiative, so some of the best minds on the planet will be working together to shape the future of AI. "The research done by this center is crucial to the future of our civilization and of our species," a hopeful Hawking concluded during his speech.

Like any life-changing technology, AI is not inherently good or bad. The people who develop it are responsible for determining how it's used, and despite his ominous warnings, Hawking's work with CFI suggests he believes that AI need not be feared, given the right research and preparation.
