Recognizing Art

Deep neural networks have progressed immensely in recent years. Far from being moonshots, they now outperform humans at several tasks. They can understand text containing words they have not previously encountered, translate between languages, and even cut transcription errors by half. And now, it seems, they can recognize and replicate art in a number of styles.

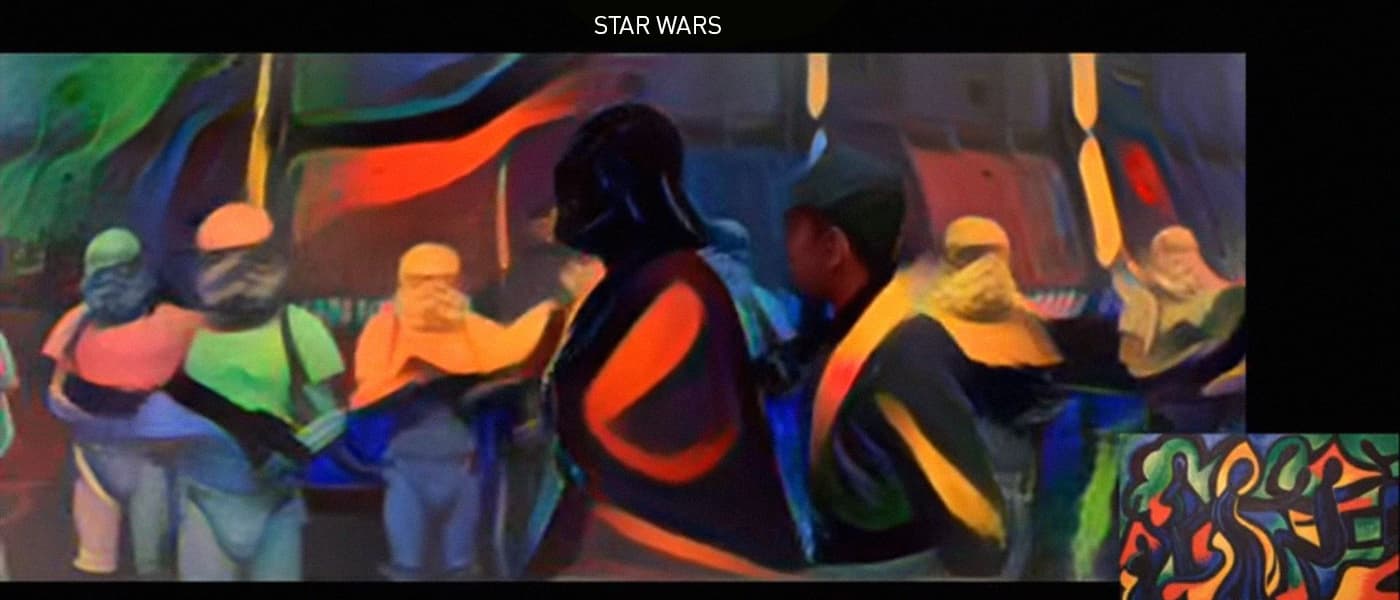

A team from the University of Freiburg in Germany has used deep neural networks to copy the artistic style of famous painters and apply it to video clips from movies and TV shows. Van Gogh's Starry Night style on a clip from Ice Age? No problem.

One obvious drawback to the technology is that it needs serious computing power: each frame takes three minutes to process on a system using NVIDIA's $1,000-plus Titan X graphics card.
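To put the three-minutes-per-frame figure in perspective, here is a rough back-of-the-envelope sketch. The 24 frames-per-second rate and the 10-second clip length are assumptions chosen for illustration, not figures from the researchers, and the function name is hypothetical.

```python
def stylization_hours(clip_seconds, fps=24.0, minutes_per_frame=3.0):
    """Estimate the total processing time, in hours, for one clip.

    fps is an assumed frame rate; minutes_per_frame is the
    three-minute figure quoted for a Titan X system.
    """
    frames = clip_seconds * fps
    return frames * minutes_per_frame / 60.0


# A 10-second clip at the assumed 24 fps is 240 frames.
hours = stylization_hours(10)
print(f"{hours:.0f} hours")  # prints "12 hours"
```

Under those assumptions, even a short clip ties up a high-end card for half a day.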

These constraints are not so serious that time and development can't chip away at them, however. It seems likely that smart TVs of the future could offer art filtering as a feature within the decade.

Copying and Pasting

According to MIT Tech Review, the whole thing works something like this: deep neural networks operate in layers, each of which extracts information from an image and then passes the leftover data on to the next layer. The first few layers pick up broad patterns such as color, while progressively deeper layers extract more detail, which allows object recognition.

Previous research has shown that one can capture the style of a painting or image by analyzing not the information at each layer but the correlations among the features at each layer. The style can then be separated from the image's content and copied into another image or form.
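One common way to express "correlations at a layer" is a Gram matrix: the inner product of every feature channel with every other channel. The sketch below is a minimal illustration in plain Python, not the researchers' code; the function names and the tiny hand-written feature values are assumptions made for the example.

```python
def gram_matrix(features):
    """Channel-to-channel correlations for one layer.

    features is a list of channels, each a flat list of activations
    (one value per spatial position). Entry [i][j] of the result is
    the average product of channel i and channel j over all positions.
    """
    n = len(features[0])
    return [
        [sum(a * b for a, b in zip(fi, fj)) / n for fj in features]
        for fi in features
    ]


def style_distance(feats_a, feats_b):
    """Sum of squared differences between two Gram matrices."""
    ga, gb = gram_matrix(feats_a), gram_matrix(feats_b)
    return sum(
        (x - y) ** 2
        for row_a, row_b in zip(ga, gb)
        for x, y in zip(row_a, row_b)
    )


# Two channels over four spatial positions (made-up values).
original = [[1.0, 2.0, 3.0, 4.0], [0.0, 1.0, 0.0, 1.0]]
# The same activations with the spatial positions reversed.
rearranged = [[4.0, 3.0, 2.0, 1.0], [1.0, 0.0, 1.0, 0.0]]

print(style_distance(original, rearranged))  # prints 0.0
```

Rearranging where the activations occur leaves the Gram matrix unchanged, which is why this kind of statistic describes how an image is painted rather than what it depicts.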

But translating this to video creates a whole host of problems. Small shifts between frames can mean big differences in the way the style is applied. As edges start moving or become occluded, the video becomes jumpier and more incoherent. To solve this, the researchers had to develop an algorithm that analyzes the changes between successive frames and prevents big differences.
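The idea of discouraging big differences between successive frames can be sketched as a penalty term. This is a simplified illustration under stated assumptions, not the team's algorithm: frames are flat lists of pixel values, the function name and mask are hypothetical, and the sketch compares pixels at the same position without compensating for motion.

```python
def temporal_penalty(prev_frame, curr_frame, valid_mask):
    """Mean squared change between two stylized frames.

    Only pixels whose mask entry is 1 are counted. Pixels marked 0
    (for example, newly occluded regions or moving edges) are ignored,
    since they are expected to change.
    """
    total = 0.0
    count = 0
    for prev, curr, valid in zip(prev_frame, curr_frame, valid_mask):
        if valid:
            total += (curr - prev) ** 2
            count += 1
    return total / count if count else 0.0


prev_frame = [10, 20, 30, 40]
curr_frame = [10, 20, 90, 40]  # only the third pixel changed

# Treat the third pixel as occluded: no penalty.
print(temporal_penalty(prev_frame, curr_frame, [1, 1, 0, 1]))  # prints 0.0
# Treat every pixel as stable: the jump is penalized.
print(temporal_penalty(prev_frame, curr_frame, [1, 1, 1, 1]))  # prints 900.0
```

Adding a term like this to the stylization objective pushes each new frame to stay close to the previous one wherever the scene itself has not changed.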
